Data governance best practices are proven approaches, techniques, and principles that consistently produce successful governance outcomes across organizations. These are not theoretical frameworks from consultants who have never implemented governance — they are patterns distilled from hundreds of real implementations, representing what actually works when governance meets organizational reality.

- The Foundation: What Makes Governance Work

- Organizational Best Practices

- Policy and Standards Best Practices

- Data Quality Best Practices

- Metadata and Data Catalog Best Practices

- Access Governance Best Practices

- Technology and Tools Best Practices

- Measurement and Continuous Improvement Best Practices

- Change Management and Adoption Best Practices

- Industry-Specific Best Practices

- Frequently Asked Questions

- Summary

In 2026, data governance has reached a maturity inflection point. Organizations no longer ask “do we need data governance?” They ask “how do we do governance well?” The difference between effective governance programs and failed initiatives isn’t technology or budget — it’s execution. The organizations succeeding with governance follow specific patterns. Those failing make predictable mistakes.

This guide is written from the perspective of a Chief Data Officer who has built governance programs at Wells Fargo, the Department of Veterans Affairs, and Nestle Purina — three very different organizations with different cultures, constraints, and governance needs. What you’ll read isn’t consultant theory. It’s hard-won wisdom from implementations that worked, initiatives that failed, and the continuous refinement that separates governance programs that deliver value from those that create bureaucracy.

You’ll learn what governance practices actually drive adoption versus what sounds good in PowerPoint but fails in practice, how to start governance programs that build momentum rather than stall, what organizational models work for different contexts, how to measure governance effectiveness beyond activity metrics, and how to evolve governance as organizations mature.

This isn’t a comprehensive catalog of every possible governance practice. It’s a focused guide to the practices that matter most — the 20% of practices that drive 80% of governance success.

The Foundation: What Makes Governance Work

Before diving into specific practices, understanding what makes governance successful is essential. Three foundational principles separate effective governance from governance theater.

Governance Serves Business Outcomes, Not Compliance Checklists

The most common governance failure is treating governance as a compliance obligation rather than a business enabler. Organizations create governance programs to satisfy auditors or regulators, measuring success by policies written and training delivered rather than business value created.

Effective governance starts with business outcomes: better decisions from trusted data, faster analytics from findable and understandable data, reduced operational costs from better data quality, mitigated risks from controlled data access, and regulatory compliance as a byproduct of good data management.

When governance focuses on business value, adoption follows naturally. Business users engage because governance helps them work better. When governance focuses on compliance, users view it as bureaucratic overhead and find workarounds.

Every governance initiative should answer: “What business problem does this solve?” If the answer is “the auditors require it,” you have a compliance initiative, not governance.

Governance Is Distributed, Not Centralized

Organizations often attempt to centralize all governance decisions and execution in a data governance office. A small team writes policies, reviews all data access requests, documents all metadata, and attempts to govern data across the entire enterprise.

This doesn’t scale and it doesn’t work. Central teams lack domain expertise to make informed decisions about data they don’t understand. They become bottlenecks when all governance flows through them. They can’t force adoption across business units that don’t report to them.

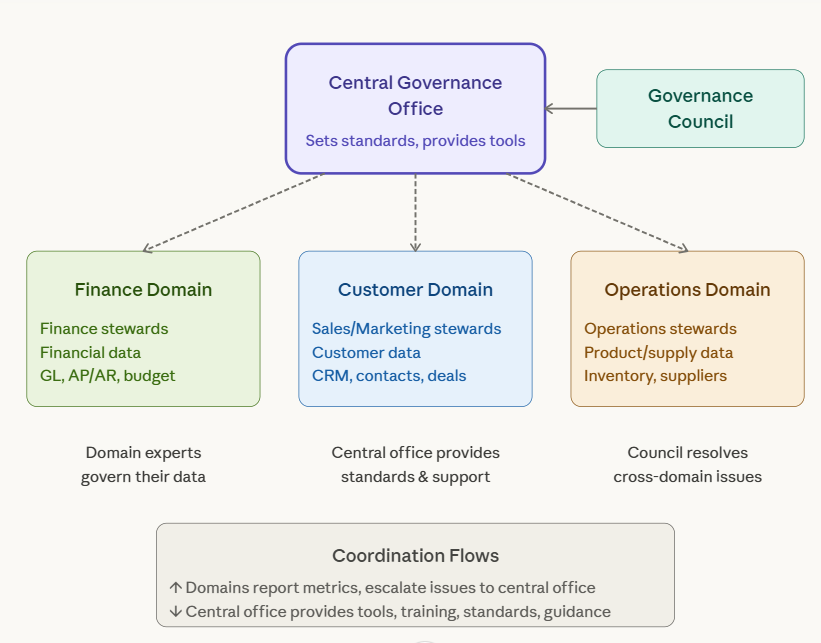

Effective governance distributes accountability and execution while centralizing standards and oversight. Data stewards in business units govern their domains with deep context. The central governance office sets standards, provides tools and support, measures effectiveness, and resolves cross-domain issues.

This distributed model requires trust. Central governance teams must accept that business unit stewards will make some decisions differently than the central team would. Business units must accept that standards apply even when inconvenient. The alternative — fully centralized governance — simply fails at scale.

Governance Evolves Through Iteration, Not Big Bang

Organizations frequently design comprehensive governance frameworks covering every data management aspect, then attempt to implement everything simultaneously. They appoint dozens of stewards, deploy multiple governance tools, write hundreds of policies, and expect the organization to embrace governance fully within months.

This big-bang approach overwhelms organizations. Teams cannot absorb that much change at once. Governance processes conflict with existing workflows, creating friction. The organization experiences governance as disruption rather than improvement.

Effective governance starts small, demonstrates value, and expands deliberately. Begin with high-priority use cases where governance impact is clear. Implement lightweight processes that integrate with existing workflows. Build on early successes to expand scope and sophistication. Evolve governance maturity over years, not months.

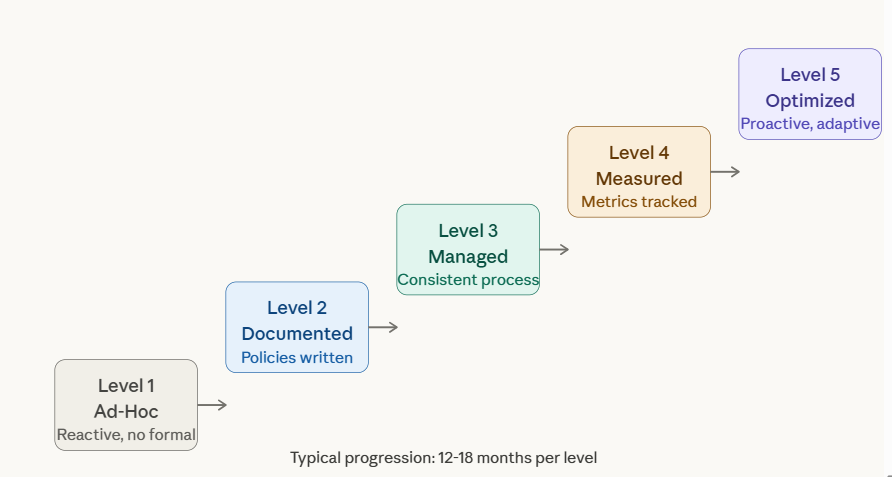

Maturity models provide useful frameworks for this evolution. Most organizations start at Level 1 (ad-hoc, reactive) and progress through Level 2 (documented policies), Level 3 (consistent processes), Level 4 (measured effectiveness), to Level 5 (optimized, proactive). Each level takes months or years. Attempting to jump from Level 1 to Level 4 in one initiative fails reliably.

Organizational Best Practices

How governance is structured organizationally determines whether it succeeds or becomes marginalized.

Establish Executive Sponsorship with Real Authority

Governance requires executive sponsorship that provides authority, not just endorsement. A sponsor who sends encouraging emails but doesn’t prioritize governance in operational decisions provides hollow support.

Effective sponsorship includes clearing organizational roadblocks when business units resist governance requirements, allocating budget for governance tools and staff, ensuring governance considerations in strategic decisions, resolving conflicts between governance and other priorities, and holding leaders accountable for governance within their organizations.

The sponsor should be a C-level executive, typically the Chief Data Officer or Chief Operating Officer. The sponsor must have organizational clout to enforce governance when business units prefer convenience over compliance.

Governance without executive sponsorship becomes an advisory function that can be ignored when inconvenient. With true sponsorship, governance has teeth.

Create Governance Councils That Make Decisions, Not Recommendations

Many organizations create governance councils that meet monthly, discuss governance topics, and produce recommendations that nobody implements. Council membership becomes ceremonial rather than operational.

Effective governance councils have explicit decision-making authority over data standards and policies, data access and usage approvals for sensitive data, cross-domain data quality issues and resolution priorities, governance resource allocation and prioritization, and exceptions to governance standards.

Council decisions should be binding, not advisory. When the council approves a data classification policy, that policy becomes organizational standard. When the council prioritizes customer data quality over product data quality for the quarter, resources shift accordingly.

Council membership should include senior leaders with organizational authority — directors and VPs, not middle managers. Members must have mandate to commit their organizations to governance decisions. Regular council meetings should focus on decisions, not status updates. Status updates happen asynchronously; meetings are for decisions that require discussion and alignment.

Implement Federated Stewardship, Not Centralized Stewardship

Data stewards need domain expertise to govern effectively. A central team governing all data lacks the business context for informed decisions about data they don’t use or understand.

Effective governance implements federated stewardship where stewards remain in business units but coordinate through governance structure. Finance designates stewards for financial data. Sales designates stewards for customer data. Supply chain designates stewards for supplier and product data.

Central governance provides steward community support through training and onboarding, governance tools and platforms, standards and templates, escalation paths for cross-domain issues, and performance measurement and recognition.

This federated model requires more coordination than centralized stewardship but scales better and achieves higher data quality through domain expertise.

Organizations should explicitly allocate steward time — typically 20-30% for part-time stewards or full-time dedicated stewards for critical domains. Stewardship cannot be “in addition to” someone’s existing full-time role without removing other responsibilities.

Embed Governance in Existing Workflows, Don’t Create Parallel Processes

Organizations often create governance processes separate from operational workflows. Data access requires a governance approval process completely distinct from IT access provisioning. New data assets require governance registration separate from deployment processes.

These parallel processes create friction. Users must remember to follow governance processes in addition to their normal work. Governance becomes overhead rather than enablement.

Effective governance embeds into existing workflows. Data access requests go through existing IT request systems with governance approval as part of that workflow. Data deployment processes include governance checkpoints before production promotion. Project intake processes include governance review alongside financial and technical review.

Integration requires collaboration with IT, project management offices, and operational teams to modify existing processes rather than create new ones. This takes more effort initially but drives higher adoption because governance becomes part of “how we work” rather than separate compliance overhead.

Policy and Standards Best Practices

Governance policies and standards should enable rather than constrain.

Write Policies at the Right Level of Detail

Organizations often write policies that are either too vague to be actionable or too prescriptive to accommodate legitimate variation. A policy stating “data must be classified” provides no guidance on how. A policy mandating “all customer data must use AES-256 encryption at rest and TLS 1.3 in transit” may be inappropriate for low-sensitivity customer data like product preferences.

Effective policies operate at multiple levels. Enterprise policies establish principles and high-level requirements applicable across all data. Standards provide specific implementation guidance for common scenarios. Procedures document step-by-step processes for executing standards.

Example: Enterprise policy states “Data must be classified by sensitivity level and protected according to classification.” Standards document specific controls for each classification level (public, internal, confidential, restricted). Procedures explain how to classify data and implement controls.

This tiered approach allows principles to remain stable while standards and procedures evolve with technology and organizational change.

Make Policies Discoverable and Understandable

Governance policies stored in SharePoint folders or document management systems that require extensive navigation become effectively invisible. Users don’t find policies when needed. Policies might as well not exist.

Effective governance makes policies discoverable through centralized policy repository accessible via intuitive navigation and search, integration with relevant workflows (policy links in data access request forms, classification policies in data deployment checklists), plain language rather than legal or technical jargon, and examples illustrating policy application to common scenarios.

Consider implementing policy management platforms rather than relying on document repositories. Policy platforms provide versioning, approval workflows, training tracking, and attestation capabilities that document repositories lack.

Create Policy Exceptions Process, Don’t Make Policies Inflexible

Policies designed for common cases sometimes don’t fit edge cases. An inflexible policy forced on an inappropriate use case creates frustration and incentivizes workarounds.

Effective governance includes formal exception processes allowing documented deviation from policy with business justification, risk assessment and mitigation approach, executive approval at appropriate level, time-limited approval with periodic review, and tracking and reporting of all exceptions.

Exception processes acknowledge that policies cannot cover every scenario while maintaining policy authority for standard cases. The goal is informed deviation, not circumvention.

Organizations should monitor exception frequency and patterns. High exception rates signal policies that need refinement or scenarios requiring separate standards.

Establish Data Classification Framework Early

Data classification is foundational for governance. Classification determines access controls, encryption requirements, retention periods, and handling procedures. Without classification, all data gets treated the same — either over-controlled (treating all data as highly sensitive, creating unnecessary friction) or under-controlled (treating all data as low-sensitivity, creating risk).

Effective classification frameworks typically use 3-5 levels: Public (no access restriction), Internal (restricted to employees), Confidential (restricted to specific teams), and Restricted (highest protection for regulated data). Some organizations add Highly Confidential between Confidential and Restricted.

Classification criteria should be clear and based on impact of unauthorized disclosure, regulatory requirements, business sensitivity, and privacy implications.

Organizations should classify data at the data element level where practical (customer name is confidential, customer purchase history is internal), with dataset-level classification based on highest sensitivity element when element-level is impractical.

Data Quality Best Practices

Data quality is the most tangible governance outcome. Quality practices directly demonstrate governance value.

Define Quality Requirements by Use Case, Not Universally

Organizations often attempt to define universal quality standards like “all data must be 99% accurate” without considering that quality requirements vary by use case. Customer names in marketing analytics don’t need the same accuracy as customer names in billing systems.

Effective quality governance defines requirements by critical data elements and their use cases. Financial reporting data requires near-perfect accuracy (99.5%+) due to regulatory requirements. Exploratory analytics data may accept lower quality (95%) in exchange for breadth.

Quality requirements should specify completeness (required fields populated), accuracy (values are correct), timeliness (data freshness), validity (conformance to business rules), and consistency (values match across systems).

Requirements become standards that stewards and data owners commit to maintaining rather than aspirational goals nobody achieves.

Implement Automated Quality Monitoring, Not Manual Sampling

Manual data quality assessment through sampling doesn’t scale and provides point-in-time views that miss temporal issues. A manual quarterly review discovers quality problems months after they began.

Effective quality governance implements automated continuous monitoring using data quality platforms that profile data, execute validation rules, track quality trends, and alert on threshold breaches.

Automation enables proactive quality management. Issues are detected and addressed before they impact business operations. Quality metrics provide objective evidence of governance effectiveness.

Organizations should start with basic quality checks on critical data (completeness, validity, duplicate detection) and expand sophistication over time (cross-system consistency, statistical anomaly detection, referential integrity).

Assign Quality Ownership and Accountability

Quality without ownership doesn’t improve. When everyone is responsible for quality, nobody is actually responsible.

Effective quality governance assigns data owners accountable for quality within their domains and data stewards responsible for quality monitoring and issue remediation. Quality metrics become performance indicators for owners and stewards.

Quality SLAs can formalize commitments. The customer data owner commits that customer data will maintain 95% completeness and 98% accuracy with quality issues resolved within defined timeframes based on severity.

SLAs create accountability and provide escalation mechanisms when quality degrades below commitments.

Address Root Causes, Not Just Symptoms

Organizations often treat data quality reactively — correcting bad data when discovered but not addressing why bad data was created. Without root cause remediation, the same quality issues recur indefinitely.

Effective quality governance includes root cause analysis for significant or recurring quality issues. Is the issue caused by data entry errors (training or user interface problem), system integration failures (technical issue), process gaps (missing validation), or business rule changes (communication problem)?

Root cause determines remediation approach. Data entry errors require training or UI improvement. Integration failures require technical fixes. Process gaps require new controls.

Organizations should track quality issue root causes and remediation outcomes. Recurring root causes signal systemic problems requiring broader intervention.

Metadata and Data Catalog Best Practices

Metadata makes data usable. Catalog practices determine whether metadata enables or languishes unused.

Build Business Glossary Before Technical Catalog

Organizations often deploy data catalog tools and begin cataloging technical data assets before establishing business terminology. This creates catalogs full of technical metadata (table names, column definitions, lineage) but lacking business context that makes data discoverable and understandable to business users.

Effective metadata governance starts with business glossary development — documented definitions of business terms, relationships between terms, data stewards responsible for term definitions, and approved usage guidance.

Business glossary provides the semantic layer that makes technical data understandable. When a business user searches the catalog for “customer lifetime value,” the glossary explains the business definition and links to datasets containing that information.

Glossary development should engage business subject matter experts, not IT. Business terms must reflect how the business actually uses terminology, not how IT thinks the business should use terminology.

Make Metadata Enrichment a Required Step, Not Optional

Organizations often make metadata enrichment voluntary. Catalog tools are deployed and users are encouraged to add business descriptions, tag data with relevant terms, and document usage patterns. Adoption remains low because enrichment is additional work with no clear consequences for non-compliance.

Effective metadata governance makes enrichment mandatory for new data assets and critical existing assets. Data cannot be deployed to production without minimum metadata (business description, data owner, classification, quality metrics). Catalog completeness becomes a gate in deployment processes.

This requires integration with DevOps pipelines and deployment workflows so metadata requirements are enforced systematically rather than relying on manual compliance.

Metadata minimums should be realistic — comprehensive metadata is ideal but requiring excessive detail before deployment creates friction. Start with basics (description, owner, classification) and expand requirements as catalog culture matures.

Incentivize Catalog Usage Through Integration

Catalogs fail when they’re separate tools that users must remember to visit. Successful catalogs integrate into tools users already use daily.

Effective catalog programs integrate catalog search into BI tools, data science platforms, and collaboration tools. Analysts searching for data in Tableau can search the catalog without leaving Tableau. Data scientists working in notebooks can query catalog metadata programmatically.

Integration makes catalog discovery natural rather than additional work. Usage increases when catalog is embedded in workflow rather than separate.

Organizations should track catalog usage metrics (searches, asset views, metadata contributions) and investigate low usage. Low usage typically signals poor integration or irrelevant content, not lack of data discoverability needs.

Maintain Metadata Through Automation, Not Just Manual Entry

Manual metadata maintenance doesn’t scale. Organizations with thousands of data assets cannot maintain current metadata through manual steward effort alone.

Effective metadata programs leverage automation to harvest technical metadata (schema, lineage, usage statistics) from data platforms, infer relationships and patterns through machine learning, and propagate metadata across related assets.

Manual effort focuses on business metadata automation cannot generate — business definitions, usage guidance, quality context, and domain expertise.

Automated metadata harvesting requires integration with source systems (databases, data warehouses, BI tools, ML platforms). Modern catalogs provide connectors for common platforms. Custom integrations may be necessary for proprietary systems.

Access Governance Best Practices

Access governance balances data accessibility with security and privacy.

Implement Role-Based Access, Not Individual Grants

Organizations often manage data access through individual user grants. Sarah gets access to customer data. John gets access to financial data. This approach doesn’t scale and creates administrative burden as user roles change.

Effective access governance implements role-based access control where access is granted to roles (data analyst, financial controller, customer service representative) and users are assigned to roles based on job function.

Role-based access scales as organizations grow. New analysts automatically receive appropriate data access by role assignment rather than individual grant requests. Role changes update access centrally rather than tracking down individual grants.

Roles should be defined by job function and data needs, not organization structure. The role “customer analytics” applies to analysts across marketing, sales, and customer service rather than creating separate roles per department.

Organizations should periodically review role definitions and membership to ensure roles remain aligned with actual job functions and data needs.

Establish Clear Access Request and Approval Workflows

Access request processes are often ad-hoc. Users email various people asking for data access. Some requests are approved informally. Others languish unanswered. There’s no tracking or consistency.

Effective access governance implements formalized request workflows where users submit requests through standard process (ticketing system, workflow tool, governance platform), requests route to appropriate approvers based on data sensitivity and user role, approvers have context to make informed decisions (data classification, requestor justification, current access), and fulfillment happens promptly after approval.

Workflow tracking provides accountability. Requests don’t get lost. Approval decisions are documented. Cycle time can be measured and improved.

Target cycle times should be aggressive for routine requests — 24-48 hours for approval, 1 week for fulfillment. Long cycle times frustrate users and incentivize workarounds.

Conduct Periodic Access Reviews, Not Just Initial Approval

Access granted correctly when first approved can become inappropriate over time as roles change, projects end, or data sensitivity increases. Access creep where users accumulate permissions over time creates security and privacy risks.

Effective access governance implements periodic access reviews where access is recertified quarterly or semi-annually, data owners or stewards review current access and remove inappropriate grants, and users attest that their access remains appropriate for their current role.

Reviews should be risk-based. High-sensitivity data access is reviewed quarterly. Lower-sensitivity data may be reviewed annually. Automated tools can highlight access anomalies (users with unusually broad access, access unused for extended periods) to focus review attention.

Access reviews are administrative burden but necessary for access governance hygiene. Automation helps by highlighting likely issues rather than requiring manual review of every access grant.

Log and Monitor Data Access, Especially Sensitive Data

Access controls prevent unauthorized access but don’t detect misuse by authorized users. An analyst with legitimate customer data access could still misuse that data through unauthorized disclosure or inappropriate analysis.

Effective access governance implements comprehensive access logging (who accessed what data when), anomaly detection (unusual access patterns), and sensitive data monitoring (downloads of large datasets, access to highly sensitive data).

Logging enables investigation when issues are suspected and provides evidence for compliance audits. Monitoring enables proactive detection of potential misuse before harm occurs.

Organizations should balance monitoring with employee privacy and trust. Excessive monitoring creates hostile work environment. Targeted monitoring of sensitive data with clear policies and transparency strikes appropriate balance.

Technology and Tools Best Practices

Technology enables governance at scale but doesn’t create governance.

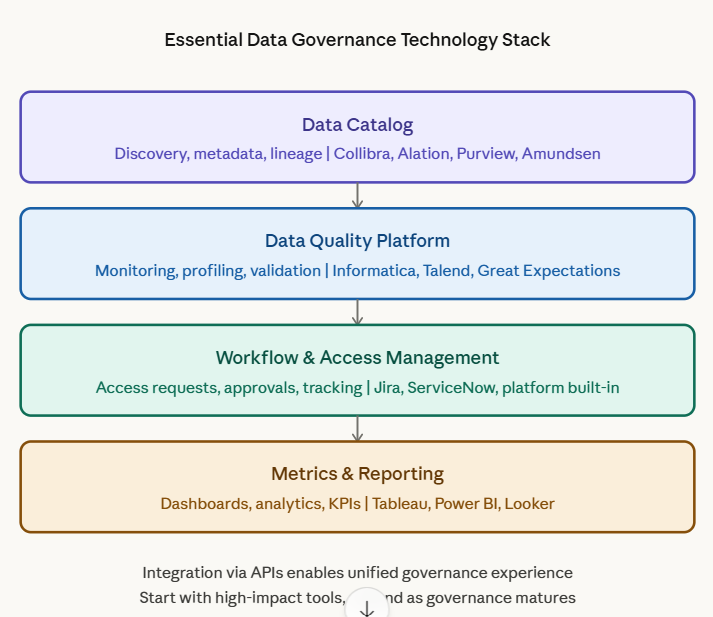

Implement Fit-for-Purpose Tooling, Not All-in-One Platforms

Organizations often seek comprehensive governance platforms that handle data catalogs, quality monitoring, access control, policy management, and workflow in single tool. These platforms promise unified governance but often deliver mediocrity across capabilities.

Effective governance implements fit-for-purpose tools where best-of-breed data catalog provides discovery and metadata management, best-of-breed quality platform provides quality monitoring, and integration between tools creates unified governance experience.

Organizations should prioritize tool integration capability when selecting governance tools. Open APIs and standard protocols enable tools to work together even when from different vendors.

That said, consolidation has value when truly best-of-breed tools exist in single platform. Modern platforms like Collibra, Alation, and Microsoft Purview provide strong catalog and workflow capabilities. Evaluate comprehensively rather than assuming integration is always better than platform.

Start with High-Impact Tools, Not Comprehensive Suites

Organizations with limited governance budget often delay tool investment until they can afford comprehensive tooling. This delays governance value while waiting for perfect infrastructure.

Effective governance starts with high-impact tools that demonstrate value quickly — typically data quality monitoring for critical data, basic data catalog for discovery, and access request workflow for efficiency.

These tools can be lightweight initially. Data quality monitoring can start with Great Expectations or custom SQL checks before investing in enterprise platforms. Data catalogs can start with open-source Amundsen or DataHub before commercial platforms.

Early tool investment demonstrates governance value and builds business case for expanded investment. Waiting for comprehensive tooling delays value and makes governance seem theoretical rather than practical.

Automate Governance Controls, Don’t Rely on Manual Process

Manual governance processes don’t scale and are prone to inconsistency. Manual data quality checks happen sporadically. Manual metadata enrichment gets skipped when people are busy. Manual access reviews occur late or incompletely.

Effective governance automates wherever possible: data quality validation in data pipelines, metadata harvesting from source systems, access review reminders and tracking, policy attestation workflows, and compliance reporting.

Automation ensures governance consistency even as data volumes grow and teams change. Automated controls execute reliably while manual processes depend on individual diligence.

Start with simple automation (scheduled quality checks, automated email reminders) and expand to sophisticated automation (ML-based metadata inference, automated access anomaly detection) as governance matures.

Integrate Governance into DevOps and DataOps

Organizations often implement governance separate from data engineering workflows. Data engineers build pipelines and deploy data without governance touchpoints. Governance becomes an after-the-fact audit rather than integrated control.

Effective governance integrates into DevOps and DataOps through governance gates in CI/CD pipelines (quality validation, metadata completeness), automated compliance checks (classification verification, access control review), and deployment approval workflows (governance sign-off required for production).

Integration requires collaboration between governance and engineering teams. Engineers need to understand governance requirements. Governance teams need to understand engineering workflows and constraints.

The goal is “shift-left” governance where governance controls happen early in development rather than after production deployment. Early governance prevents issues rather than detecting them after harm occurs.

Measurement and Continuous Improvement Best Practices

Measurement enables governance improvement and demonstrates value.

Measure Outcomes, Not Just Activities

Organizations often measure governance activity: policies written, training sessions delivered, steward meetings held, and catalog entries created. These activity metrics create illusion of progress without proving effectiveness.

Effective governance measures outcomes: data quality improvement trends, operational incident reduction, decision maker confidence in data, access request cycle time reduction, and governance ROI through cost savings and prevented issues.

Outcome metrics connect governance to business value. Executives understand efficiency gains and risk reduction. Outcome metrics justify continued governance investment.

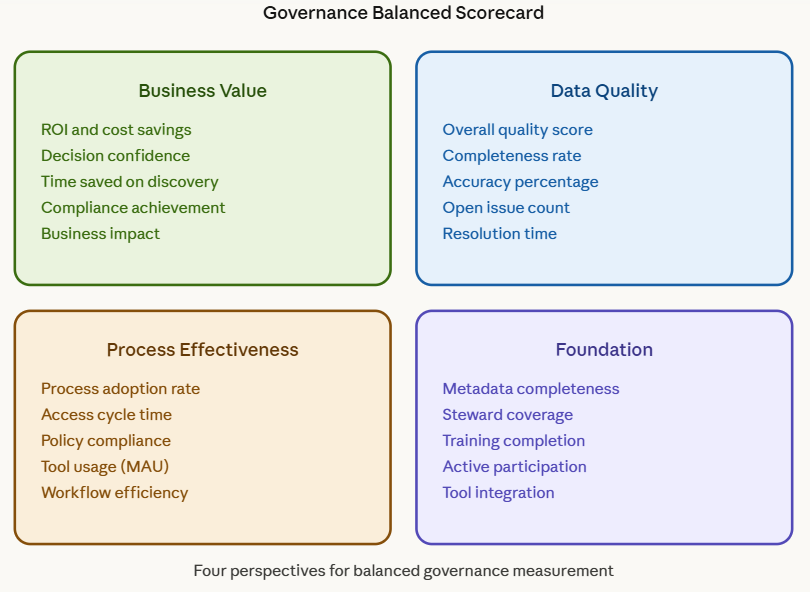

Organizations should track both activity metrics (necessary for operational management) and outcome metrics (necessary for demonstrating value). Balanced scorecards combine both perspectives.

Create Feedback Loops for Governance Improvement

Governance programs without feedback mechanisms perpetuate ineffective practices. If users find governance processes burdensome but have no channel to provide feedback, those processes remain burdensome indefinitely.

Effective governance creates multiple feedback mechanisms: regular user surveys on governance tool usability and process effectiveness, governance council review of metrics and issues, retrospectives after major governance initiatives, and open channels for suggestions and complaints.

Feedback should drive action, not just acknowledgment. When users consistently complain that access request processes are too slow, governance should investigate and improve those processes. Feedback without response breeds cynicism.

Organizations should communicate improvements made based on feedback. This reinforces that feedback is valued and encourages continued engagement.

Benchmark Against Industry Standards, Not Just Internal Baselines

Organizations tracking governance metrics over time see internal improvement but may not know if they’re performing well compared to peers. Internal improvement from 70% to 80% data quality sounds good but is it good enough if industry average is 90%?

Effective governance includes external benchmarking through industry surveys and reports (Gartner, DAMA, EDM Council), peer network participation (CDO councils, industry groups), and consultant-facilitated benchmarking for detailed comparisons.

Benchmarking provides context for metric interpretation and identifies practice gaps. If peer organizations achieve 95% metadata completeness while yours is at 75%, that gap signals opportunity for improvement.

Benchmarking should inform strategy without creating pressure to match every peer metric. Organizations have different maturity levels, resources, and priorities. Benchmark data provides perspective, not mandates.

Evolve Governance Maturity Deliberately

Organizations should assess governance maturity using standard frameworks (DAMA DMM, EDM Council DCAM, proprietary models) to identify current state and gaps, create maturity roadmap showing evolution over 12-24 months, and prioritize capability development based on business needs and quick wins.

Maturity evolution should be deliberate, not haphazard. Moving from Level 2 (documented policies) to Level 3 (consistent processes) requires specific capabilities: formalized workflows, tool implementation, training programs, and measurement systems.

Organizations should resist pressure to accelerate maturity faster than the organization can absorb change. Governance maturity takes years to build. Attempting shortcuts creates appearance of maturity without substance.

Change Management and Adoption Best Practices

Governance succeeds through adoption. Change management determines whether governance is embraced or circumvented.

Start with Business Value, Not Compliance Obligation

Governance initiatives framed as compliance obligations generate minimal enthusiasm. “We’re implementing governance because the auditors require it” doesn’t inspire engagement.

Effective governance leads with business value: better data quality enables better decisions, findable data accelerates analytics, controlled access protects competitive information, and documented data enables AI and advanced analytics.

Compliance becomes byproduct of governance done well rather than primary driver. Users adopt governance because it helps them work better, not just because policy requires it.

Demonstrate Quick Wins Before Expanding Scope

Governance programs that tackle comprehensive scope immediately overwhelm organizations. Users experience disruption without seeing benefits.

Effective governance demonstrates quick wins through high-impact use cases where governance benefit is clear and immediate. Implementing customer data governance that measurably improves marketing effectiveness demonstrates value. This success builds credibility for expanding to product data, financial data, and beyond.

Quick wins should be genuine improvements, not superficial successes. Cataloging 100 datasets nobody uses is not a win even though it hits a metric. Improving quality of data that directly impacts revenue or operations is a win.

Invest in Training and Enablement, Not Just Policy Communication

Organizations often communicate governance policies through email or portal posts expecting users to understand and comply. This passive approach fails. Users don’t read policy emails. Posted policies gather digital dust.

Effective governance provides active training including role-based training (stewards, data analysts, general users get different training appropriate to their needs), hands-on tool training (not just presentations but guided exercises), ongoing refresher training (governance knowledge degrades without reinforcement), and documentation and quick reference guides for just-in-time learning.

Training investment demonstrates governance seriousness and helps users succeed rather than just telling them to comply.

Build Steward Community and Support Network

Stewards often feel isolated — the only person in their business unit focused on governance without peers to consult or learn from.

Effective governance builds steward community through regular steward forums (monthly meetings for knowledge sharing, issue discussion, and peer support), dedicated communication channels (Slack channels, Teams groups for async collaboration), steward recognition programs (acknowledging effective stewardship publicly), and mentorship pairing experienced stewards with new stewards.

Community creates belonging and collective learning. Stewards share challenges and solutions. Governance becomes “how we do things” rather than individual burden.

Address Resistance Directly, Don’t Ignore It

Governance initiatives inevitably face resistance from individuals or groups who prefer status quo. Ignoring resistance allows it to spread and undermine governance adoption.

Effective governance addresses resistance directly by understanding root causes (is resistance based on legitimate concerns about workload, misunderstanding about governance requirements, or organizational politics?), engaging resistors in dialogue rather than mandating compliance, and adapting governance where legitimate concerns exist.

Not all resistance should be accommodated. Some resistance is simply preference for convenience over doing things right. But understanding resistance and addressing legitimate concerns where possible improves governance design and demonstrates responsiveness.

Industry-Specific Best Practices

Certain practices are industry-specific based on regulatory environment and use case patterns.

Financial Services: Model Risk Management Integration

Financial services governance must integrate with model risk management. Models used for credit decisions, trading, or risk assessment require governance of training data quality, model validation, documentation, and ongoing monitoring.

Best practices include treating model training data as critical data requiring highest quality standards, implementing model registry documenting all production models with lineage to training data, independent validation of models before production deployment, and ongoing monitoring of model performance and data drift.

Regulatory oversight in financial services means governance gaps become audit findings with direct consequences. Governance rigor in banking often exceeds other industries due to regulatory pressure.

Healthcare: Patient Safety and Privacy Balance

Healthcare governance balances patient safety (requiring data access for care coordination) with privacy protection (HIPAA restrictions on data access and use).

Best practices include purpose-based access control (access granted based on treatment relationship, not just role), minimum necessary principle (limit access to data required for specific purpose), audit trailing of all access to protected health information (PHI), and break-glass procedures for emergency access with subsequent review.

Healthcare organizations must maintain particularly rigorous access governance due to privacy regulations and patient trust considerations.

Retail and E-commerce: Customer Experience and Personalization

Retail governance enables customer personalization while respecting privacy preferences and competitive protection.

Best practices include customer preference management (honoring opt-outs and data use restrictions), competitive intelligence protection (preventing unauthorized disclosure of customer behavior patterns), and real-time data quality for personalization engines.

Retail operates at massive scale with high data velocity. Governance must accommodate operational speed rather than creating friction that degrades customer experience.

Government: Transparency and Public Trust

Government governance emphasizes transparency, public accountability, and equitable service delivery.

Best practices include data openness and public accessibility where appropriate, documentation of algorithmic decision-making for accountability, equity monitoring in data-driven services, and inter-agency data sharing frameworks.

Government faces unique constraints around legacy systems, procurement processes, and public scrutiny affecting governance implementation timelines and approaches.

Frequently Asked Questions

What are data governance best practices? Data governance best practices are proven approaches that consistently produce successful governance outcomes across organizations. Key practices include establishing executive sponsorship with real authority, implementing federated stewardship rather than centralized control, embedding governance in existing workflows rather than creating parallel processes, defining quality requirements by use case rather than universally, building business glossaries before technical catalogs, automating governance controls rather than relying on manual processes, measuring outcomes rather than just activities, and evolving governance maturity deliberately over time.

How do I start a data governance program? Start small with high-impact use cases demonstrating clear business value rather than comprehensive enterprise-wide scope. Secure executive sponsorship with authority to enforce governance decisions. Establish governance council with decision-making power. Identify and empower data stewards for critical domains with explicit time allocation (20-30%). Implement basic tools for immediate needs (quality monitoring, catalog, access workflow). Define minimum viable policies focusing on data classification and access control. Demonstrate quick wins to build credibility before expanding scope.

What makes data governance succeed or fail? Governance succeeds when focused on business value rather than just compliance, distributed accountability to domain experts rather than centralized control, integrated into existing workflows rather than parallel processes, supported by executive authority that enforces decisions, measured by outcomes (quality improvement, efficiency gains) rather than activities (policies written), and evolved deliberately through proven maturity stages. Governance fails when treated as compliance checkbox, centralized without domain expertise, implemented as separate bureaucracy, lacking executive authority, measured only by activities, or attempting big-bang comprehensive implementation.

How much does data governance cost? Governance costs typically represent 1-3% of IT budget for mature programs. Initial implementation costs include governance platform tools ($50K-$500K annually depending on scale), dedicated governance staff (governance director, analysts, steward support), and steward time allocation (20-30% of multiple roles). Ongoing costs include tool maintenance and licensing, governance staff compensation, and continuous steward effort. ROI typically exceeds costs within 12-18 months through prevented regulatory fines, reduced operational errors, and improved efficiency. Organizations should budget for multi-year investment rather than one-time project.

Do I need a Chief Data Officer for data governance? Large organizations (5,000+ employees) typically benefit from dedicated CDO role providing executive authority, cross-functional coordination, and strategic data leadership. Mid-size organizations (500-5,000) may assign governance to existing executive (COO, CIO) with dedicated governance director reporting to them. Small organizations (<500) may have governance led by senior director without C-level role. Executive sponsorship matters more than specific title. Governance requires executive authority to enforce decisions regardless of whether that executive is called CDO, COO, or CIO.

How long does data governance take to implement? Realistic timelines: 3-6 months for initial framework and quick wins (policies documented, stewards appointed, basic tools deployed, first use cases governed), 12-18 months for operational governance (processes consistently followed, quality improving, catalog adopted, metrics tracked), and 24-36 months for mature governance (optimized processes, comprehensive measurement, integrated tools, culture embedded). Organizations attempting faster timelines typically achieve superficial compliance without genuine adoption. Governance maturity requires years, not months. Executives expecting governance transformation in 6 months will be disappointed.

What tools are essential for data governance? Essential tools include data catalog for discovery and metadata (Collibra, Alation, Purview), data quality platform for monitoring and validation (Informatica, Talend, Great Expectations), workflow tool for access requests and governance processes (Jira, ServiceNow, governance platform built-in), and business intelligence for governance metrics and dashboards (Tableau, Power BI). Organizations can start with open-source or lightweight commercial tools before investing in enterprise platforms. Tool selection should prioritize integration capability and fit for organization size and maturity.

How do you measure data governance ROI? Calculate ROI by quantifying financial benefits (prevented regulatory fines, reduced operational errors, eliminated manual data correction, faster analytics delivery) minus governance costs (tools, staff, steward time). Example: $8M annual benefits – $3M costs = $5M net benefit = 167% ROI. Track both hard ROI (directly quantified savings) and soft ROI (improved decision quality, enhanced reputation, increased agility). Build business cases showing breakeven timeline (typically 12-18 months) and cumulative benefits. Present ROI alongside qualitative benefits executives value like risk reduction and competitive advantage.

What’s the difference between data governance and data management? Data governance establishes policies, standards, decision rights, and accountability for data — the “what” and “who” of data management. Data management executes technical and operational processes for handling data — the “how” of implementing governance decisions. Governance sets quality standards and assigns ownership; data management implements quality monitoring and remediation. Governance defines access policies; data management implements access controls. Governance provides framework and oversight; data management provides execution and tools. Both are necessary; governance without management is policy without action, management without governance is activity without direction.

How do you get executives to support data governance? Demonstrate business value through quick wins rather than leading with compliance requirements. Quantify costs of current data problems (operational errors, duplicated efforts, missed opportunities). Build business cases showing ROI from prevented issues and efficiency gains. Connect governance to strategic priorities executives care about (AI enablement, digital transformation, risk reduction). Provide metrics proving governance effectiveness in business terms (revenue protected, costs reduced, decisions improved). Avoid technical jargon and governance theory. Frame governance as business enabler, not IT initiative or compliance burden. Show concrete examples from peer organizations achieving business value through governance.

Summary

Data governance best practices separate programs that deliver business value from those that create bureaucratic overhead. The most critical practices focus on foundations: governance serves business outcomes not compliance checklists, governance is distributed to domain experts not centralized, and governance evolves through iteration not big-bang implementation.

Organizationally, effective governance requires executive sponsorship with real authority to enforce decisions, governance councils that make binding decisions rather than advisory recommendations, federated stewardship where domain experts govern their data with central coordination, and governance embedded in existing workflows rather than parallel processes creating friction.

Policy and standards should operate at appropriate detail levels — principles in enterprise policies, specifics in standards, step-by-step guidance in procedures. Policies must be discoverable and understandable, with exception processes acknowledging that rigid policies don’t fit every scenario. Data classification frameworks provide foundational structure determining access controls and protection requirements.

Data quality practices define requirements by use case rather than universal standards, implement automated continuous monitoring rather than manual sampling, assign clear ownership and accountability for quality outcomes, and address root causes rather than just correcting symptoms. Quality improvements demonstrate tangible governance value.

Metadata and catalog success requires building business glossaries before technical catalogs, making metadata enrichment mandatory rather than optional, incentivizing usage through workflow integration, and maintaining metadata through automation supplemented by manual business context. Catalogs fail when they’re separate tools users must remember to visit rather than embedded capabilities in daily workflows.

Access governance balances accessibility with security through role-based access rather than individual grants, clear request and approval workflows with aggressive cycle times, periodic access reviews preventing access creep, and logging and monitoring enabling misuse detection. Effective access governance enables appropriate access while preventing inappropriate disclosure.

Technology enables governance but doesn’t create it. Best practices include fit-for-purpose tooling rather than compromising on all-in-one platforms, starting with high-impact tools demonstrating value quickly rather than waiting for comprehensive suites, automating governance controls for consistency and scale, and integrating governance into DevOps and DataOps for shift-left control.

Measurement focuses on outcomes proving effectiveness rather than activities creating illusion of progress. Organizations create feedback loops for continuous improvement, benchmark against industry standards for context, and evolve governance maturity deliberately through proven stages over years.

Change management and adoption determine whether governance succeeds. Leading with business value rather than compliance, demonstrating quick wins before expanding scope, investing in training rather than just policy communication, building steward communities rather than leaving stewards isolated, and addressing resistance directly rather than ignoring it all drive adoption.

Industry-specific practices acknowledge different contexts. Financial services integrates governance with model risk management under regulatory oversight. Healthcare balances patient safety needs with privacy protection. Retail enables customer personalization at scale. Government emphasizes transparency and public accountability.

The organizations succeeding with governance share common patterns: they focus relentlessly on business value, they distribute accountability while centralizing standards, they integrate governance into operations rather than creating parallel bureaucracy, they measure rigorously and improve continuously, and they invest in people and culture not just tools and policies.

Governance is not a project with an end date. It’s operational discipline requiring sustained investment and continuous evolution. The practices outlined here provide proven approaches, but organizations must adapt practices to their specific context, culture, and maturity. What works at Wells Fargo may not work identically at a mid-size healthcare provider or government agency. The principles remain constant; implementation details vary.

Ready to strengthen your governance program?

- Chief Data Officer’s Guide — building governance programs

- Data Governance Metrics and KPIs — measuring success

- What Is Data Governance? — foundational concepts

- What Is a Data Steward? — stewardship practices

Published: March 2026 | Author: Clinton (The Data Governor) | Category: Data Governance

Clinton is a Senior Data Governance Engineer with experience implementing governance programs at Wells Fargo, Department of Veterans Affairs, and Nestle Purina. He has built governance from the ground up in finance, government, and manufacturing contexts, learning what works through both successes and failures.

Further Reading

- A No-Nonsense Roadmap to Become A Data Analyst in 2023

- Using Data for Risk Management

- Ace Your Data Analyst Interview: Top 11 Questions and How to Prepare

- Using Amazon Web Services (AWS) for Data Governance

- What is Cloud-based Data Management? Hint: It’s Secret Sauce

- understanding data science

- national information exchange model

- data management body of knowledge

- data governance certification

- governance framework guide