A data governance framework is the structured combination of policies, standards, roles, processes, and technologies that an organization uses to manage its data assets with accountability, consistency, and compliance. Rather than a single document or tool, a data governance framework is the operating system for how data is defined, owned, accessed, and trusted across the enterprise.

- Why a Data Governance Framework Matters

- Core Components of a Data Governance Framework

- Data Governance Roles and Responsibilities

- How to Build a Data Governance Framework

- Data Governance Framework Examples by Industry

- Common Mistakes When Implementing a Framework

- Tools That Support a Data Governance Framework

- How to Measure Data Governance Maturity

- Frequently Asked Questions

- Summary

Why a Data Governance Framework Matters

Organizations that lack a formal data governance framework consistently struggle with the same set of problems: multiple versions of the same metric, regulatory findings from auditors, data pipelines that break when a source system changes, and business leaders who distrust the reports they receive.

A well-implemented framework solves these problems by establishing who owns the data, what it means, how it should be used, and who is accountable when it is wrong.

From a regulatory standpoint, frameworks are no longer optional. Financial institutions operating under Basel III, BCBS 239, or SOX; healthcare organizations bound by HIPAA; and companies subject to GDPR or CCPA all face explicit requirements around data lineage, retention, and access control. A governance framework is the mechanism through which those requirements are operationalized.

Beyond compliance, mature data governance drives measurable business value. Organizations with defined data ownership and stewardship report faster time-to-insight, lower costs associated with data remediation, and higher confidence in AI and analytics initiatives — because the underlying data is known to be accurate, complete, and consistently defined.

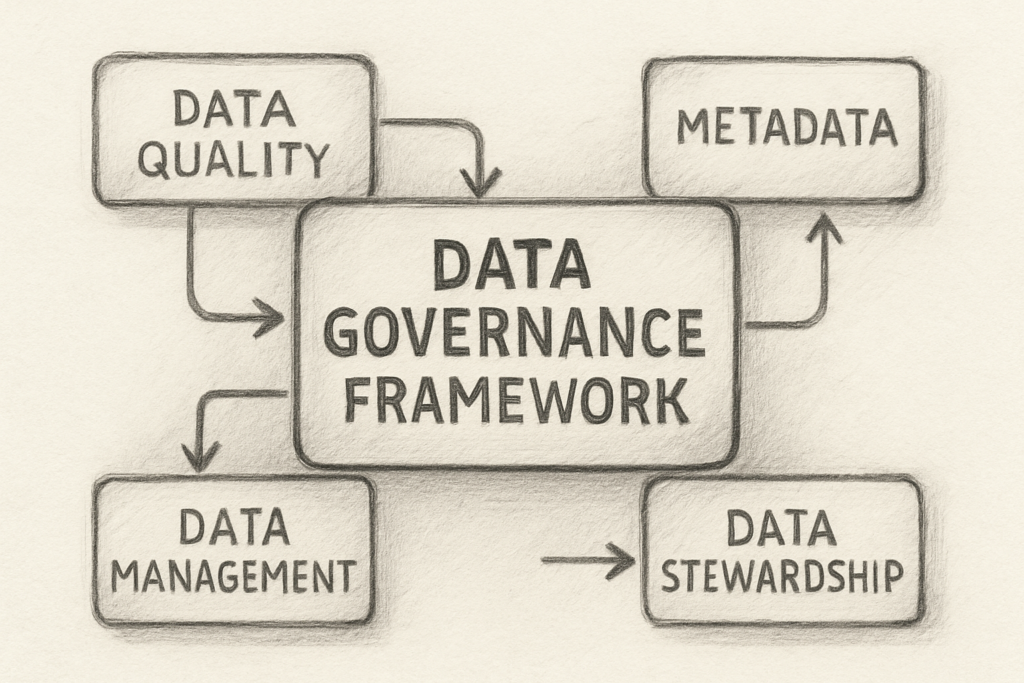

Core Components of a Data Governance Framework

Every effective data governance framework includes the following foundational components, regardless of industry or organizational size.

Data Governance Operating Model

The operating model defines how governance is organized: whether it is centralized (a single governance team sets all standards), federated (domain teams govern their own data with enterprise-level guardrails), or hybrid (a center of excellence sets policy while business units execute). Most large enterprises use a hybrid model because it balances consistency with domain-level agility.

The operating model also establishes the governance council or steering committee — the cross-functional body that resolves escalations, approves policy changes, and aligns governance priorities with business strategy.

Data Policies and Standards

Policies are the high-level statements of intent — for example, “All customer data must be classified before being shared with third parties.” Standards are the specific, enforceable rules that implement those policies — for example, the specific data classification taxonomy, the acceptable retention periods per classification level, and the process for requesting an exception.

Standards must be version-controlled, published in an accessible location (such as a data catalog or intranet), and reviewed on a defined cycle. Undocumented or stale standards are a leading cause of governance program failure.

Business Glossary and Data Definitions

One of the most impactful deliverables of any governance framework is a managed business glossary — a curated, approved set of definitions for critical data elements. Terms like “active customer,” “net revenue,” “employee headcount,” or “reportable incident” mean different things in different systems, and the absence of agreed-upon definitions is the root cause of most data trust issues.

The business glossary links business terms to their technical representations in source systems, making it the bridge between the data dictionary maintained by IT and the semantic layer used by business analysts.

Data Ownership and Stewardship Model

Every data domain — customer, product, finance, HR, supply chain — should have an assigned Data Owner, typically a senior business leader accountable for the quality, access, and proper use of data in that domain. Beneath the owner sits one or more Data Stewards, subject matter experts responsible for day-to-day stewardship activities: defining terms, resolving quality issues, approving access requests, and participating in data issue triage.

This two-tier model is the most commonly implemented structure and maps cleanly to governance platforms like Collibra, where ownership and stewardship assignments drive workflow routing.

Data Quality Framework

Data quality is the operational proof that governance is working. A governance framework must define measurable quality dimensions — typically accuracy, completeness, consistency, timeliness, validity, and uniqueness — along with the profiling and monitoring processes that measure them.

Quality rules should be tied to critical data elements (CDEs), which are the subset of data fields that carry the highest business or regulatory significance. Governing everything equally is not feasible; governing CDEs rigorously is.

Data Lineage and Metadata Management

Lineage captures how data flows from source systems through transformation layers to consumption points. It answers the question regulators and business users ask most often: “Where did this number come from?”

Metadata management goes beyond lineage to capture the full context of a data asset — its definition, owner, classification, quality score, usage, and relationships to other assets. A data catalog serves as the system of record for this metadata, making data discoverable and trustworthy at scale.

Data Access and Security Controls

The governance framework must define how data is classified (for example, Public, Internal, Confidential, Restricted) and what access controls apply to each classification level. Role-based access control (RBAC) and attribute-based access control (ABAC) models should be formally documented and enforced through integration with identity management systems.

Access governance also includes the processes for requesting access, approving access, and reviewing and revoking access on a scheduled basis — all of which should be auditable.

Data Governance Roles and Responsibilities

A governance framework is only as strong as the people executing it. The following role taxonomy is common in enterprise governance programs.

Chief Data Officer (CDO) — Organizational sponsor of the data governance program. Accountable for the strategy, funding, and executive alignment of the framework. The CDO sets the tone for data as a strategic asset.

Data Governance Director / Program Manager — Responsible for designing and operationalizing the governance framework. Manages the governance office, maintains the policy library, facilitates the governance council, and tracks program KPIs.

Data Owner — A business executive (VP, Director, or equivalent) assigned accountability for a specific data domain. Approves definitions, resolves domain-level disputes, and signs off on quality standards for their domain.

Data Steward — A subject matter expert who performs the hands-on governance work: defining terms in the glossary, documenting data elements, resolving quality issues, reviewing access requests, and maintaining lineage documentation. This is the most operationally active role in the framework.

Data Custodian — A technical role (typically in IT or Data Engineering) responsible for implementing and maintaining the controls defined by the governance framework — storage, backup, access provisioning, pipeline integrity.

Data Consumer — Any business user or system that uses governed data. Consumers are responsible for using data in accordance with policies, reporting quality issues, and participating in stewardship activities when relevant.

How to Build a Data Governance Framework

Building a governance framework is a program, not a project. The following phased approach reflects how mature programs are successfully implemented in practice.

Phase 1: Foundation (Months 1–3)

Secure executive sponsorship and establish the business case. Conduct a current-state assessment to identify the most critical data domains, the most significant data quality or compliance pain points, and the gaps in existing governance capabilities. Define the target operating model, stand up the governance council, and assign initial data ownership for two to three priority domains.

Do not try to govern everything at once. The programs that fail fastest are those that attempt enterprise-wide coverage before demonstrating value in a focused scope.

Phase 2: Policy and Standards Development (Months 3–6)

Develop the core policy set: data classification policy, data quality policy, data access policy, metadata management policy, and data retention policy. For each policy, document the supporting standards and procedures. Publish these in a centralized location and formally approve them through the governance council.

Stand up the business glossary with an initial set of critical data elements in the priority domains. Engage data stewards actively in the definition process — stewards who own the definitions will defend and maintain them over time.

Phase 3: Tooling and Operationalization (Months 6–12)

Select and implement governance tooling to scale what was built manually in Phase 2. A data catalog (Collibra, Alation, Microsoft Purview, or similar) becomes the operational platform for glossary management, data asset discovery, lineage, and workflow automation.

Integrate the catalog with source systems, data warehouses, and BI tools so that metadata is captured and updated systematically rather than through manual entry. Configure stewardship workflows so that data quality issues, access requests, and certification tasks are routed to the appropriate owners automatically.

Phase 4: Measurement and Maturity Advancement (Ongoing)

Define and report governance KPIs to the council and executive sponsors on a regular cadence. Track glossary coverage, data quality scores by domain, open stewardship issues, policy compliance rates, and catalog adoption metrics.

Use a formal maturity model (such as the DCAM, DAMA-DMBOK, or a custom model) to benchmark current state and define the roadmap for advancing to the next maturity level. Governance is a continuous improvement discipline — the program should always have a documented next horizon.

Data Governance Framework Examples by Industry

Financial Services

Banks and capital markets firms operate under some of the most demanding data governance requirements in the world. BCBS 239 established eleven principles for risk data aggregation and reporting that are explicitly operationalized through governance frameworks — including requirements for data ownership at the board level, documented lineage for regulatory reports, and quantified accuracy thresholds for risk data.

At Wells Fargo and similarly sized institutions, the governance framework maps directly to the three lines of defense model: the business (first line) owns the data; risk and compliance (second line) sets policy and monitors adherence; internal audit (third line) independently validates. The CDO organization sits in the second line and operates the governance program.

Healthcare and Life Sciences

HIPAA requires covered entities to implement administrative, physical, and technical safeguards for protected health information (PHI). The governance framework translates these requirements into operational controls: data classification policies that identify PHI, role-based access controls that limit PHI exposure to authorized users, audit logs that document access, and data use agreements that govern sharing with business associates.

Healthcare governance frameworks also address the complex challenge of master data management for patient identity — ensuring that the same patient is represented consistently across the EMR, billing system, population health platform, and analytics environment.

Federal Government

Federal agencies governed by the Federal Data Strategy and the Evidence Act operate governance frameworks that emphasize transparency, interoperability, and public trust. The VA’s data governance work, for example, must address the challenge of governing veteran data that spans dozens of legacy systems, multiple regional networks, and multiple mission areas — all while meeting federal records management requirements and enabling secure data sharing with DoD and other agencies.

Consumer Goods and Manufacturing

In manufacturing environments like Nestle Purina, governance frameworks often center on product master data and supply chain data — where inconsistent product hierarchies, unit-of-measure mismatches, or duplicate supplier records can cause downstream operational and financial errors. MDM platforms like Profisee are frequently used to establish a single, governed golden record for products, materials, and vendors that feeds ERP, retail, and analytics systems.

Common Mistakes When Implementing a Framework

Treating governance as an IT initiative. Data governance is a business program with technology enablement. When it is owned and driven by IT, it fails to secure business engagement, and data owners never materialize.

Trying to govern everything at once. Programs that start with enterprise-wide scope before demonstrating value lose momentum and executive support. Start with two to three high-impact domains and expand from there.

Building policies without enforcing them. A policy library that no one reads and no one enforces is theater, not governance. Every policy needs an owner, a compliance monitoring process, and a consequence for non-compliance.

Underinvesting in the stewardship model. Data stewards are the operational heartbeat of the program. Organizations that expect stewards to perform governance activities on top of a full existing workload without any protected capacity will see stewardship responsibilities deprioritized immediately.

Selecting a tool before defining the operating model. A data catalog cannot compensate for the absence of a defined governance structure. The catalog should implement and scale the operating model — it should not substitute for one.

Measuring activity instead of outcomes. Governance metrics like “number of terms defined” or “number of assets cataloged” measure activity. Meaningful metrics measure outcomes — data quality scores, reduction in data-related defects, audit finding closure rates, and time-to-trusted-data for analytics teams.

Tools That Support a Data Governance Framework

The governance platform landscape has matured significantly, with several enterprise-grade options available depending on organizational scale, cloud strategy, and use case priorities.

Collibra is the market leader for enterprise data governance, offering a comprehensive platform for business glossary, data catalog, lineage, stewardship workflows, and policy management. It is the platform of choice for many large financial institutions and regulated industries. Its workflow engine and role-based assignment model align closely with the data owner and steward operating model described above.

Microsoft Purview (formerly Azure Purview) is the logical choice for organizations deeply invested in the Azure ecosystem. It provides automated data discovery and classification across Azure data services, a unified catalog, and built-in compliance tools. Its integration with Microsoft 365 and Azure Active Directory makes it particularly strong for access governance and sensitivity labeling.

Alation is known for its strong data catalog and search capabilities, with a user experience that emphasizes data consumer adoption. It is particularly strong in analytics-heavy environments where data scientists and business analysts are primary consumers.

Profisee is a purpose-built MDM platform that complements data governance programs by providing the master data management layer — establishing golden records for critical entity types (customer, product, supplier, location) that the governance framework defines and governs.

Atlan and data.world are emerging players that compete on collaboration features and integration breadth, particularly for data teams working in modern cloud data stacks.

How to Measure Data Governance Maturity

Maturity models provide a structured way to assess where a governance program currently stands and define a credible roadmap for advancement. The most widely referenced frameworks include:

DAMA-DMBOK — The Data Management Body of Knowledge published by DAMA International defines eleven knowledge areas of data management and provides maturity assessment criteria for each. It is the foundational reference for data governance practitioners.

DCAM (Data Management Capability Assessment Model) — Published by the EDM Council, DCAM is widely used in financial services and defines a structured set of data management capabilities, components, and sub-components with associated maturity criteria.

CMMI Data Management Maturity (DMM) — A process capability framework for data management with five maturity levels (Performed, Managed, Defined, Quantitatively Managed, Optimizing). Useful for organizations that want a rigorous, independently assessable benchmark.

Regardless of which model is used, maturity progression follows a consistent arc:

- Level 1 — Initial: Governance is ad hoc, reactive, and undocumented.

- Level 2 — Managed: Basic policies exist; governance is applied inconsistently.

- Level 3 — Defined: A formal framework is in place, roles are assigned, and processes are repeatable.

- Level 4 — Quantitatively Managed: Governance KPIs are tracked and reported; quality is measured against defined thresholds.

- Level 5 — Optimizing: Governance is embedded in the data culture; continuous improvement is systematic.

Most organizations initiating a formal program are at Level 1 or Level 2. A realistic goal for the first 18 to 24 months of a well-resourced program is to reach a solid Level 3.

Frequently Asked Questions

What is a data governance framework?

A data governance framework is the structured combination of policies, standards, roles, processes, and technologies that an organization uses to manage its data assets with accountability, consistency, and compliance. It functions as the operating system for how data is defined, owned, accessed, and trusted across the enterprise.

Why does a company need a data governance framework?

A data governance framework is essential because it addresses common issues such as inconsistent metrics, regulatory compliance problems, broken data pipelines, and distrust in reports. It clarifies data ownership, meaning, usage, and accountability, and helps organizations meet regulatory requirements while driving business value through improved data accuracy and confidence.

What are the core components of a data governance framework?

The core components include the data governance operating model, policies and standards, business glossary and data definitions, data ownership and stewardship, data quality framework, data lineage and metadata management, data access and security controls, and roles and responsibilities for implementing and maintaining governance.

How long does it typically take to implement a data governance framework?

A basic governance framework can usually be established in six to twelve months if supported by the organization, but a comprehensive, enterprise-wide program generally requires two to four years of sustained effort and investment to reach a high maturity level.

What role does a data catalog play in a governance framework?

A data catalog serves as the operational platform for the governance framework by providing a centralized repository for metadata, definitions, ownership, and lineage. It enables data stewards to perform their duties and helps data consumers discover and understand data assets, thereby operationalizing governance policies and practices.

Summary

A data governance framework is not a document, a tool, or a one-time project. It is the sustained organizational capability to manage data as a strategic asset — with clear ownership, consistent definitions, measurable quality, appropriate access controls, and a culture of accountability.

The organizations that build this capability systematically — starting with a defined operating model, engaging business data owners, and scaling through technology — are the ones that can trust their data to drive confident decisions, meet regulatory obligations, and fuel the AI-driven business intelligence their competitive environments demand.

This article was written by Clinton, a Senior Data Governance Engineer with hands-on implementation experience at Wells Fargo, the Department of Veterans Affairs, and Nestle Purina. He has led governance programs using Collibra, Profisee, and Microsoft Purview across financial services, federal government, and manufacturing industries.