Data Governance KPIs

Data governance metrics are quantifiable measurements that track the performance, adoption, effectiveness, and business impact of an organization’s data governance program. These metrics answer the fundamental question every CDO and data governance leader faces: “Is our governance program actually working, or are we just creating policies that sit on shelves?”

In 2026, data governance has evolved from a compliance checkbox to a business enabler. Organizations can no longer justify governance programs based on regulatory necessity alone — they must demonstrate measurable business value. Executives want to see ROI. Business units want proof that governance improves their operations rather than adding bureaucracy. Data teams want metrics showing that governance investments actually improve data quality and reduce firefighting.

Measuring data governance success is challenging because governance benefits are often indirect and distributed across the organization. Better data quality enables better analytics, which supports better decisions, which drives better business outcomes. Connecting governance activities to these ultimate outcomes requires thoughtful metric design and persistent measurement.

This comprehensive guide is written for CDOs and data governance leaders building measurement frameworks, data governance managers tracking program performance, executives evaluating governance program effectiveness, and governance practitioners seeking to demonstrate value. You’ll learn what metrics actually matter versus vanity metrics, how to build a balanced governance scorecard, what leading and lagging indicators to track, how to measure governance maturity, and how to connect governance metrics to business outcomes.

This isn’t theoretical framework documentation. This is practical guidance from organizations that have built measurement programs that actually influence decisions and demonstrate value.

- Why Data Governance Metrics Matter

- Categories of Governance Metrics

- Building a Balanced Governance Scorecard

- Measuring Governance Maturity

- Common Metric Pitfalls and How to Avoid Them

- Measuring Data Governance ROI

- Tools and Platforms for Governance Metrics

- Reporting Governance Metrics to Stakeholders

- Evolving Metrics Over Time

- Industry-Specific Governance Metrics

- Frequently Asked Questions

- Summary

Why Data Governance Metrics Matter

Organizations that don’t measure governance effectiveness operate in the dark. Without metrics, governance becomes faith-based — hoping governance activities produce value without evidence confirming it.

The Measurement Gap

Most data governance programs track activity metrics but not effectiveness metrics. They measure policies written, training sessions conducted, steward meetings held, and governance council attendance. These activity metrics create an illusion of progress without confirming actual improvement in data quality, usability, or business outcomes.

Activity metrics answer “are we doing governance?” Effectiveness metrics answer “is governance working?” Organizations need both, but effectiveness metrics determine whether governance investment is justified.

Demonstrating Business Value

Data governance competes for resources with other business priorities. In budget discussions, governance leaders must justify continued investment. “We wrote 12 new policies this quarter” doesn’t convince CFOs. “We reduced data quality issues by 35%, cutting operational costs by $2.3M” does.

Metrics translate governance activities into business language. Executives understand revenue impact, cost reduction, risk mitigation, and efficiency gains. Governance metrics must connect governance work to these business outcomes.

Driving Continuous Improvement

Measurement enables improvement. Organizations tracking data quality trends can identify systemic issues, prioritize remediation, and verify that fixes work. Organizations without measurement repeat the same problems indefinitely without visibility.

Effective metrics create feedback loops: measure current state, implement improvement, measure new state, assess impact, and adjust approach. Without this loop, governance programs drift without direction or learning.

Aligning Stakeholders

Different stakeholders care about different governance aspects. Business users care about data accessibility and usability. IT cares about data security and compliance. Executives care about business outcomes and ROI. Comprehensive metrics address all stakeholder perspectives, demonstrating value to each constituency.

Metrics also create shared accountability. When data quality metrics decline, everyone sees it. When stewardship programs improve metadata completeness, success is visible. Transparency through metrics aligns organizations around governance objectives.

Categories of Governance Metrics

Effective governance measurement programs track metrics across multiple categories, creating a balanced view of program health and impact.

Adoption Metrics

Adoption metrics measure whether the organization is actually using governance processes, tools, and capabilities.

Governance Awareness and Training

The percentage of employees who have completed data governance training indicates awareness levels. High-performing organizations achieve 80%+ training completion for employees working directly with data, and 60%+ for all employees. Low training completion signals that governance remains unfamiliar to the organization.

Training completion should be segmented by role: data stewards should approach 100%, data analysts and engineers 90%+, business users 70%+, and executives 60%+. Different roles require different governance knowledge depth.

Governance Process Utilization

How many data access requests flow through governance approval processes versus bypassing governance? How many new datasets are registered in the data catalog versus remaining undiscovered? How many projects follow the data classification process?

High bypass rates signal that governance processes are too cumbersome or that the organization doesn’t understand their value. Target: 85%+ of relevant activities following governance processes.

Tool Adoption and Usage

For governance tools like data catalogs, what percentage of data assets are cataloged? How many active users does the data catalog have? What’s the search activity in the catalog?

Low tool adoption indicates poor user experience, inadequate training, or lack of integration with workflows. Active usage metrics should show sustained engagement, not just initial curiosity followed by abandonment.

Stewardship Program Participation

What percentage of critical data domains have assigned stewards? How active are stewards in governance activities — attending meetings, resolving data quality issues, enriching metadata?

Steward participation metrics reveal whether stewardship is nominal (people appointed but inactive) or operational (stewards actively governing data).

Data Quality Metrics

Data quality is the most tangible governance outcome. Quality improvements directly demonstrate governance value.

Completeness

Completeness measures the percentage of required fields that are populated. A customer master with 95% completeness has 5% of customer records missing critical information. Target completeness varies by field criticality: mandatory regulatory fields should approach 100%, while optional enrichment fields might target 70-80%.

Track completeness trends over time. Governance programs should drive improving completeness as data entry processes improve and historical gaps are filled.

Accuracy

Accuracy measures whether data values are correct. This is harder to measure than completeness because determining “correct” often requires manual verification or comparison to authoritative sources.

Approaches include statistical profiling (detecting outliers or unlikely values), rule-based validation (checking that ages are between 0-120), external source comparison (verifying addresses against postal databases), and manual sampling (reviewing random records for accuracy).

Target accuracy depends on use case criticality. Financial reporting data should exceed 99.5% accuracy. Marketing analytics data might target 95%.

Consistency

Consistency measures whether the same data has the same value across systems. A customer’s address should match between CRM, billing, and shipping systems. Inconsistency creates confusion and operational failures.

Measure consistency by percentage of matching records across systems. High consistency (95%+) indicates effective master data management. Low consistency signals fragmented data without governance.

Timeliness

Timeliness measures how current data is. Sales data for decision-making should be recent; year-old data is useless. Target timeliness varies by use case: real-time dashboards need minute-old data, monthly reporting can accept week-old data.

Measure data age by timestamp analysis: what percentage of records were updated within the target freshness window?

Validity

Validity measures whether data conforms to defined standards and business rules. Email addresses should follow email format. Product codes should match the product master. Dates should be logical (birth dates before hire dates).

Track validity by running validation rules against data and measuring pass rates. Target: 98%+ validity for critical data elements.

Uniqueness

Uniqueness measures duplicate rates. Customer masters should not contain the same customer multiple times. Product catalogs should not list the same product under different IDs.

Measure by running duplicate detection algorithms and calculating duplicate percentage. Target: <1% duplication for master data.

Data Quality Issue Volume and Resolution

Track total open data quality issues, new issues identified, issues resolved, and average time to resolution. These metrics show whether quality is improving (resolved > new issues) or degrading (new > resolved).

Target: resolve 100%+ of new issues each period, driving down the backlog. Average resolution time should trend downward as root causes are addressed.

Metadata Management Metrics

Metadata makes data usable. Comprehensive metadata enables discovery, understanding, and appropriate use.

Metadata Completeness

What percentage of data assets have business definitions? Data owners? Data classifications? Lineage documentation? Quality metrics?

Track metadata completeness by asset and by metadata type. Target: critical data assets should achieve 95%+ metadata completeness. Lower-priority assets might target 70%.

Metadata Quality

Are metadata descriptions clear and helpful, or are they auto-generated technical gibberish? This requires qualitative assessment: reviewing samples of business glossary definitions for clarity, completeness, and accuracy.

Consider implementing metadata quality ratings where users rate definition helpfulness. Low ratings signal poor metadata requiring improvement.

Business Glossary Coverage

What percentage of critical business terms have documented definitions in the business glossary? How many terms are in “draft” versus “approved” status?

Target: 100% of critical business terms defined and approved. Monitor glossary growth over time as more terms are documented.

Lineage Documentation

What percentage of critical data elements have documented lineage from source to consumption? End-to-end lineage is ideal but challenging; even documenting immediate upstream/downstream lineage adds value.

Track lineage completeness for critical reports and analytics. Target: 100% of regulatory reports with full lineage, 80%+ of executive dashboards.

Access Governance Metrics

Access governance ensures the right people access the right data while preventing inappropriate access.

Access Request Cycle Time

How long from access request submission to approval/denial and fulfillment? Long cycle times frustrate users and incentivize workarounds.

Target: routine access requests approved within 24-48 hours, fulfilled within 1 week. Complex requests requiring additional review might take 2 weeks.

Access Review Completion

What percentage of required access reviews (periodic recertification of user access) are completed on time? Incomplete access reviews mean inappropriate access persists.

Target: 100% of access reviews completed within defined windows. Late reviews indicate governance process gaps.

Inappropriate Access Incidents

How many times has inappropriate access been detected? This includes unauthorized access attempts, access by terminated employees, and access violations discovered in audits.

Declining incident rates indicate improving access controls. Increasing rates signal control weaknesses requiring attention.

Access Denial Rate

What percentage of access requests are denied? Very low denial rates (<1%) might indicate rubber-stamp approvals rather than genuine evaluation. Denial rates around 5-10% suggest appropriate scrutiny.

Very high denial rates (>25%) might indicate unclear access policies or poor user understanding of data availability.

Compliance Metrics

Compliance metrics demonstrate governance’s risk mitigation value.

Policy Compliance Rate

What percentage of relevant activities comply with governance policies? This requires auditing: reviewing samples of projects, data uses, or access grants against policy requirements.

Target: 95%+ compliance. Lower compliance indicates policies are unclear, impractical, or poorly enforced.

Regulatory Audit Findings

How many findings do regulators identify in data governance areas during audits? Track findings by severity (critical, high, medium, low) and trend over time.

Decreasing findings demonstrate improving governance. Increasing findings signal governance gaps requiring attention.

Data Privacy Incident Rate

How many data privacy incidents occur (unauthorized disclosures, breaches, improper data use)? Track incident volume, severity, and root causes.

Effective governance should drive declining privacy incident rates through better controls and awareness.

Time to Compliance

When new regulations emerge, how quickly can the organization achieve compliance? This measures governance agility.

Organizations with strong governance can implement new compliance requirements in weeks or months. Organizations without governance foundation require years.

Stewardship Effectiveness Metrics

Data stewards are the operational face of governance. Their effectiveness directly determines governance outcomes.

Steward Activity Levels

Track steward activities: data quality issues triaged, metadata entries created/updated, access requests reviewed, and steward meeting participation.

Active stewards show consistent engagement. Inactive stewards indicate nominal stewardship without real execution.

Issue Resolution by Stewards

What percentage of data quality issues are resolved by stewards versus escalated? Effective stewards resolve the majority of routine issues themselves.

Target: stewards resolve 70-80% of issues within their authority, escalating only complex or cross-domain issues.

Stakeholder Satisfaction with Stewards

Survey data consumers on steward responsiveness and helpfulness. Low satisfaction scores indicate steward capability gaps or bandwidth issues.

Target: 80%+ satisfaction ratings with steward support.

Steward Retention

What’s the turnover rate among stewards? High turnover creates knowledge loss and governance continuity issues.

Target: <15% annual steward turnover. Higher turnover suggests steward burnout or lack of support.

Business Impact Metrics

Business impact metrics connect governance to outcomes executives care about.

Time Saved in Data Discovery and Preparation

How much time do analysts spend finding and preparing data? Effective governance (through catalogs, metadata, quality) should reduce this significantly.

Measure by surveying analysts: time spent on data discovery before and after governance improvements. Target: 30-50% reduction in data preparation time.

Reduction in Data-Related Operational Issues

How often do operational processes fail or require manual intervention due to data quality issues? Track incident volume and resolution time.

Governance should reduce operational incidents through better data quality and proactive monitoring. Target: 40-60% reduction in data incidents.

Improved Decision Confidence

Survey business decision-makers: how confident are they in the data underlying their decisions? Low confidence means data isn’t trusted regardless of actual quality.

Track confidence ratings over time. Target: 80%+ of leaders “confident” or “very confident” in data quality.

Revenue Impact from Data Quality

In some contexts, poor data quality directly impacts revenue: incorrect billing, missed sales opportunities, or customer dissatisfaction. Quantify revenue protected or gained through governance improvements.

This is challenging to measure but highly impactful when demonstrated. Even rough estimates (“we estimate governance prevented $5M in billing errors”) resonate with executives.

Cost Reduction from Improved Efficiency

Calculate costs saved through reduced manual data correction, fewer data reconciliation efforts, less rework from bad data, and improved operational efficiency.

Build business cases showing governance ROI through quantified cost reductions.

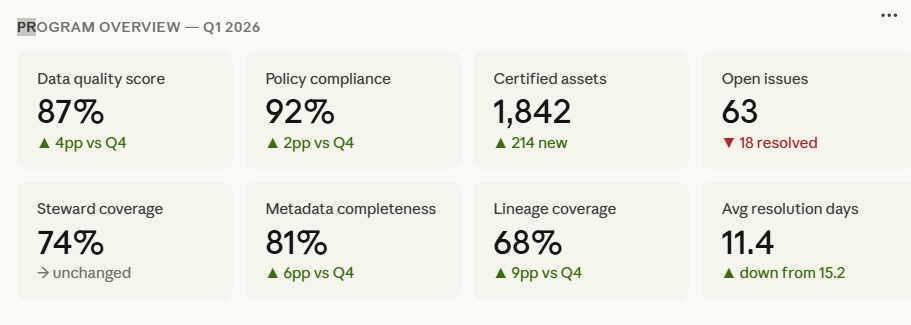

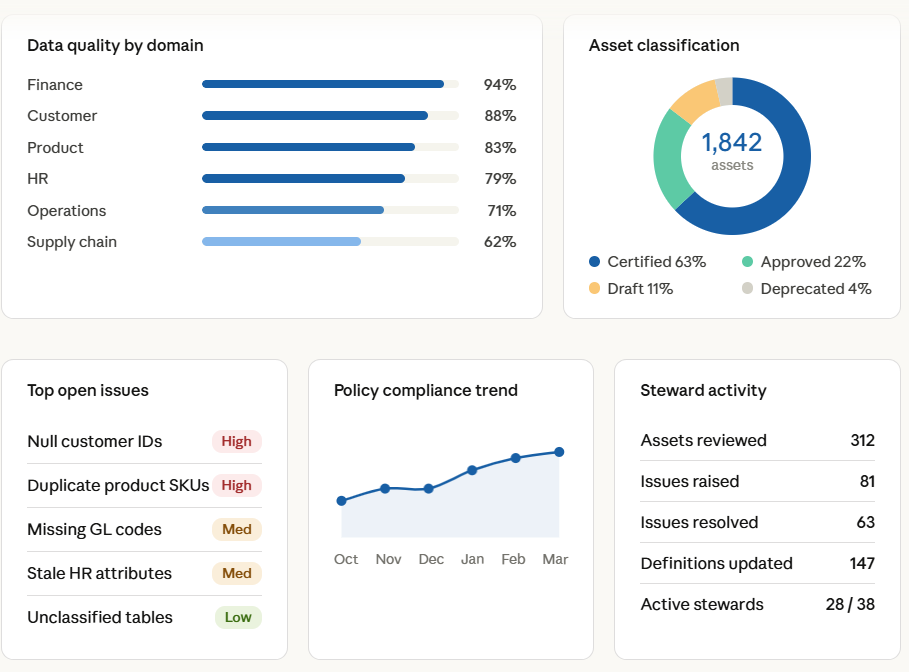

Building a Balanced Governance Scorecard

Tracking dozens of individual metrics becomes overwhelming. Effective programs organize metrics into balanced scorecards providing at-a-glance governance health views.

The Four Perspectives Framework

Organize governance metrics into four perspectives inspired by the Balanced Scorecard methodology:

Business Value Perspective: metrics demonstrating governance business impact (time saved, costs reduced, revenue protected, decision confidence).

Data Quality Perspective: metrics tracking data fitness for use (completeness, accuracy, timeliness, quality issue trends).

Process Perspective: metrics measuring governance process effectiveness (adoption rates, cycle times, compliance rates, steward activity).

Foundation Perspective: metrics assessing governance infrastructure (tool adoption, metadata completeness, training completion, stewardship coverage).

Each perspective contains 3-5 key metrics, creating a scorecard with 12-20 total metrics — enough for comprehensive coverage without overwhelming detail.

Leading vs. Lagging Indicators

Balance leading indicators (predictive of future performance) with lagging indicators (measuring past outcomes).

Leading indicators include governance tool adoption trends, steward activity levels, training completion rates, and policy awareness scores. These predict future governance effectiveness.

Lagging indicators include data quality improvement, compliance audit findings, operational incident reduction, and business impact metrics. These measure actual outcomes but react slowly to governance changes.

Leading indicators enable proactive management: if tool adoption stalls, address it before quality suffers. Lagging indicators demonstrate ultimate impact but don’t help course-correct quickly.

Metric Targets and Thresholds

Each metric needs defined targets representing success and thresholds triggering action.

Green/Yellow/Red thresholds provide at-a-glance status. Example for data completeness:

- Green: ≥95% complete

- Yellow: 90-94% complete

- Red: <90% complete

Yellow triggers investigation and corrective action planning. Red triggers immediate intervention.

Set targets based on industry benchmarks where available, internal baselines (improve 20% over current state), and business requirements (regulatory mandates, operational needs).

Targets should be ambitious but achievable. Unattainable targets demoralize teams. Easily met targets don’t drive improvement.

Scorecard Visualization

Present scorecards visually for executive consumption. Dashboards with color-coded metrics, trend arrows (↑↓→), and sparklines showing recent trends communicate quickly.

Avoid overwhelming detail in executive scorecards. Executives need the summary view; detailed metrics support deep dives when issues arise.

Update scorecards regularly — monthly for most metrics, weekly for critical operational metrics, quarterly for strategic metrics.

Measuring Governance Maturity

In addition to operational metrics, organizations track overall governance maturity — the sophistication and effectiveness of their governance program.

Data Governance Maturity Models

Several maturity models exist. Most use 5-level scales:

Level 1 – Initial/Ad Hoc: No formal governance. Data management is reactive and inconsistent.

Level 2 – Defined: Governance policies and processes are documented. Awareness is building but adoption is limited.

Level 3 – Managed: Governance processes are consistently followed. Stewards are active. Metrics are tracked.

Level 4 – Measured: Governance effectiveness is quantified. Programs continuously improve based on metrics. Governance is integrated into operations.

Level 5 – Optimized: Governance is deeply embedded in culture. Automation is extensive. The organization proactively anticipates governance needs.

Organizations assess current maturity levels across dimensions: data quality management, metadata management, data architecture, data security, stewardship, and policy management.

Maturity assessment provides baseline for improvement roadmaps. Progress from Level 2 to Level 3 represents significant governance advancement even if metrics don’t yet show dramatic business impact.

DCAM (Data Management Capability Assessment Model)

DCAM is an industry-standard maturity framework from EDM Council, particularly common in financial services. It assesses 37 capabilities across 14 components.

Organizations can self-assess or engage external assessors for DCAM evaluation. DCAM scores enable peer benchmarking and identify specific capability gaps requiring investment.

DAMA-DMBOK Framework

The DAMA Data Management Body of Knowledge describes data management capabilities. Organizations assess maturity against DMBOK’s 11 knowledge areas: data governance, data architecture, data quality, metadata, master data, and others.

DMBOK provides comprehensive framework for assessing enterprise data management maturity including but extending beyond governance.

Self-Assessment vs. External Assessment

Organizations can self-assess maturity using frameworks or engage external consultants for independent assessment.

Self-assessment advantages: lower cost, internal ownership, and ongoing assessment capability.

Self-assessment challenges: potential bias (rating capabilities higher than reality) and lack of external benchmarking perspective.

External assessment advantages: objectivity, industry benchmarking, and expert perspective.

External assessment challenges: higher cost and one-time snapshot rather than continuous monitoring.

Many organizations combine approaches: annual external assessment for baseline and benchmarking, with quarterly internal self-assessment tracking progress.

Common Metric Pitfalls and How to Avoid Them

Organizations frequently make predictable mistakes in governance measurement.

Vanity Metrics

Vanity metrics look impressive but don’t indicate real progress or value. Examples include total number of governance policies (more policies ≠ better governance), number of steward meetings held (activity ≠ outcomes), size of business glossary (quantity ≠ quality or usage), and governance training hours delivered (hours ≠ comprehension or behavior change).

The fix: For every metric, ask “if this improves, are we confident governance is more effective?” If the answer is uncertain, reconsider the metric.

Measuring What’s Easy Instead of What Matters

Organizations often measure what’s readily available rather than what’s truly important. It’s easy to count policies. It’s hard to measure decision quality improvement from better data.

The fix: Identify the 5 outcomes that would prove governance success (better data quality, faster analytics, reduced risk, etc.). Design metrics for those outcomes even if measurement is challenging. Supplement with easier proxy metrics but never substitute entirely.

Gaming and Perverse Incentives

When metrics become targets, people optimize for metrics rather than underlying goals. Example: if data catalog coverage is measured, teams may catalog data with minimal metadata just to increase the count.

The fix: Measure quality alongside quantity. Track catalog coverage AND metadata completeness AND catalog usage. Gaming becomes harder when multiple related metrics must all improve.

Analysis Paralysis

Organizations delay governance action while building perfect measurement frameworks. They debate metric definitions endlessly or wait for comprehensive monitoring tools before starting governance work.

The fix: Start with 5-10 imperfect metrics measuring critical areas. Collect data manually if needed. Iterate and improve measurement over time. Some data is better than no data while pursuing perfection.

Lack of Baseline

Organizations implement governance improvements without measuring starting conditions, making impact demonstration impossible. “Data quality improved by 40%” requires knowing the starting point.

The fix: Before any significant governance initiative, establish baseline metrics. Even rough estimates (“we estimate 60% of customer records have quality issues”) provide comparison basis.

Insufficient Context

Metrics without context mislead. “Data quality is 85%” sounds good or bad depending on starting point (up from 60% or down from 95%?), industry benchmarks, and business requirements.

The fix: Always present metrics with context — trends over time, comparison to targets, and peer benchmarks where available.

Measuring Data Governance ROI

Executives want return on investment. Data governance requires significant investment in people, tools, and processes. Demonstrating ROI justifies continued funding.

Hard ROI: Quantified Financial Impact

Hard ROI directly quantifies financial benefits from governance:

Cost Avoidance: Calculate costs prevented through governance. Examples include fines avoided through compliance (GDPR penalties are public record), operational errors prevented by data quality, and manual data correction time eliminated.

Cost Reduction: Measure actual cost decreases attributable to governance including reduced data storage from deduplication and archiving, lower reconciliation labor costs, and decreased incident response costs.

Revenue Protection/Growth: Quantify revenue impact including prevented revenue leakage from billing errors, increased sales from better customer data, and faster time-to-market from governed data assets.

Calculate ROI as: (Financial Benefits – Governance Costs) / Governance Costs × 100%

Example: $8M in benefits – $3M in costs = $5M net benefit. ROI = $5M / $3M = 167%

Soft ROI: Difficult to Quantify but Real

Some governance benefits are real but hard to quantify precisely:

Improved Decision Quality: Better data leads to better decisions, but isolating governance contribution from other factors is challenging. Use proxies like decision maker confidence ratings.

Enhanced Reputation: Strong data governance builds trust with customers, regulators, and partners. Reputation impact is real but hard to monetize.

Increased Agility: Governed data enables faster response to market changes. Quantify through reduced time to deploy new analytics or systems.

Risk Mitigation: Governance reduces risk of data breaches, compliance violations, and operational failures. Calculate by estimating probability × impact of prevented risks.

Present soft ROI benefits alongside hard ROI, acknowledging they’re harder to quantify but nonetheless valuable.

Building the Business Case

When seeking governance investment, build business cases showing:

Current state costs: Quantify current costs from poor data quality, compliance gaps, and inefficiency. This demonstrates the problem severity.

Proposed governance investment: Detail costs for people, tools, and processes.

Expected benefits: Quantify both hard and soft benefits over 1-3 years.

Net financial impact: Show when breakeven occurs and cumulative benefits.

Risk considerations: What happens if we don’t invest in governance? Quantify risk exposure.

Well-built business cases combine financial analysis with narrative explaining governance strategic value beyond pure ROI.

Tools and Platforms for Governance Metrics

Manually collecting and reporting governance metrics becomes unsustainable. Tooling enables scalable measurement.

Data Quality Monitoring Tools

Data quality platforms (Informatica Data Quality, Talend, Great Expectations, Monte Carlo, Datafold) provide automated quality monitoring, measurement, and alerting.

These tools profile data, execute validation rules, track quality trends, and generate quality scorecards — providing the data quality metrics core to governance measurement.

Data Catalog Analytics

Data catalogs (Collibra, Alation, Microsoft Purview) provide analytics on catalog usage, metadata completeness, search behavior, and user engagement.

Catalog analytics answer: Are people using the catalog? Is metadata improving? Which assets are most accessed? These metrics demonstrate catalog value and identify gaps.

Governance Dashboards

Business intelligence platforms (Tableau, Power BI, Qlik) or specialized governance platforms create executive dashboards combining metrics from multiple sources.

Dashboards provide at-a-glance governance health views, drill-down capabilities for investigation, and trend analysis over time.

Workflow and Ticketing Systems

Governance workflows for access requests, data quality issues, and stewardship tasks generate valuable operational metrics when tracked in workflow tools (Jira, ServiceNow, custom governance platforms).

Track request volumes, cycle times, resolution rates, and backlog trends from workflow system data.

Survey and Feedback Tools

User satisfaction with governance, data confidence, and steward effectiveness often require surveys. Survey platforms (Qualtrics, SurveyMonkey, Google Forms) collect qualitative input complementing quantitative metrics.

Regular pulse surveys (quarterly or semi-annual) track sentiment trends over time.

Reporting Governance Metrics to Stakeholders

Different audiences need different metric presentations.

Executive Reporting

Executives need high-level summaries focusing on business impact and strategic health. Present monthly or quarterly scorecards with 10-15 key metrics, color-coded status (green/yellow/red), trends (↑↓→), and brief narrative explaining significant changes or issues.

Focus on business value metrics (ROI, risk reduction, efficiency gains) supplemented by critical health metrics (data quality, compliance).

Keep executive reports to 1-2 pages or dashboard screens. Detail is available for questions but not presented upfront.

Governance Council Reporting

Governance councils need more detail than executives but less than operational teams. Report on governance program health across all dimensions, compliance and policy adherence, stewardship effectiveness, tool adoption and usage, and key initiatives and their progress.

Governance council reports might span 5-10 pages with supporting appendices. Present monthly.

Operational Team Reporting

Data stewards, governance analysts, and operational teams need detailed metrics for daily management. Provide dashboards with drill-down capabilities, detailed data quality metrics by domain/system, issue backlogs and aging, access request queues, and metadata completeness by asset.

Operational teams should have real-time or daily access to their metrics rather than waiting for monthly reports.

Business Unit Reporting

Business units care primarily about data relevant to their operations. Provide data quality metrics for their data, access and usage metrics for their users, compliance status for their systems, and stewardship activity in their domain.

Business unit reports demonstrate governance value in their context rather than enterprise-wide abstraction.

Evolving Metrics Over Time

Governance programs mature, and metrics should evolve accordingly.

Early-Stage Metrics

New governance programs focus on foundation and adoption metrics: governance awareness and training completion, policy documentation completeness, steward appointments and activity, and tool implementation and basic adoption.

Early success means establishing governance infrastructure and getting initial buy-in.

Mid-Stage Metrics

Maturing programs add quality and effectiveness metrics: data quality improvement trends, metadata completeness growth, access governance efficiency, and initial business impact measurements.

Mid-stage success means governance demonstrating operational value.

Advanced-Stage Metrics

Mature programs focus on business outcomes and optimization: quantified ROI and business value, predictive analytics on data quality, automated governance coverage, and governance efficiency (cost per governed asset).

Advanced success means governance optimizing for business impact and efficiency.

Metrics should align with program maturity stage. Measuring ROI in month 3 of a governance program is premature. Focusing only on training completion in year 3 misses the point.

Industry-Specific Governance Metrics

Certain industries have unique governance measurement needs.

Financial Services

Financial services face stringent regulatory oversight requiring specific metrics: model risk management compliance, regulatory reporting accuracy, data lineage completeness for critical reports, fair lending data quality, and anti-money laundering data coverage.

Banking regulators expect quantified proof of data governance effectiveness. Metrics aren’t optional; they’re regulatory requirements.

Healthcare

Healthcare governance metrics emphasize patient safety and privacy: clinical data accuracy (directly affects care), HIPAA compliance and privacy incidents, master patient index quality (duplicate patient records create safety risks), and interoperability and data exchange success rates.

Healthcare metrics must demonstrate patient safety protection, not just operational efficiency.

Retail and E-Commerce

Retail metrics focus on customer experience and operations: product data quality and completeness, customer data accuracy for personalization, inventory data accuracy, and recommendation engine data quality.

Retail operates at high velocity with massive data volumes. Metrics must scale accordingly.

Government

Government metrics emphasize transparency, citizen service, and accountability: data openness and accessibility, citizen data request fulfillment time, privacy and security compliance, and inter-agency data sharing effectiveness.

Government faces unique challenges around legacy systems and public scrutiny affecting metric selection and reporting.

Frequently Asked Questions

What are data governance metrics? Data governance metrics are quantifiable measurements tracking governance program performance, adoption, effectiveness, and business impact. They include data quality metrics (completeness, accuracy, timeliness), adoption metrics (tool usage, training completion, process adherence), compliance metrics (policy adherence, audit findings, privacy incidents), stewardship metrics (steward activity, issue resolution), and business impact metrics (time saved, costs reduced, decision confidence). Effective programs balance activity metrics with outcome metrics.

How do you measure data governance success? Measure governance success through balanced scorecards combining business value (ROI, efficiency gains, revenue protection), data quality (completeness, accuracy, quality issue trends), process effectiveness (adoption rates, cycle times, compliance), and foundational health (tool usage, metadata completeness, stewardship coverage). Track both leading indicators (predicting future performance) and lagging indicators (measuring actual outcomes). Connect metrics to business outcomes executives care about.

What is a good data quality score? Target data quality depends on use case criticality. Financial regulatory reporting should exceed 99.5% accuracy and completeness. Customer analytics might target 95% quality. Marketing data could accept 90%. Rather than universal targets, define quality requirements by data domain and use case. Track trends — improving from 75% to 85% quality demonstrates progress even if not yet at target.

How do you measure data governance ROI? Calculate ROI by quantifying financial benefits (cost avoidance from prevented fines, cost reduction from eliminated manual work, revenue protection from prevented billing errors) minus governance costs (people, tools, processes), divided by governance costs. Example: $8M benefits – $3M costs = $5M net, ROI = 167%. Include both hard ROI (directly quantified) and soft ROI (difficult to quantify but real, like improved decision quality and enhanced reputation).

What metrics should a CDO track? CDOs should track strategic metrics demonstrating governance business value: data governance ROI and business impact, data quality trends across critical domains, regulatory compliance status and audit findings, data literacy and capability development, data-driven decision effectiveness, governance maturity progression, stakeholder satisfaction with data and governance, and data-related risk reduction. Present monthly scorecards to executives with 10-15 key metrics balancing outcomes with program health.

How often should governance metrics be reported? Report frequency varies by audience and metric type. Executives need monthly or quarterly scorecards. Governance councils need monthly detailed reports. Operational teams need weekly or real-time dashboards. Business units need quarterly reviews. Critical operational metrics (data quality issues, access requests) require real-time or daily monitoring. Strategic metrics (maturity, ROI) can be quarterly or semi-annual.

What’s the difference between leading and lagging indicators in governance? Leading indicators predict future performance: governance tool adoption trends, steward activity levels, training completion, policy awareness. They enable proactive management — if tool adoption stalls, address it before quality suffers. Lagging indicators measure outcomes: data quality improvement, compliance audit findings, operational incident reduction, business impact. They demonstrate ultimate success but react slowly to governance changes. Balanced programs track both.

How do you measure data steward effectiveness? Measure steward effectiveness through activity metrics (issues triaged, metadata created, access requests reviewed, meeting participation), outcome metrics (percentage of issues resolved without escalation, data quality improvements in stewarded domains, metadata completeness in assigned areas), and stakeholder satisfaction (surveys of data consumers on steward responsiveness and helpfulness). Track steward retention as high turnover signals problems. Target: 70-80% of issues resolved by stewards, 80%+ stakeholder satisfaction.

What tools are needed to measure governance metrics? Essential tools include data quality monitoring platforms (Informatica, Talend, Great Expectations) for automated quality measurement, data catalogs (Collibra, Alation, Purview) with usage analytics, business intelligence platforms (Tableau, Power BI) for dashboards, workflow systems (Jira, ServiceNow) for operational metrics, and survey tools (Qualtrics) for satisfaction measurement. Start with basic tools and spreadsheets if needed; don’t delay measurement while building perfect infrastructure.

How do you avoid vanity metrics in governance? Avoid vanity metrics by asking for each metric: “If this improves, are we confident governance is more effective?” Focus on outcomes (data quality improvement, business impact) not just activity (policies written, meetings held). Measure both quantity and quality (catalog coverage AND metadata completeness AND usage). Connect metrics to business outcomes. Present trends and context, not just point-in-time numbers. Test whether metrics drive correct behaviors or create perverse incentives.

Summary

Data governance metrics transform governance from faith-based to evidence-based, demonstrating whether governance programs deliver value or simply create bureaucracy. Effective measurement requires balanced approaches tracking adoption, data quality, metadata management, access governance, compliance, stewardship effectiveness, and business impact.

Organizations often make the mistake of measuring activities (policies written, meetings held, training delivered) rather than outcomes (quality improved, risks mitigated, decisions enhanced). Activity metrics have value but must be supplemented with effectiveness metrics proving governance works.

The most successful governance measurement programs organize metrics into balanced scorecards with four perspectives: business value demonstrating ROI and impact, data quality tracking fitness for use, process measuring governance effectiveness, and foundation assessing infrastructure health. Each perspective contains 3-5 key metrics creating comprehensive views without overwhelming detail.

Metrics should balance leading indicators predicting future performance with lagging indicators measuring actual outcomes. Leading indicators like tool adoption and steward activity enable proactive management. Lagging indicators like quality improvement and business impact demonstrate ultimate success. Both are necessary.

Common pitfalls include vanity metrics that look impressive but don’t indicate progress, measuring what’s easy instead of what matters, creating perverse incentives through poorly designed metrics, analysis paralysis delaying governance while pursuing perfect measurement, insufficient baseline data making impact demonstration impossible, and lack of context making metrics misleading.

Demonstrating governance ROI requires quantifying both hard benefits (prevented fines, reduced costs, protected revenue) and soft benefits (improved decision quality, enhanced reputation, increased agility). Well-built business cases combine financial analysis with strategic narrative explaining governance value beyond pure ROI.

Technology enables scalable measurement through data quality monitoring tools, catalog analytics, governance dashboards, workflow systems, and survey platforms. Organizations should start with basic tools and manual collection if necessary rather than delaying measurement while building perfect infrastructure.

Different stakeholders need different metric presentations. Executives need high-level scorecards focusing on business impact. Governance councils need comprehensive program health views. Operational teams need detailed dashboards with drill-down capabilities. Business units need metrics relevant to their specific operations.

Metrics should evolve as governance matures. Early-stage programs focus on foundation and adoption. Mid-stage programs add quality and effectiveness metrics. Advanced programs optimize for business outcomes and efficiency. Measuring ROI in month 3 is premature; focusing only on training completion in year 3 misses the point.

Industry-specific considerations affect metric selection. Financial services emphasizes regulatory compliance and model risk management. Healthcare focuses on patient safety and privacy. Retail prioritizes customer experience and operational efficiency. Government emphasizes transparency and accountability.

The organizations succeeding with governance measurement share common patterns: they start measuring early even with imperfect metrics, they balance activity with outcomes, they connect governance metrics to business language executives understand, they use metrics to drive continuous improvement not just reporting, and they evolve measurement sophistication alongside governance maturity.

Governance metrics aren’t the goal — effective governance creating business value is the goal. But metrics are essential tools making governance visible, demonstrable, and improvable. Without measurement, governance remains invisible and unvalidated. With thoughtful measurement, governance demonstrates value and continuously improves.

Ready to strengthen your governance measurement?

- Chief Data Officer’s Guide — building governance programs

- What Is Data Governance? — foundational governance concepts

- Data Governance vs Data Management — understanding the distinction

- What Is a Data Steward? — stewardship effectiveness metrics

Published: March 2026 | Author: Clinton (The Data Governor) | Category: Data Governance

Clinton is a Senior Data Governance Engineer with experience implementing governance metrics and measurement programs at Wells Fargo, Department of Veterans Affairs, and Nestle Purina. He has built governance scorecards and ROI business cases across finance, government, and manufacturing contexts.

Further Reading

- measuring governance success

- Maximizing Efficiency with Continuous Improvement in Data Governance

- How to Assign Roles and Responsibilities in Data Governance

- Data Ethics: Navigating the New Frontier in Data Governance

- Data Governance Certification: A Guide to the Top Certifications in 2023

- What Does a Data Governance Analyst Do? Demystifying the Data Guardian’s Role

- data governance certification

- CDO governance guide

- governance framework guide