If you’re asking “what should a data governance policy include?”—you’re ahead of most organizations. A robust data governance policy is the foundation that transforms data chaos into trusted enterprise assets.

According to DATAVERSITY’s 2025 Trends in Data Management survey, 75% of organizations have implemented data governance programs, yet data quality and governance issues rank among their biggest challenges. The gap? Most organizations lack comprehensive, enforceable policies that translate governance frameworks into operational reality.

This guide draws on real-world policy implementations across banking, government, and manufacturing to show how to create data governance policies that actually work, rather than policies that sit unread in SharePoint folders.

- What Is a Data Governance Policy?

- Why Data Governance Policies Matter in 2026

- The 10 Core Data Governance Policies Every Organization Needs

- 1. Data Classification and Sensitivity Policy

- 2. Data Quality Management Policy

- 3. Data Access and Security Policy

- 4. Data Retention and Disposal Policy

- 5. Data Privacy and Consent Policy

- 6. Master Data Management Policy

- 7. Data Lineage and Documentation Policy

- 8. Data Sharing and Exchange Policy

- 9. Data Incident Management and Response Policy

- 10. AI and Model Governance Policy

- Building Your Data Governance Policy Framework

- Policy Implementation Roadmap

- Industry-Specific Policy Considerations

- Common Policy Implementation Challenges

- Measuring Policy Effectiveness

- Policy Templates and Resources

- Technology Enablement for Policy Enforcement

- The Future of Data Governance Policies

- Conclusion: Policies as the Foundation of Governance Success

- Frequently Asked Questions About Data Governance Policies

- What is a data governance policy?

- What's the difference between data governance policies and data governance frameworks?

- How many data governance policies should we have?

- Who should own data governance policies?

- How do we enforce data governance policies?

- How often should policies be reviewed and updated?

- Can data governance policies work in agile/fast-moving environments?

- What's the biggest mistake organizations make with data governance policies?

- How do we get buy-in for data governance policies from business stakeholders?

- Where can I find data governance policy templates?

- About The Data Governor

What Is a Data Governance Policy?

A data governance policy is a formal framework of principles, roles, processes, standards, and controls that ensures data is accurate, secure, compliant, and usable throughout its lifecycle.

Think of policies as the operating instructions for your data governance program. Your governance framework defines what you’ll do. Policies define how you’ll do it, who is accountable, and what happens when things go wrong.

Without clear policies, governance programs become ambiguous discussions that never translate into action. With well-crafted policies, everyone knows exactly what’s expected, how to comply, and where to escalate issues.

The Simple Truth About Policies

Data governance policies answer three critical questions:

- What are the rules? (Standards and requirements)

- Who enforces them? (Roles and accountability)

- How do we measure compliance? (Monitoring and consequences)

When these questions go unanswered, organizations face regulatory fines, data breaches, operational inefficiencies, and teams that don’t trust their own data.

Why Data Governance Policies Matter in 2026

The business and regulatory landscape has fundamentally changed. Organizations that master policy implementation gain strategic advantages while those that neglect it face mounting risks.

Business Impact

Risk Mitigation Worth Millions

The average cost of a data breach reached $4.88 million in 2024, according to IBM’s Cost of a Data Breach Report. Poor data quality costs organizations an average of $12.9 million annually. Data governance policies directly mitigate these risks through:

- Access controls limiting who sees sensitive data

- Quality standards preventing bad data from propagating

- Classification schemes protecting high-risk information

- Retention policies reducing exposure to old data

- Incident response procedures minimizing damage

Regulatory Compliance

With 137 active data privacy laws globally as of February 2026 (up from 89 in 2023), compliance failures carry devastating consequences. Policies provide the controls and documentation needed to demonstrate compliance during audits and investigations.

Organizations without formal policies report 40% more data quality issues and 60% slower compliance verification compared to those with comprehensive policy frameworks.

Operational Efficiency

Eliminating Redundant Work

Clear policies eliminate the chaos that wastes 30-40% of knowledge workers’ time hunting for trustworthy data:

- Finance teams stop reconciling conflicting reports because quality policies ensure a single source of truth

- Marketing stops wasting budget on duplicate contacts because retention policies clean old data

- IT stops building integration patches because interoperability policies standardize formats

- Compliance teams respond to audits in hours instead of weeks because documentation policies maintain audit trails

Enabling AI and Analytics

Organizations with mature data governance policies are 3x more likely to successfully deploy AI at scale. Policies ensure:

- Training data quality for reliable model outputs

- Ethical guidelines for responsible AI

- Lineage documentation for explainability

- Bias detection and mitigation standards

Without governance policies, data scientists spend 80% of their time cleaning data instead of building models.

The 10 Core Data Governance Policies Every Organization Needs

| Policy | Purpose | Key Requirements | Priority | Implementation Time |
|---|---|---|---|---|
| Data Classification | Categorize data by sensitivity | 4 levels, handling standards, labeling | High (Foundation) | 6-8 weeks |
| Data Quality | Ensure accuracy, completeness | Quality dimensions, thresholds, monitoring | High (Business Pain) | 8-12 weeks |
| Data Access | Control who sees what data | RBAC, approval workflows, recertification | High (Security) | 6-10 weeks |
| Data Retention | Define how long to keep data | Retention schedules, disposal methods | Medium (Compliance) | 8-12 weeks |
| Data Privacy | Protect PII per regulations | Consent, subject rights, breach notification | Medium (Regulatory) | 10-16 weeks |
| Master Data | Single source of truth | Golden records, survivorship rules | Medium (Operational) | 12-16 weeks |
| Data Lineage | Document data flow | Source-to-target mapping, metadata | Medium (Transparency) | 8-12 weeks |
| Data Sharing | External data exchange | Agreements, de-identification, security | Low (As Needed) | 6-8 weeks |
| Incident Response | Handle data incidents | Severity levels, notification, remediation | Low (Proactive) | 4-6 weeks |
| AI Governance | Responsible AI deployment | Model cards, bias testing, monitoring | Low (If Applicable) | 10-14 weeks |

Effective governance requires multiple policies working together. Here are the essential policies every organization should implement, regardless of industry.

1. Data Classification and Sensitivity Policy

Purpose: Define how data is categorized by sensitivity level and establish handling requirements for each classification.

What It Covers:

- Classification levels (Public, Internal, Confidential, Highly Confidential)

- Criteria for assigning classifications

- Handling requirements for each level (encryption, access controls, transmission methods)

- Labeling standards for documents and datasets

- Classification review and reclassification procedures

Implementation Example:

At Wells Fargo, we implemented a four-tier classification scheme:

- Public: Marketing materials, published reports

- Internal Use Only: Internal communications, operational data

- Confidential: Customer non-public information, financial records

- Restricted: Social Security numbers, account numbers, credentials

Each tier had specific technical controls automatically enforced. A dataset classified as “Restricted” automatically triggered encryption at rest, encryption in transit, role-based access control, and audit logging.
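To illustrate how a classification label can drive controls automatically, here is a minimal Python sketch. Only the Restricted row reflects the controls described above; the other rows, the `Controls` type, and the `required_controls` helper are hypothetical placeholders, not the configuration used in that implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Controls:
    encrypt_at_rest: bool
    encrypt_in_transit: bool
    role_based_access: bool
    audit_logging: bool


# Only "Restricted" mirrors the controls named in the text.
# The other three rows are illustrative assumptions.
CONTROLS_BY_TIER = {
    "Public": Controls(False, False, False, False),
    "Internal Use Only": Controls(False, True, True, False),
    "Confidential": Controls(True, True, True, True),
    "Restricted": Controls(True, True, True, True),
}


def required_controls(tier: str) -> Controls:
    """Look up the controls a dataset must have for its classification tier."""
    try:
        return CONTROLS_BY_TIER[tier]
    except KeyError:
        # An unknown label is rejected rather than silently treated as Public.
        raise ValueError(f"Unknown classification tier: {tier!r}") from None
```

Keeping the mapping in one table means a provisioning pipeline can call `required_controls(dataset_tier)` and apply whatever comes back, so changing a tier's requirements is a one-line edit rather than a hunt through scripts.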

Policy Template Excerpt:

All data assets must be assigned one of four classification levels within 30 days of creation. Data owners are responsible for initial classification. Data classified as Confidential or Restricted must be reviewed annually.

Restricted Data Requirements:

- Encryption: AES-256 at rest and in transit

- Access: Named individuals only, reviewed quarterly

- Storage: Approved secure environments only

- Transmission: Encrypted channels only

- Retention: Business justification required beyond standard retention

Common Pitfalls:

- Too many classification levels: Organizations create 7-8 levels that nobody understands. Stick to 3-4.

- Unclear criteria: Vague definitions like “sensitive information” lead to inconsistent application. Provide specific examples.

- No automation: Manual classification doesn’t scale. Use automated discovery and classification tools.

Success Metrics:

- % of data assets with assigned classifications

- Time to classify new datasets

- Classification accuracy audit results

- Access violation incidents by classification level

2. Data Quality Management Policy

Purpose: Establish standards for data accuracy, completeness, consistency, timeliness, and validity across the enterprise.

What It Covers:

- Data quality dimensions and definitions

- Quality thresholds by data domain (customer data 95% accuracy, product data 98% completeness)

- Quality measurement and monitoring procedures

- Issue escalation and remediation workflows

- Data steward quality responsibilities

- Quality metrics reporting requirements

Implementation Example:

At the Department of Veterans Affairs, we defined quality dimensions for veteran demographic data:

- Accuracy: Address matches USPS validation – 95% threshold

- Completeness: Email address populated – 80% threshold

- Consistency: Phone number format standardized – 100% threshold

- Timeliness: Updated within 30 days of change – 90% threshold

- Validity: Date of birth logically valid (not future, not before 1900) – 100% threshold

Automated quality profiling ran daily. Issues below thresholds triggered Jira tickets assigned to responsible data stewards with defined SLAs.

Policy Template Excerpt:

Critical Data Elements (CDEs) must meet defined quality thresholds:

| Quality Dimension | Measurement | Threshold | Review Frequency |
|---|---|---|---|
| Accuracy | % matching authoritative source | 95% | Daily |
| Completeness | % required fields populated | 90% | Daily |
| Consistency | % conforming to standards | 98% | Daily |
| Timeliness | % updated within SLA | 85% | Weekly |

Quality scores below threshold for 3 consecutive measurements trigger mandatory remediation plan within 5 business days.
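The "3 consecutive measurements" rule is easy to automate. The sketch below is one possible reading of it, assuming the scores arrive oldest-first and that the rule looks at the most recent measurements; the function name `needs_remediation` is hypothetical.

```python
def needs_remediation(scores, threshold, consecutive=3):
    """Return True when the latest `consecutive` scores are all below threshold.

    `scores` is a list of quality percentages ordered oldest to newest.
    """
    if len(scores) < consecutive:
        # Not enough history yet to trigger the rule.
        return False
    return all(score < threshold for score in scores[-consecutive:])


# Thresholds taken from the template excerpt above.
THRESHOLDS = {
    "Accuracy": 95,
    "Completeness": 90,
    "Consistency": 98,
    "Timeliness": 85,
}
```

For example, `needs_remediation([96, 94, 93, 92], THRESHOLDS["Accuracy"])` returns `True`, while a single recovery in the last three measurements resets the trigger.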

Common Pitfalls:

- Same quality standards for all data: Not all data requires 99% quality. Risk-adjust your thresholds.

- Measurement without action: Dashboards showing poor quality don’t fix anything. Require remediation plans.

- IT-only ownership: Business data owners must be accountable for quality, not just IT.

Success Metrics:

- Data quality scores by domain

- % datasets meeting quality thresholds

- Time to remediate quality issues

- Cost of poor quality (rework, returns, errors)

3. Data Access and Security Policy

Purpose: Define who can access what data under what circumstances and how that access is controlled, monitored, and revoked.

What It Covers:

- Role-based access control (RBAC) principles

- Least-privilege access standards

- Access request and approval workflows

- Access recertification requirements (quarterly for sensitive data)

- Privileged access management

- Access logging and monitoring

- Offboarding procedures

Implementation Example:

At Nestle Purina, we implemented attribute-based access control (ABAC) for our product master data in Profisee:

- Product Developers: Read/write access to formulations, not to costing

- Supply Chain: Read access to formulations, read/write to suppliers

- Finance: Read access to everything, write access to costing

- Marketing: Read access to specifications, write access to packaging

Access requests went through a workflow: requester submits business justification → data owner approves → IT provisions → quarterly recertification. All access was logged for audit.

Policy Template Excerpt:

Access Principles:

1. Least Privilege: Users granted minimum access necessary for job function

2. Need-to-Know: Access requires documented business justification

3. Separation of Duties: No single user has create-and-approve rights

4. Time-Limited: Sensitive data access expires after 90 days unless renewed

Access Request Process:

- Request submitted via ServiceNow with business justification

- Data Owner approval required (3 business day SLA)

- IT provisions within 2 business days of approval

- Access logged and monitored

- Quarterly recertification for Confidential/Restricted data

Access Revocation:

- Immediate upon employee termination or role change

- Automatic expiration after 90 days of non-use

- Data Owner can revoke at any time
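The revocation rules above can be expressed as a small check that a nightly job might run. This is a sketch under assumptions: the `AccessGrant` fields and the `revocation_reason` helper are hypothetical, and "90 days of non-use" is interpreted as more than 90 days since the last recorded use (or since the grant date if the access was never used).

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

NON_USE_LIMIT = timedelta(days=90)


@dataclass
class AccessGrant:
    user: str
    dataset: str
    granted_on: date
    last_used_on: Optional[date] = None
    employee_active: bool = True


def revocation_reason(grant: AccessGrant, today: date) -> Optional[str]:
    """Return why a grant should be revoked, or None if it can stay."""
    if not grant.employee_active:
        return "employee terminated"
    # Fall back to the grant date when the access has never been used.
    last_activity = grant.last_used_on or grant.granted_on
    if today - last_activity > NON_USE_LIMIT:
        return "unused for more than 90 days"
    return None
```

Returning a reason string rather than a boolean keeps the audit log meaningful: each revocation records which rule fired.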

Common Pitfalls:

- “Everyone needs access to everything”: No. Implement least privilege ruthlessly.

- No recertification: Access creeps over time. Quarterly reviews are essential.

- Approval rubber-stamping: Data owners who approve every request without review defeat the purpose.

Success Metrics:

- % access requests with documented business justification

- Average time to provision access

- % access reviews completed on time

- Access violations detected and remediated

4. Data Retention and Disposal Policy

Purpose: Specify how long data must be retained to meet regulatory and business needs, and how it should be securely destroyed when retention expires.

What It Covers:

- Retention schedules by data type and classification

- Regulatory retention requirements (GDPR, HIPAA, SOX, industry-specific)

- Active vs. archive storage criteria

- Legal hold procedures

- Secure destruction methods

- Retention audit and enforcement

Implementation Example:

At Wells Fargo, retention requirements varied dramatically:

- Transaction records: 7 years (SOX, FINRA)

- Customer communications: 6 years (SEC)

- Employee records: 7 years post-termination (EEOC)

- Marketing analytics: 3 years (business need)

- System logs: 90 days (operational need)

Automated workflows moved data to cheaper archive storage when active retention ended. Legal holds suspended deletion when litigation or investigation began.

Policy Template Excerpt:

Retention Schedules:

| Data Category | Active Retention | Archive Retention | Total | Regulatory Basis |
|---|---|---|---|---|
| Financial Transactions | 3 years | 4 years | 7 years | SOX, FINRA |
| Customer PII | As long as relationship + 6 years | N/A | Relationship + 6 years | GDPR, state laws |
| Employee Records | Employment + 1 year | 6 years | Employment + 7 years | EEOC, IRS |
| Marketing Data | 1 year | 2 years | 3 years | Business need |
| System Logs | 90 days | N/A | 90 days | Operational need |

Disposal Requirements:

- Confidential/Restricted data: Cryptographic erasure or physical destruction

- Internal data: Standard deletion with overwrite

- All disposal logged with date, method, approver
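A retention schedule becomes enforceable once each record can be given a disposal date. The sketch below covers only the two fixed-length categories from the schedule above and shows how a legal hold suspends disposal; the names `RETENTION_YEARS`, `add_years`, and `disposal_due` are hypothetical, and event-based periods such as "Relationship + 6 years" would need the triggering event date as an extra input.

```python
from datetime import date
from typing import Optional

# Total retention in years, taken from the schedule above.
RETENTION_YEARS = {
    "Financial Transactions": 7,
    "Marketing Data": 3,
}


def add_years(d: date, years: int) -> date:
    """Add whole years, mapping Feb 29 to Feb 28 in non-leap years."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


def disposal_due(category: str, created_on: date,
                 legal_hold: bool = False) -> Optional[date]:
    """Return the date a record becomes eligible for disposal.

    Returns None while a legal hold is active, since holds suspend deletion.
    """
    if legal_hold:
        return None
    return add_years(created_on, RETENTION_YEARS[category])
```

A disposal job would compare `disposal_due(...)` against today's date and log the date, method, and approver for every record it destroys.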

Common Pitfalls:

- “Keep everything forever”: Storage costs explode and legal exposure increases.

- No automation: Manual deletion doesn’t scale and creates compliance gaps.

- Ignoring legal holds: Destroying data under legal hold creates massive liability.

Success Metrics:

- % data assets with defined retention periods

- Storage cost trends (should decrease)

- Compliance with deletion schedules

- Legal hold responsiveness (time to suspend deletion)

5. Data Privacy and Consent Policy

Purpose: Define how personally identifiable information (PII) is collected, used, shared, and protected in compliance with privacy regulations.

What It Covers:

- PII definition and inventory

- Lawful basis for processing (consent, contract, legitimate interest, legal obligation)

- Consent management (opt-in, opt-out, withdrawal)

- Data subject rights (access, rectification, erasure, portability, restriction)

- Privacy impact assessments

- Third-party data sharing agreements

- Breach notification procedures

- Privacy by design requirements

Implementation Example:

When implementing GDPR compliance at the VA, we created a comprehensive privacy framework:

- PII Inventory: Automated discovery identified 847 datasets containing PII

- Lawful Basis Mapping: Each use case was documented with its legal basis (most were “legal obligation,” as expected for a federal agency)

- Data Subject Rights: Automated workflows for access requests (30-day SLA), erasure (subject to legal retention), portability (structured export)

- Third-Party Agreements: Template data processing agreements required for all vendors receiving PII

Policy Template Excerpt:

PII Processing Principles:

1. Lawfulness: All processing must have documented lawful basis

2. Purpose Limitation: Use only for stated, legitimate purposes

3. Data Minimization: Collect only data necessary for purpose

4. Accuracy: Maintain accurate and up-to-date PII

5. Storage Limitation: Retain only as long as necessary

6. Integrity and Confidentiality: Protect with appropriate security

Data Subject Rights (GDPR/CCPA):

- Right to Access: Provide copy within 30 days

- Right to Rectification: Correct inaccurate data within 30 days

- Right to Erasure: Delete upon request (subject to legal retention)

- Right to Portability: Provide structured, machine-readable export

- Right to Restriction: Suspend processing upon request

- Right to Object: Stop processing for direct marketing

All rights requests logged, tracked, and verified within mandated timeframes.

Common Pitfalls:

- Assuming consent covers everything: Consent must be specific, informed, and freely given. You can’t force consent.

- No consent withdrawal process: If you can collect consent, users must be able to withdraw it.

- Forgetting third-party processors: Your vendors must comply with the same privacy requirements.

Success Metrics:

- % PII datasets with documented lawful basis

- Data subject rights request response time

- Privacy assessment completion rate

- Third-party vendor compliance verification

6. Master Data Management Policy

Purpose: Establish standards for managing critical business entities (customers, products, suppliers, locations) as single sources of truth.

What It Covers:

- Master data domains and scope

- Golden record creation and survivorship rules

- Data steward responsibilities for master data

- Match and merge criteria

- Master data change management

- Data quality standards for master data

- MDM system of record designation

Implementation Example:

At Nestle Purina, we implemented product master data management across 47 manufacturing plants:

- Product Domain Scope: Formulations, specifications, packaging, costing, regulatory compliance

- Survivorship Rules: Most recent for packaging specs, most complete for formulations, finance-approved for costing

- Stewardship: Product Development owned formulations, Marketing owned packaging, Finance owned costing

- Match Criteria: 90% similarity in product name AND same category triggered merge review

- Change Management: All specification changes required cross-functional approval before MDM update

Before MDM: 34,000 duplicate product records, 12-week product introduction time. After MDM: <500 duplicates, 6-week introduction time.

Policy Template Excerpt:

Master Data Domains:

1. Customer: Legal entities, contacts, addresses, hierarchies

2. Product: Items, SKUs, formulations, specifications

3. Supplier: Vendors, contracts, certifications

4. Location: Sites, warehouses, distribution centers

5. Employee: Personnel, org structure, roles

Golden Record Principles:

- Single authoritative record per entity across enterprise

- Survivorship rules determine which source data "wins" for each attribute

- Automated matching identifies potential duplicates

- Steward review required before merge

- Change history maintained for audit

Data Quality Standards for Master Data:

- Completeness: 100% for critical attributes, 90% for standard attributes

- Accuracy: Monthly verification against authoritative sources

- Uniqueness: <1% duplicates allowed (measured monthly)
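Survivorship rules are easiest to reason about when written as data. The following sketch assembles a golden record from duplicate source records using two illustrative rule types, "most recent" and "preferred source". The record layout, the rule names, and `build_golden_record` are assumptions for illustration and do not reflect the API of any particular MDM product.

```python
from datetime import date

# attribute -> (rule, argument); both rule types are illustrative.
RULES = {
    "packaging_spec": ("most_recent", None),
    "unit_cost": ("preferred_source", "finance"),
}


def build_golden_record(records, rules):
    """Pick the winning value for each attribute across duplicate records."""
    golden = {}
    for attr, (rule, arg) in rules.items():
        candidates = [r for r in records if r.get(attr) is not None]
        if not candidates:
            golden[attr] = None
            continue
        if rule == "most_recent":
            winner = max(candidates, key=lambda r: r["updated_on"])
        elif rule == "preferred_source":
            preferred = [r for r in candidates if r["source"] == arg]
            # Fall back to the most recent value if the preferred source is silent.
            winner = max(preferred or candidates, key=lambda r: r["updated_on"])
        else:
            raise ValueError(f"Unknown survivorship rule: {rule!r}")
        golden[attr] = winner[attr]
    return golden
```

Because the rules live in a dictionary, stewards can review and version them like any other policy artifact, and the merge logic stays unchanged when a rule is reassigned.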

Common Pitfalls:

- Trying to master everything: Start with 1-2 critical domains (usually customer and product)

- IT-only ownership: Business must own master data quality

- No survivorship rules: When merging records, which value wins? Document it.

Success Metrics:

- % master data meeting quality thresholds

- Duplicate record counts and trends

- Time to create new master records

- Business process efficiency improvements

7. Data Lineage and Documentation Policy

Purpose: Require documentation of where data comes from, how it transforms, and where it’s used to enable impact analysis and regulatory compliance.

What It Covers:

- Lineage capture requirements (source-to-target mapping)

- Transformation documentation standards

- Impact analysis procedures

- Metadata management requirements

- Business glossary maintenance

- Data catalog population and enrichment

- Documentation quality standards

Implementation Example:

At Wells Fargo, compliance with BCBS 239 (the Basel Committee’s principles for risk data aggregation and risk reporting) required end-to-end lineage for all regulatory reporting data:

- Automated Lineage: Tools scanned ETL code, database logs, BI reports to build lineage graphs automatically

- Manual Documentation: Business stewards documented business logic and transformation rules in data catalog

- Impact Analysis: Before any source system change, automated lineage showed downstream impact

- Audit Trail: Complete lineage from core banking system → data warehouse → regulatory reports with transformation logic documented

This enabled regulatory auditors to verify accuracy of reported data and reduced audit preparation from 6 weeks to 3 days.
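Impact analysis over lineage is essentially a graph traversal. As a minimal sketch, assuming lineage has already been captured as a mapping from each asset to its direct downstream consumers, a breadth-first search lists everything affected by a change. The asset names and the `downstream_impact` function are hypothetical.

```python
from collections import deque

# Hypothetical lineage: asset -> assets that read from it directly.
LINEAGE = {
    "core_banking.accounts": ["warehouse.accounts"],
    "warehouse.accounts": ["report.capital_ratio", "report.liquidity"],
    "warehouse.customers": ["report.liquidity"],
}


def downstream_impact(graph, source):
    """Return every asset reachable downstream of `source`, sorted by name."""
    seen = set()
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    # Exclude the source itself in case the graph contains a cycle back to it.
    seen.discard(source)
    return sorted(seen)
```

Calling `downstream_impact(LINEAGE, "core_banking.accounts")` lists the warehouse table and both reports, which is the set of owners to notify before changing the source system.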

Policy Template Excerpt:

Lineage Documentation Requirements:

For All Critical Data Elements:

- Source system of record identified

- All intermediate transformation points documented

- Business logic for calculations captured

- Target consumption points mapped

- Data quality checks applied at each stage

- Refresh frequency documented

For Regulatory Reporting Data:

- Complete end-to-end lineage required

- Business definitions aligned to regulatory requirements

- Control points for data quality documented

- Audit trail maintained for 7 years

- Quarterly lineage verification

Metadata Standards:

- Business name and technical name

- Business definition (approved by data owner)

- Data owner and data steward assignment

- Classification level

- Quality metrics

- Lineage relationships

- Usage examples

Common Pitfalls:

- Manual lineage only: Doesn’t scale. Automate as much as possible.

- Technical lineage without business context: Knowing data flows from Table A to Table B doesn’t explain what it means

- One-time documentation: Lineage goes stale instantly. It must be maintained.

Success Metrics:

- % critical data elements with documented lineage

- Metadata completeness scores

- Time to perform impact analysis

- Audit preparation efficiency

8. Data Sharing and Exchange Policy

Purpose: Define standards for sharing data with external parties (partners, vendors, researchers) while maintaining security and compliance.

What It Covers:

- External sharing approval requirements

- Data sharing agreements and contracts

- Data minimization for external sharing

- Anonymization and de-identification standards

- Third-party security requirements

- Data breach notification to partners

- Cross-border data transfer compliance

Implementation Example:

At the VA, sharing veteran health data with academic researchers required rigorous controls:

- Approval Workflow: IRB review → Privacy Officer approval → Data Owner approval → Legal review (4-6 week process)

- Data Minimization: Researchers received only minimum necessary data (no SSN, addresses limited to ZIP-3)

- De-identification: Safe harbor method (remove 18 HIPAA identifiers) or expert determination

- Security Requirements: Encrypted transfer, secure storage attestation, annual security assessments

- Breach Notification: Researchers contractually required to notify VA within 24 hours

Policy Template Excerpt:

External Data Sharing Requirements:

Pre-Sharing Assessment:

1. Business justification documented

2. Data minimization applied (minimum necessary data only)

3. De-identification performed when possible

4. Risk assessment completed

5. Legal review for cross-border transfers

Required Agreements:

- Data Sharing Agreement defining permitted uses

- Security requirements and audit rights

- Breach notification obligations (24-hour notification)

- Return or destruction obligations when purpose complete

- Indemnification provisions

De-Identification Standards:

- Safe Harbor: Remove 18 HIPAA identifiers

- Expert Determination: Statistical disclosure risk < 0.05

- Differential Privacy: Epsilon < 1.0 for privacy-sensitive releases

Common Pitfalls:

- Sharing more than necessary: Always minimize. Don’t share the entire customer database when a partner needs only email addresses.

- No contractual protections: Data sharing agreements must specify permitted uses, security requirements, breach notification

- Forgetting about cross-border transfers: GDPR and other privacy laws impose specific requirements on international transfers.

Success Metrics:

- % external sharing with approved agreements

- Time to approve sharing requests

- Third-party security assessment completion

- Data breach incidents from external parties

9. Data Incident Management and Response Policy

Purpose: Define procedures for detecting, responding to, and recovering from data quality incidents, security breaches, and privacy violations.

What It Covers:

- Incident classification and severity levels

- Detection and reporting procedures

- Incident response team roles

- Investigation and containment procedures

- Notification requirements (internal, regulatory, affected individuals)

- Remediation and recovery procedures

- Post-incident review and lessons learned

Implementation Example:

At Wells Fargo, we classified incidents into 4 severity levels with corresponding response procedures:

- Critical: PII breach affecting >1,000 individuals → Immediate containment, executive notification within 1 hour, regulatory notification within 72 hours, affected individual notification

- High: Quality issue affecting regulatory reporting → Containment within 4 hours, corrective action plan within 24 hours

- Medium: Quality issue affecting business operations → Remediation within 2 business days

- Low: Minor quality issue → Logged for trending, addressed in normal workflow

Policy Template Excerpt:

Incident Severity Levels:

Critical (P1):

- Data breach with PII exposure

- Regulatory reporting data incorrect

- Complete system outage affecting production

Response SLA: Containment within 1 hour, resolution within 4 hours

Notification: CISO, Legal, Compliance within 1 hour

High (P2):

- Quality issue affecting customer-facing systems

- Unauthorized access attempt

- Partial system outage

Response SLA: Containment within 4 hours, resolution within 1 day

Notification: Data Owner, IT Security within 4 hours

Medium (P3):

- Quality issue affecting internal operations

- Policy violation

Response SLA: Resolution within 2 business days

Notification: Data Steward within 8 hours

Low (P4):

- Minor quality issue

- Documentation gap

Response SLA: Resolution within 5 business days

Notification: Logged for trending
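Severity definitions are most useful when tooling can turn them into deadlines. The sketch below encodes only the notification windows from the template above and computes when notification is due; the names `NOTIFY_WITHIN` and `notification_deadline` are hypothetical, and business-day resolution SLAs are left out because they depend on a working calendar.

```python
from datetime import datetime, timedelta
from typing import Optional

# Notification windows from the template above. P4 is only logged for trending.
NOTIFY_WITHIN = {
    "P1": timedelta(hours=1),
    "P2": timedelta(hours=4),
    "P3": timedelta(hours=8),
    "P4": None,
}


def notification_deadline(severity: str,
                          detected_at: datetime) -> Optional[datetime]:
    """Return the latest time notification may be sent, or None if not required."""
    if severity not in NOTIFY_WITHIN:
        raise ValueError(f"Unknown severity: {severity!r}")
    window = NOTIFY_WITHIN[severity]
    return None if window is None else detected_at + window
```

An incident ticketing integration could stamp each ticket with this deadline at creation so that missed notifications show up as overdue items instead of being discovered in the post-incident review.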

Common Pitfalls:

- No clear severity definitions: Ambiguity delays response. Define levels clearly.

- Missing notification timelines: GDPR requires notifying the supervisory authority within 72 hours of becoming aware of a breach. Build that into procedures.

- No post-incident review: Every P1/P2 incident should produce lessons learned to prevent recurrence

Success Metrics:

- Incident detection time

- Time to containment

- Time to resolution by severity

- Repeat incident rate

10. AI and Model Governance Policy

Purpose: Establish standards for developing, deploying, and monitoring AI models to ensure fairness, transparency, and accountability.

What It Covers:

- Model development and approval workflows

- Training data quality and bias testing requirements

- Model documentation (model cards)

- Fairness and bias metrics

- Explainability requirements for high-stakes decisions

- Model monitoring and drift detection

- Model retirement procedures

- Ethical AI principles

Implementation Example:

Modern AI governance has become critical. Organizations deploying AI at scale require policies addressing:

Model Cards: Documented intended use, training data characteristics, performance metrics, known limitations, fairness assessments

Bias Testing: Required fairness metrics across protected classes before production deployment. Models whose disparate impact ratio falls below the 0.80 threshold (a gap of more than 20% relative to the most-favored group) require bias mitigation.

Explainability: High-stakes decisions (credit, employment, healthcare) require explanation of factors driving model output

Monitoring: Production models monitored for drift (input distribution changes), performance degradation, fairness metric changes

Policy Template Excerpt:

AI Model Development Requirements:

Pre-Deployment Checklist:

- Training data quality assessment completed

- Bias testing across protected characteristics

- Model card documentation complete

- Explainability mechanisms implemented for high-stakes uses

- Security assessment (adversarial testing, input validation)

- Data Owner approval for use of training data

- Model Risk Committee approval for high-risk models

Fairness Requirements:

- Disparate impact ratio must be > 0.80 across protected classes

- False positive/negative rates within 10% across demographic groups

- If thresholds not met, document mitigation steps or business justification
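The disparate impact threshold can be checked with a few lines of code. The template does not define the ratio, so this sketch assumes a common convention: the lowest group's favorable-outcome rate divided by the highest group's rate. The function names and the input layout are hypothetical.

```python
def selection_rates(outcomes):
    """Map each group to its favorable-outcome rate.

    `outcomes` maps group name -> (favorable_count, total_count).
    """
    return {group: fav / total for group, (fav, total) in outcomes.items()}


def disparate_impact_ratio(outcomes):
    """Lowest selection rate divided by the highest (assumed definition)."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("No group received a favorable outcome")
    return min(rates.values()) / highest


def passes_fairness_check(outcomes, threshold=0.80):
    """Apply the template's rule that the ratio must exceed the threshold."""
    return disparate_impact_ratio(outcomes) > threshold
```

With approval counts of 50 out of 100 for one group and 30 out of 100 for another, the ratio is about 0.6 and the check fails, which under the policy would require documented mitigation or a business justification.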

Model Monitoring:

- Prediction distribution monitored weekly

- Fairness metrics evaluated monthly

- Model retraining when performance degrades >5%

- Incident response for model failures

Common Pitfalls:

- Treating AI like traditional software: AI models degrade over time and require continuous monitoring

- No training data documentation: You can’t debug model bias if you don’t know what data was used

- Deploying first, governing later: AI governance must be part of model development, not bolted on afterward

Success Metrics:

- % models with completed model cards

- Fairness assessment completion rate

- Model drift detection and retraining rate

- AI incident rate

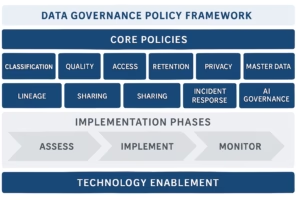

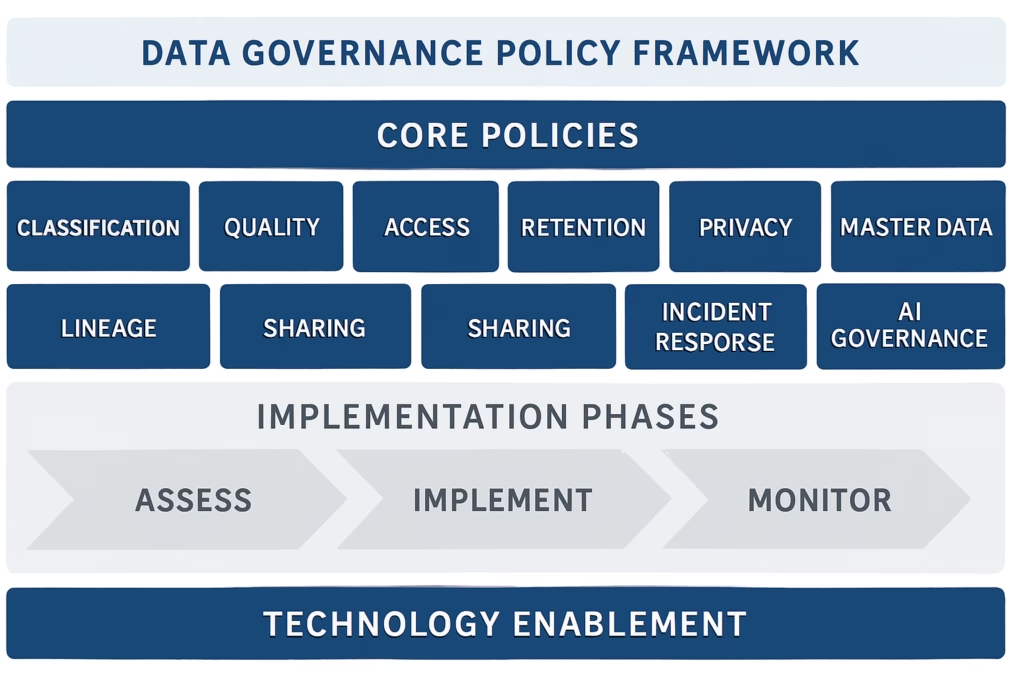

Building Your Data Governance Policy Framework

Having individual policies isn’t enough—they must work together as a cohesive framework. Here’s how to architect your policy ecosystem.

Policy Hierarchy and Relationships

Three-Tier Structure:

- Principles (Strategic level): High-level commitments applicable enterprise-wide
  - Example: “Data is a strategic asset managed with same rigor as financial assets”
  - Audience: Executives, board
  - Review cycle: Annual
- Policies (Tactical level): Specific rules governing data management activities
  - Example: Data Classification Policy, Data Quality Policy
  - Audience: Data owners, stewards, consumers
  - Review cycle: Annual or upon regulatory change
- Standards and Procedures (Operational level): Detailed implementation guidance
  - Example: “How to classify a dataset,” “How to request data access”
  - Audience: Practitioners, IT
  - Review cycle: Quarterly or as needed

Policy Interdependencies:

Your policies should reference each other to create a cohesive framework:

- Data Quality Policy references Data Classification Policy (quality thresholds vary by classification)

- Data Access Policy references Data Classification Policy (access controls vary by classification)

- Data Retention Policy references Data Privacy Policy (retention periods driven by privacy law)

- AI Governance Policy references Data Quality and Data Lineage Policies (models require quality data with documented lineage)
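One way to keep these cross-references manageable is to record them as a dependency graph and derive a drafting order from it. The sketch below uses Python's standard-library `graphlib` (Python 3.9+); the dictionary mirrors the four relationships listed above, and the idea of drafting referenced policies first is an assumption for illustration, not a requirement.

```python
from graphlib import TopologicalSorter

# policy -> set of policies it references (from the list above)
references = {
    "Data Quality": {"Data Classification"},
    "Data Access": {"Data Classification"},
    "Data Retention": {"Data Privacy"},
    "AI Governance": {"Data Quality", "Data Lineage"},
}

# static_order() yields each referenced policy before the policies that cite it.
# It raises graphlib.CycleError if two policies depend on each other in a loop.
drafting_order = list(TopologicalSorter(references).static_order())
```

A cycle error here is a useful signal in its own right: it means two policies each defer to the other and neither can be finalized first.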

Writing Effective Policies

Policy Structure Template:

1. Purpose and Scope

- Why this policy exists

- What it covers (and doesn't cover)

- Who must comply

2. Policy Statement

- Core requirements (shall/must language)

- Specific standards and thresholds

- Prohibited actions

3. Roles and Responsibilities

- Who owns this policy

- Who enforces it

- Who is accountable for compliance

4. Procedures

- How to comply (or reference separate procedures document)

- Workflow diagrams if complex

5. Compliance and Enforcement

- How compliance is measured

- Audit requirements

- Consequences of non-compliance

6. Exceptions

- Exception request process

- Who can approve exceptions

- Time limits on exceptions

7. Related Policies

- Cross-references to other policies

- External regulations addressed

8. Revision History

- Version number

- Date approved

- Changes from previous version
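If policies are stored as structured documents, the eight-part template above can be checked automatically before a draft goes to review. This is a minimal sketch: the section names come from the template, while the function name and the assumption that headings are available as a list of strings are hypothetical.

```python
REQUIRED_SECTIONS = [
    "Purpose and Scope",
    "Policy Statement",
    "Roles and Responsibilities",
    "Procedures",
    "Compliance and Enforcement",
    "Exceptions",
    "Related Policies",
    "Revision History",
]

def missing_sections(draft_headings):
    """Return the required sections absent from a draft, in template order."""
    present = {heading.strip().lower() for heading in draft_headings}
    return [s for s in REQUIRED_SECTIONS if s.lower() not in present]

# Hypothetical draft that has only three sections so far.
draft = ["Purpose and Scope", "policy statement", "Exceptions "]
gaps = missing_sections(draft)
```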

Writing Best Practices:

Use Clear Language: Write for your audience, not lawyers. If finance teams must comply, write in language finance teams understand.

BAD: "Data custodians shall implement appropriate technical and organizational measures to ensure data integrity."

GOOD: "Database administrators must run automated quality checks daily and investigate failures within 24 hours."

Be Specific: Vague policies create confusion and non-compliance.

BAD: "Data must be retained for an appropriate period."

GOOD: "Customer transaction data must be retained for 7 years from transaction date per SOX and FINRA requirements."

Make It Actionable: Every policy requirement should have a clear action and owner.

BAD: "Data quality is important and should be monitored."

GOOD: "Data stewards must review quality dashboards weekly and create remediation tickets for metrics below threshold within 2 business days."

Policy Implementation Roadmap

Creating policies is one thing. Getting them adopted is another. Here’s a proven implementation approach.

Phase 1: Assessment and Prioritization (Weeks 1-4)

Conduct Gap Analysis

Compare your current state to required policies:

- List all policies you currently have (even informal ones)

- Identify regulatory requirements (GDPR, HIPAA, industry-specific)

- Inventory business pain points (data quality issues, access delays, security incidents)

- Map pain points and regulatory requirements to needed policies

- Prioritize based on risk and business impact

Build Business Case

Quantify the value of policies:

- Cost of Current State: Data quality issues, breach risk, regulatory fines, operational inefficiency

- Value of Policies: Risk reduction, efficiency gains, compliance assurance, faster decision-making

- Implementation Cost: Staff time, technology, training

- ROI Calculation: Organizations typically see 3-5x ROI from governance policies
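The business-case arithmetic can be sketched as follows. Every figure here is hypothetical and exists only to show the calculation; the sketch also assumes ROI is expressed as net benefit divided by implementation cost, which is one common convention among several.

```python
def roi_multiple(annual_benefit: float, annual_cost: float) -> float:
    """Net benefit divided by cost; 3.0 means a 3x return (assumed definition)."""
    if annual_cost <= 0:
        raise ValueError("annual_cost must be positive")
    return (annual_benefit - annual_cost) / annual_cost

# Hypothetical figures for illustration only.
benefits = {
    "reduced_rework": 600_000,
    "avoided_audit_findings": 350_000,
    "analyst_time_saved": 250_000,
}
costs = {"staff_time": 180_000, "technology": 90_000, "training": 30_000}

roi = roi_multiple(sum(benefits.values()), sum(costs.values()))
```

Replace the placeholder figures with numbers your finance team will accept, and state the ROI definition explicitly in the business case so reviewers are not comparing different formulas.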

Phase 2: Policy Development (Weeks 5-12)

Assemble Policy Working Group

- Data Governance Office: Facilitates, writes drafts

- Legal/Compliance: Ensures regulatory coverage

- Business Data Owners: Provide business context

- IT/Security: Ensure technical feasibility

- Privacy Office: Privacy and ethics perspective

Start with Quick Wins

Don’t create all 10 policies simultaneously. Start with 2-3 addressing highest-priority pain points:

High-Priority Policies (Start Here):

- Data Classification (foundation for most other policies)

- Data Quality (addresses most common business pain)

- Data Access (addresses security and compliance requirements)

Medium-Priority Policies (Next):

- Data Retention

- Data Privacy

- Master Data Management

Lower-Priority Policies (Later):

- Data Lineage

- Data Sharing

- Incident Management

- AI Governance

Draft and Review Cycle

- Working group drafts policy (2 weeks)

- Stakeholder review and feedback (2 weeks)

- Revise based on feedback (1 week)

- Legal review (1 week)

- Governance council approval (1 week)

- Executive sign-off (1 week)

Total: ~8 weeks per policy group (overlap possible)

Phase 3: Socialization and Training (Weeks 13-16)

Communication Campaign

People don’t comply with policies they don’t know exist:

- Executive Announcement: C-level sponsor sends enterprise communication explaining why policies matter

- Department Briefings: 30-minute sessions with each business unit explaining relevant policies

- Intranet Resources: Policy documents, FAQs, quick reference guides

- Ongoing Reminders: Quarterly newsletter, lunch-and-learns, policy spotlights

Role-Based Training

Tailor training to what each role needs to know:

- Data Owners: Policy accountability, exception approval, compliance verification

- Data Stewards: Day-to-day policy execution, quality monitoring, issue escalation

- Data Consumers: What policies mean for their work, how to request access, classification awareness

- IT/Security: Technical implementation, monitoring, enforcement automation

Make It Easy to Comply

The best policy is one that’s easier to follow than to violate:

- Templates: Provide classification decision trees, access request templates, quality check templates

- Automation: Automated classification, automated quality checks, automated workflows

- Integration: Embed policies into existing tools and workflows (not separate compliance exercises)

Phase 4: Implementation and Enforcement (Weeks 17-24)

Pilot with One Domain

Test policies with a manageable scope before enterprise rollout:

- Select a high-value domain with engaged stakeholders (typically the customer or financial data domain)

- Implement policies with close data steward support

- Document what works and what needs adjustment

- Collect success metrics to share broadly

- Use pilot team as champions for broader rollout

Gradual Enterprise Rollout

Expand to additional domains in waves:

- Wave 1: Pilot domain (complete)

- Wave 2: 2-3 additional critical domains (Month 2-3)

- Wave 3: Remaining domains (Month 4-6)

- Wave 4: Long-tail data and edge cases (Month 7-12)

Enable Compliance Through Technology

Manual policy compliance doesn’t scale:

- Data Catalog: Houses policies, classifications, ownership, lineage

- Quality Tools: Automated profiling, rule execution, monitoring dashboards

- Access Management: Automated RBAC, access request workflows, recertification

- Policy Engines: Automated policy enforcement (e.g., can’t move Restricted data to unapproved cloud)
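To make the policy engine idea concrete, here is a minimal sketch of a rule that blocks Restricted data from moving to an unapproved cloud. The rule table, destination names, and `MoveRequest` type are all hypothetical; a real engine would load rules from your classification policy and integrate with the data platform.

```python
from dataclasses import dataclass

# Hypothetical rule table: classification -> destinations permitted for it.
ALLOWED_DESTINATIONS = {
    "Public": {"on_prem", "approved_cloud", "unapproved_cloud"},
    "Restricted": {"on_prem", "approved_cloud"},
}

@dataclass(frozen=True)
class MoveRequest:
    dataset: str
    classification: str
    destination: str

def evaluate_move(request: MoveRequest) -> tuple[bool, str]:
    """Return (allowed, reason). Unknown classifications are denied (fail closed)."""
    allowed = ALLOWED_DESTINATIONS.get(request.classification)
    if allowed is None:
        return False, f"unknown classification: {request.classification}"
    if request.destination not in allowed:
        return False, (
            f"{request.classification} data may not be moved to {request.destination}"
        )
    return True, "allowed"

blocked = evaluate_move(MoveRequest("customer_master", "Restricted", "unapproved_cloud"))
permitted = evaluate_move(MoveRequest("store_locations", "Public", "unapproved_cloud"))
```

Denying unclassified or unrecognized data by default is a deliberate choice in this sketch: it gives teams a reason to classify data before moving it.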

Measure and Report

You can’t improve what you don’t measure. Track:

- Coverage Metrics: % data assets with assigned classifications, % assets with documented lineage

- Compliance Metrics: % access requests following process, % quality thresholds met

- Efficiency Metrics: Time to classify data, time to approve access, time to remediate quality issues

- Impact Metrics: Data quality scores, security incidents, audit findings, business value delivered

Report quarterly to governance council and executives showing trends and accomplishments.

Phase 5: Continuous Improvement (Ongoing)

Policy Review Cycle

Policies aren’t “set and forget”:

- Annual Review: Every policy reviewed annually for relevance and effectiveness

- Trigger-Based Review: Review when regulations change, technologies evolve, or business needs shift

- Exception Analysis: If many exceptions are granted, the policy may need revision

- Incident Learning: Update policies based on lessons from incidents

Benchmarking

Compare your policies to industry peers and standards:

- DAMA-DMBOK best practices

- Industry frameworks (NIST, ISO)

- Peer organizations through industry groups

- Consulting firm benchmarking studies

User Feedback

The people living with policies daily know what works and what doesn’t:

- Quarterly data steward feedback sessions

- Annual policy satisfaction survey

- Policy suggestion process (anyone can propose improvements)

- Retrospectives after major incidents

Industry-Specific Policy Considerations

While core policies apply universally, implementation varies by industry. Here’s what to emphasize in different sectors.

Banking and Financial Services

Regulatory Drivers: Basel III, BCBS 239, SOX, GLBA, AML/KYC, Dodd-Frank

Policy Emphasis:

Data Lineage Policy: BCBS 239 requires demonstrable end-to-end lineage for all regulatory reporting data. Financial institutions must document complete data flow from source systems through transformation to regulatory reports with full transparency of business logic.

Data Quality Policy: Risk data aggregation requirements mandate specific accuracy and completeness thresholds for regulatory reporting. Quality metrics must be monitored continuously with automated alerts for threshold violations.

Data Retention Policy: Complex retention requirements driven by multiple regulations (7 years for transaction records under FINRA, 6 years for customer communications under SEC). Must include legal hold procedures for litigation and investigations.

Model Governance Policy: Credit models, trading models, and fraud detection models face strict regulatory oversight. Must document model validation, backtesting, and ongoing monitoring with clear governance over model changes.

Example from Wells Fargo:

Our BCBS 239 compliance program required end-to-end automated lineage for all risk reporting data. We implemented Collibra for metadata management and lineage tracking. Any change to source systems, transformations, or reports triggered impact analysis showing all downstream effects. This enabled regulatory auditors to verify accuracy of reported data and reduced audit preparation from 6 weeks to 3 days.

Healthcare

Regulatory Drivers: HIPAA, HITECH, 21 CFR Part 11 (clinical trials), state privacy laws

Policy Emphasis:

Data Privacy Policy: HIPAA sets specific requirements for Protected Health Information (PHI), including the minimum necessary standard, authorization requirements, and breach notification no later than 60 days after discovery. The policy must address electronic PHI (ePHI) security with specific technical safeguards.

Data Access Policy: Role-based access tied directly to patient care relationships. “Break the glass” emergency access procedures. Comprehensive audit logging of all PHI access with regular reviews for inappropriate access.

Data Retention Policy: Generally 6 years post final date of service but varies by state (some require 7-10 years). Medical records for minors often retained until age of majority plus retention period. Research data has different retention requirements.

Data Sharing Policy: Health Information Exchange (HIE), research data sharing, and clinical trial data all have specific requirements. Must address HIPAA minimum necessary, de-identification standards (Safe Harbor or Expert Determination), and business associate agreements.

Example from VA Healthcare:

Patient privacy is paramount. Our data access policy implemented attribute-based access control where clinicians only access records for patients under their care. Every access is logged and monitored. Quarterly audits review access patterns and flag anomalies (accessing an unusual number of records, accessing records for employees, accessing VIP records). Inappropriate access results in immediate investigation and disciplinary action up to termination.

Manufacturing

Regulatory Drivers: FDA (pharmaceutical, medical devices), EPA (environmental data), OSHA (safety data)

Policy Emphasis:

Master Data Management Policy: Product specifications, formulations, bills of materials, and supplier data must be maintained as a single source of truth across global operations. Product introductions require consistent part numbering, specification management, and quality standards.

Data Quality Policy: Quality control data, testing results, and compliance certifications must meet stringent accuracy requirements. Pharmaceutical and medical device manufacturing face FDA requirements for data integrity (ALCOA+ principles: Attributable, Legible, Contemporaneous, Original, Accurate, Complete, Consistent, Enduring, Available).

Data Lineage Policy: Traceability from raw materials through production to finished goods. Enables rapid response to quality issues, product recalls, and supplier problems. Critical for pharmaceutical chain of custody and medical device adverse event reporting.

Data Retention Policy: FDA requires electronic records retention for duration of device/drug on market plus additional years (typically 2 years minimum). Batch records, quality testing, and validation documentation face 20+ year retention in some cases.

Example from Nestle Purina:

Product data governance was critical for new product introduction. Before MDM implementation, 47 manufacturing plants had their own product specifications with inconsistent naming and conflicting formulations. We implemented Profisee MDM with Product Development owning formulations, Supply Chain owning supplier data, and Finance owning costing. Clear survivorship rules determined which source data “won” when conflicts existed. Product introduction time dropped from 12 weeks to 6 weeks through consistent specifications and automated approvals.

Government and Public Sector

Regulatory Drivers: FISMA, Privacy Act, FOIA, FedRAMP, state open data laws

Policy Emphasis:

Data Classification Policy: Government data has unique classifications (Unclassified, Controlled Unclassified Information, Confidential, Secret, Top Secret) with specific handling requirements for each level. CUI marking and handling based on NIST SP 800-171.

Data Privacy Policy: Privacy Act restrictions on PII for federal agencies. FOIA transparency requirements create tension with privacy protection. Policy must address appropriate balance and redaction procedures.

Data Retention Policy: Records management driven by NARA schedules specifying retention for different record types. Some records permanent (requiring preservation in National Archives), others temporary with specific destruction timelines.

Data Sharing Policy: Interagency data sharing agreements required for sharing across government agencies. Memoranda of Understanding (MOUs) specify permitted uses, security requirements, and responsibilities. Open data initiatives require thoughtful balancing of transparency and privacy.

Example from Department of Veterans Affairs:

Federal privacy requirements are extensive. Our Privacy Act compliance included System of Records Notices (SORNs) published in the Federal Register describing what PII we collect, why we collect it, how we use it, and with whom we share it. Privacy Impact Assessments (PIAs) were required for any system collecting PII. All employees handling PII received comprehensive training on Privacy Act requirements, and we ran annual compliance assessments and external audits.

Common Policy Implementation Challenges

Every governance program encounters obstacles. Here’s how to navigate the most common challenges.

Challenge 1: Policy Bureaucracy

Symptom:

- Policies so restrictive they block legitimate work

- Weeks to get data access

- Lengthy approval chains that delay decisions

- Workarounds becoming standard practice

Solution:

- Risk-Based Approach: Heavy process for high-risk activities, lightweight for low-risk. Public data needs minimal controls; Restricted data requires rigorous approval.

- Pre-Approved Patterns: Define common scenarios that can proceed with minimal review. “Analysts accessing customer data for standard reporting” is pre-approved; “Marketing sharing customer data with vendor” requires review.

- Time Limits: Build SLAs into policies. Access requests approved within 2 business days or automatically escalated. Quality issues remediated within defined timeframes based on severity.

- Automate Everything Possible: Manual approvals don’t scale. Automated workflows, automated quality checks, automated compliance verification.
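The risk-based routing, pre-approved patterns, and SLA escalation described above can be combined in a small workflow rule. The sketch below is an assumption-laden illustration: the role and purpose names are hypothetical, the business-day calculation ignores public holidays, and a real workflow tool would handle notifications and audit logging.

```python
from datetime import date, timedelta

# Hypothetical pre-approved (role, purpose) combinations.
PRE_APPROVED = {("analyst", "standard_reporting")}

def route_request(role: str, purpose: str, classification: str) -> str:
    """Light process for low-risk requests, owner review for everything else."""
    if classification == "Public":
        return "auto_approve"
    if classification != "Restricted" and (role, purpose) in PRE_APPROVED:
        return "auto_approve"
    return "data_owner_review"

def add_business_days(start: date, days: int) -> date:
    """Advance by `days` weekdays (Monday to Friday); holidays are not considered."""
    current = start
    while days > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:
            days -= 1
    return current

def should_escalate(submitted: date, today: date, sla_days: int = 2) -> bool:
    """True once a pending request has passed its SLA deadline."""
    return today > add_business_days(submitted, sla_days)

deadline = add_business_days(date(2025, 1, 3), 2)  # submitted on a Friday
```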

Example:

At Nestle Purina, the original access request process took 8-12 business days (submit request → manager approval → data owner approval → IT provisioning → security review). We redesigned it with pre-approved roles (analysts in defined departments automatically approved for standard datasets), an automated workflow (which eliminated manual handoffs), and SLAs (2 business days total or escalate). Average time dropped to 1.5 business days with higher compliance.

Challenge 2: Business Resistance

Symptom:

- “Governance slows us down”

- “We’ve always done it this way”

- Low policy compliance

- Shadow IT workarounds

Solution:

- Show the Value: Frame policies as enablers, not obstacles. Quality policies enable trusted analytics. Access policies enable self-service. Classification policies protect from breaches.

- Start with Pain Points: Implement policies solving actual business problems. If marketing complains about duplicate customer records, start with Master Data Policy. If finance struggles with report reconciliation, start with Data Quality Policy.

- Include Business in Design: Policies designed by IT alone fail. Business data owners must co-create policies addressing real business needs within reasonable controls.

- Celebrate Wins: When policies deliver value (faster reporting, fewer quality issues, successful audit), publicize it widely.

Example:

At Wells Fargo, business units initially resisted data quality policies as “IT overhead.” We reframed by showing cost of poor quality: $2.3 million annually in billing errors from bad customer addresses, weeks of analyst time reconciling conflicting reports, regulatory findings from incomplete data. Business leadership championed quality policies when they understood financial impact. We implemented quality metrics dashboards visible to executives. Within 6 months, billing error rate dropped 43% and reconciliation time dropped 65%.

Challenge 3: Unclear Accountability

Symptom:

- Nobody owns policy compliance

- Policies exist but nobody enforces them

- Responsibility diffused across too many people

- Quality issues and violations go unaddressed

Solution:

- Named Owners: Every policy must have a named owner (person, not department). Every data domain must have a named data owner. Every critical dataset must have a named data steward.

- RACI Matrix: Document who is Responsible (does the work), Accountable (ultimately answerable), Consulted (provides input), and Informed (kept updated) for each policy requirement.

- Performance Integration: Data ownership and stewardship become part of job descriptions and performance reviews. Accountability without consequences is hollow.

- Escalation Paths: Clear process for escalating unresolved issues to governance council or executives.
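A RACI matrix is easy to validate automatically once it is stored as data. This sketch assumes the common convention that each activity has exactly one Accountable party and at least one Responsible party; the activities and role names are hypothetical.

```python
def raci_problems(matrix):
    """matrix: {activity: {role: 'R' | 'A' | 'C' | 'I'}}. Returns {activity: [issues]}."""
    problems = {}
    for activity, assignments in matrix.items():
        codes = list(assignments.values())
        issues = []
        if codes.count("A") != 1:
            issues.append(f"expected exactly one Accountable, found {codes.count('A')}")
        if "R" not in codes:
            issues.append("no one is Responsible")
        if issues:
            problems[activity] = issues
    return problems

# Hypothetical matrix: the second activity has diffused accountability.
matrix = {
    "Monitor data quality": {
        "Data Owner": "A", "Data Steward": "R", "Governance Office": "I",
    },
    "Approve retention exceptions": {
        "Data Owner": "A", "Legal": "A", "Data Steward": "I",
    },
}
problems = raci_problems(matrix)
```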

Example:

At the VA, we initially had “Finance Department owns financial data,” which was too vague. We redefined it: the Chief Financial Officer is accountable for financial data quality. The Budget Director owns budget data specifically. A Budget Analyst serves as data steward for budget data, executing daily quality monitoring. A RACI matrix was documented for every data management activity. CFO performance objectives included data quality metrics. When quality dropped, the CFO was held accountable, and the authority to hold budget teams accountable flowed from there.

Challenge 4: Technology Limitations

Symptom:

- Manual policy compliance (spreadsheets, emails)

- No visibility into policy violations

- Can’t scale governance as data grows

- Compliance gaps discovered only during audits

Solution:

- Build Business Case: Quantify the cost of manual compliance and the risk of gaps. ROI from governance tools is typically 3-5x through efficiency gains and risk reduction.

- Start with High-Impact Tools: You don’t need everything simultaneously. Data catalog provides immediate value through discovery and documentation. Quality tools automate monitoring that’s impossible manually.

- SaaS Options: Modern governance platforms available as cloud SaaS reduce upfront investment and accelerate deployment. Collibra, Alation, Atlan, Microsoft Purview all offer SaaS options. For a detailed comparison, see our Collibra vs Alation guide.

- Automation Priorities: Focus on automating high-volume, repetitive activities: quality profiling and monitoring, access workflows, classification recommendation, lineage capture.

Example:

At Nestle Purina, we started with Profisee MDM to address an immediate pain point (product data chaos). Success with MDM built credibility for broader governance investment. Next we implemented Collibra for the enterprise data catalog and lineage. Finally we added Informatica for data quality. Each tool solved a specific business problem and demonstrated ROI before the next investment.

Challenge 5: Keeping Policies Current

Symptom:

- Policies written years ago, never updated

- Policies don’t reflect current technology (mention “data warehouse” but not “data lake”)

- Policies don’t address current regulations (written before GDPR)

- Disconnect between policies and practice

Solution:

- Formal Review Cycle: Calendar triggers for annual policy review. Governance office maintains policy review schedule and drives updates.

- Trigger-Based Review: Major regulatory change, significant technology adoption, or organizational change triggers policy review outside annual cycle.

- Version Control: Maintain policy history showing what changed and why. Communicate changes broadly to affected stakeholders.

- Living Documentation: Consider wiki or knowledge base format allowing continuous updates rather than formal document revisions. Balance structure with agility.

Example:

When GDPR took effect in 2018, our privacy policies written in 2015 were outdated. We implemented a structured review: the Privacy Policy is reviewed upon any major privacy regulation change (GDPR, CCPA, state laws). Quality and Classification Policies are reviewed annually. Technology-specific standards (cloud security, API governance) are reviewed quarterly given rapid cloud evolution. Policy review became a standing agenda item in quarterly governance council meetings.

Measuring Policy Effectiveness

You can’t improve what you don’t measure. Track these metrics to demonstrate policy value and identify improvement opportunities.

Coverage Metrics

Measure: How much of your data is governed by policies?

- % data assets with assigned classification

- % datasets with identified data owner

- % critical data elements with documented lineage

- % access following defined approval process

- % data sharing with executed agreements

Target: 90%+ for critical data within 12 months, 80%+ for all data within 24 months
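Coverage metrics like these can usually be computed directly from a data catalog export. The sketch below assumes each asset is a dictionary with hypothetical field names (`critical`, `classification`, `owner`); the inventory is invented sample data.

```python
def coverage_pct(assets, field):
    """Percentage of assets where `field` is populated (non-empty)."""
    if not assets:
        return 0.0
    covered = sum(1 for asset in assets if asset.get(field))
    return 100.0 * covered / len(assets)

# Hypothetical catalog export.
inventory = [
    {"name": "customer_master", "critical": True, "classification": "Restricted", "owner": "j.doe"},
    {"name": "gl_transactions", "critical": True, "classification": "Restricted", "owner": None},
    {"name": "web_clickstream", "critical": False, "classification": None, "owner": None},
    {"name": "store_locations", "critical": False, "classification": "Public", "owner": "a.lee"},
]
critical = [asset for asset in inventory if asset["critical"]]

critical_classified = coverage_pct(critical, "classification")
critical_owned = coverage_pct(critical, "owner")
all_classified = coverage_pct(inventory, "classification")
meets_critical_target = critical_classified >= 90.0  # 90%+ target for critical data
```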

Compliance Metrics

Measure: Are people following the policies?

- % data quality checks executed on schedule

- % access requests approved within SLA

- % quarterly access recertifications completed

- % policy exceptions formally documented and approved

- % data retained per retention schedules

Target: 95%+ compliance with critical policy requirements

Efficiency Metrics

Measure: Do policies improve operational efficiency?

- Time to classify new dataset (target: <1 day)

- Time to approve data access request (target: <2 days)

- Time to resolve data quality issue by severity (P1: <4 hours, P2: <1 day, P3: <2 days)

- Analyst time spent searching for data (should decrease 30-50%)

- Report preparation time (should decrease 20-40% through quality improvements)
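The severity-based resolution targets above translate into a simple attainment report. This is a sketch with hypothetical issue data; it assumes one "day" means 24 elapsed hours rather than a business day.

```python
from datetime import timedelta

# Targets from the list above: P1 < 4 hours, P2 < 1 day, P3 < 2 days.
RESOLUTION_TARGETS = {
    "P1": timedelta(hours=4),
    "P2": timedelta(days=1),
    "P3": timedelta(days=2),
}

def met_target(severity, time_to_resolve):
    return time_to_resolve < RESOLUTION_TARGETS[severity]

def attainment_by_severity(issues):
    """issues: iterable of (severity, time_to_resolve). Returns {severity: % within target}."""
    met, total = {}, {}
    for severity, elapsed in issues:
        total[severity] = total.get(severity, 0) + 1
        met[severity] = met.get(severity, 0) + (1 if met_target(severity, elapsed) else 0)
    return {severity: 100.0 * met[severity] / total[severity] for severity in total}

# Hypothetical resolved issues.
resolved = [
    ("P1", timedelta(hours=3)),
    ("P1", timedelta(hours=6)),
    ("P2", timedelta(hours=20)),
    ("P3", timedelta(days=3)),
]
attainment = attainment_by_severity(resolved)
```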

Impact Metrics

Measure: Business outcomes from policies

- Data quality scores: Should improve 20-40% within 12 months

- Security incidents: Unauthorized access should decrease 50%+ within 6 months

- Compliance audit findings: Should decrease 60%+ within 12 months

- Operational costs: Data quality improvements should reduce rework costs 30-50%

- Decision speed: Trusted data should accelerate decision-making 15-25%

Example Metrics Dashboard:

At Wells Fargo, our quarterly governance metrics dashboard showed:

Coverage Metrics (as of Q4 2025)

- Data Classification: 94% of datasets classified

- Data Ownership: 89% of critical datasets with assigned owners

- Data Lineage: 76% of regulatory reporting data with documented lineage

- Access Management: 98% of access requests following approval workflow

Compliance Metrics (Q4 2025)

- Quality Monitoring: 97% of scheduled quality checks executed

- Access Approval SLA: 94% of requests approved within 2 days

- Access Recertification: 91% of quarterly reviews completed on time

- Retention Compliance: 88% of data disposed per schedule

Efficiency Metrics (Q4 2025)

- Average Access Approval Time: 1.3 days (down from 5.2 days pre-policy)

- P1 Quality Issue Resolution: 3.2 hours average (target <4 hours)

- Time to Classify Dataset: 0.8 days average (target <1 day)

Business Impact (Q4 2025 vs. Q4 2024)

- Customer Data Quality Score: 91% (up from 73%)

- Unauthorized Access Incidents: 3 (down from 14)

- Regulatory Audit Findings: 2 (down from 11)

- Report Reconciliation Time: 4 hours (down from 18 hours)

These metrics demonstrated clear ROI and maintained executive support for continued governance investment.

Policy Templates and Resources

Creating policies from scratch is challenging. Use these templates and resources as starting points.

Publicly Available Policy Examples

Several organizations have published their data governance policies for public reference:

State of Oklahoma Office of Management and Enterprise Services

- Comprehensive policy coverage including roles, responsibilities, standards

- Strong focus on governance structure and decision rights

- Available: Google “Oklahoma data governance policy”

New Hampshire Department of Education

- Detailed job duties for data stewards and owners

- Clear policy outcomes and success metrics

- Available: Google “New Hampshire DOE data governance policy”

University of New South Wales Sydney

- Multi-policy approach separating standard governance from research data

- Good example of tailoring policies to different data contexts

- Available: Google “UNSW data governance policy”

East Carolina University

- Concise yet comprehensive (scope, roles, accountability in 8 pages)

- Excellent for organizations wanting brief, focused policy

- Available: Google “ECU data governance policy”

Policy Template Structure

Use this structure for any governance policy:

[POLICY NAME]

Version X.X | Effective Date | Next Review Date

1. PURPOSE AND SCOPE

What this policy covers and why it exists

2. DEFINITIONS

Key terms used in this policy

3. POLICY STATEMENT

Core requirements (shall/must language)

4. ROLES AND RESPONSIBILITIES

Who owns, enforces, and complies with this policy

5. STANDARDS AND REQUIREMENTS

Specific technical and procedural standards

6. PROCEDURES

How to comply (or reference separate procedures document)

7. COMPLIANCE AND ENFORCEMENT

How compliance is measured and enforced

8. EXCEPTIONS

Exception request and approval process

9. RELATED POLICIES AND REGULATIONS

Cross-references to other policies and regulations

10. REVISION HISTORY

Version control and change log

Building Your Policy Library

Start Small, Build Systematically

Don’t create all 10 policies simultaneously:

Phase 1 (Months 1-3): Foundation Policies

- Data Classification Policy

- Data Quality Management Policy

- Data Access and Security Policy

Phase 2 (Months 4-6): Compliance Policies

- Data Privacy and Consent Policy

- Data Retention and Disposal Policy

- Data Incident Management Policy

Phase 3 (Months 7-12): Advanced Policies

- Master Data Management Policy

- Data Lineage and Documentation Policy

- Data Sharing and Exchange Policy

- AI and Model Governance Policy (if applicable)

Technology Enablement for Policy Enforcement

Manual policy compliance doesn’t scale. Modern governance platforms automate policy enforcement and monitoring.

Data Catalog Platforms

Leading Platforms: Collibra, Alation, Atlan, Microsoft Purview

Policy Support:

- Classification: Automated recommendation of classifications based on data patterns and content

- Ownership: Documented data owners and stewards with contact information

- Policies: Policy documents attached to data assets showing which policies apply

- Lineage: Visual lineage graphs showing data flow and transformation

- Quality: Quality metrics and trends displayed with data assets

- Workflows: Access request workflows, classification approval, policy exception requests

Example from VA:

We implemented Collibra for enterprise data catalog. Policies embedded directly into catalog:

- Each dataset showed assigned classification, owner, steward, applicable policies

- Quality metrics displayed showing compliance with quality policy thresholds

- Lineage graphs documented per lineage policy requirements

- Access request workflow integrated directly into catalog

- Policy violations flagged automatically with assigned remediation tasks

Data Quality Platforms

Leading Platforms: Informatica Data Quality, Talend, Ataccama, Precisely

Policy Support:

- Automated Profiling: Discovery of data patterns, quality issues, outliers

- Rule Engine: Configure quality rules based on policy requirements

- Monitoring: Continuous monitoring with automated alerts when thresholds violated

- Remediation: Workflows for investigating and remediating quality issues

- Dashboards: Executive dashboards showing quality trends and compliance
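At its core, a quality rule engine compares a measured score with a policy threshold. The following sketch shows a completeness rule only; the column names, thresholds, and sample rows are hypothetical, and commercial platforms cover many more rule types (validity, uniqueness, consistency).

```python
def completeness(rows, column):
    """Share of rows where `column` is present and non-empty (0.0 to 1.0)."""
    if not rows:
        return 0.0
    filled = sum(1 for row in rows if row.get(column) not in (None, ""))
    return filled / len(rows)

def find_violations(rows, minimums):
    """minimums: {column: minimum completeness}. Returns rules that failed."""
    violations = []
    for column, minimum in minimums.items():
        score = completeness(rows, column)
        if score < minimum:
            violations.append({"column": column, "score": score, "minimum": minimum})
    return violations

# Hypothetical customer records and policy thresholds.
customers = [
    {"id": 1, "email": "a@example.com", "postal_code": "10001"},
    {"id": 2, "email": "", "postal_code": "94105"},
    {"id": 3, "email": "c@example.com", "postal_code": None},
    {"id": 4, "email": "d@example.com", "postal_code": "60601"},
]
violations = find_violations(customers, {"email": 0.95, "postal_code": 0.70})
```

Each entry in `violations` carries enough context (column, score, threshold) to populate a remediation ticket for the responsible data steward.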

Example from Wells Fargo:

Informatica Data Quality automated our quality monitoring:

- Daily profiling scanned 2,000+ tables for completeness, accuracy, consistency

- Quality rules configured per quality policy standards

- Dashboards showed red/yellow/green status per domain

- Quality scores below threshold auto-created Jira tickets assigned to data stewards

- Monthly trend reports showed improvement from 73% quality to 94% over 18 months

Master Data Management Platforms

Leading Platforms: Profisee, Informatica MDM, SAP MDM, Stibo STEP

Policy Support:

- Golden Records: Single source of truth per MDM policy requirements

- Survivorship Rules: Configured rules determining which source data “wins”

- Match and Merge: Automated duplicate detection and steward-reviewed merging

- Workflows: Change approval workflows per MDM governance requirements

- Audit Trail: Complete history of changes for compliance and lineage

Example from Nestle Purina:

Profisee MDM enforced our product master data policy:

- Survivorship rules: Finance-approved costing, most recent packaging specs, most complete formulations

- Automated matching flagged potential duplicates using configured similarity thresholds

- Steward review required before merge (prevented false matches)

- Change workflow: specification changes required cross-functional approval before MDM update

- Audit trail maintained complete history for regulatory compliance
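Survivorship rules like those above can be expressed as one small function per attribute. This sketch is not how Profisee or any other MDM product implements survivorship; it is a hypothetical illustration with two rule types (prefer a trusted source, otherwise take the most recent value) and invented records.

```python
from datetime import date

def most_recent(candidates):
    """Pick the record with the latest update date."""
    return max(candidates, key=lambda record: record["updated"])

def prefer_source(name):
    """Build a rule that trusts `name` when it has a value, else falls back to recency."""
    def pick(candidates):
        trusted = [r for r in candidates if r["source"] == name]
        return most_recent(trusted or candidates)
    return pick

def build_golden_record(records, rules):
    """Apply one survivorship rule per field; fields with no values are omitted."""
    golden = {}
    for field, rule in rules.items():
        candidates = [r for r in records if r.get(field) not in (None, "")]
        if candidates:
            golden[field] = rule(candidates)[field]
    return golden

# Hypothetical conflicting source records for one product.
records = [
    {"source": "finance", "updated": date(2024, 1, 10), "unit_cost": 1.20, "packaging": "bag-5kg"},
    {"source": "plant_a", "updated": date(2024, 3, 2), "unit_cost": 1.35, "packaging": "bag-5kg-v2"},
]
rules = {"unit_cost": prefer_source("finance"), "packaging": most_recent}
golden = build_golden_record(records, rules)
```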

Identity and Access Management

Leading Platforms: Okta, Microsoft Entra ID (formerly Azure AD), SailPoint, Saviynt

Policy Support:

- RBAC/ABAC: Automated role-based and attribute-based access control

- Access Request Workflows: Integrated workflows with business owner approval

- Recertification: Automated quarterly access reviews

- Provisioning/Deprovisioning: Automated access grants and revocations

- Audit Logging: Complete access audit trail for compliance

Example from VA:

SailPoint identity governance automated access management:

- Role-based access: Analysts automatically received standard dataset access

- Sensitive data: Required data owner approval via automated workflow

- Quarterly recertification: Automated emails to data owners listing current access

- Auto-revocation: Access automatically revoked 90 days after last use

- SOC audit: Complete access audit trail reduced audit prep from weeks to days
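The 90-day auto-revocation rule is simple enough to express directly. The sketch below assumes a hypothetical list of access grants with a `last_used` date; it only selects the grants to revoke and does not call any identity platform's API.

```python
from datetime import date, timedelta

INACTIVITY_LIMIT = timedelta(days=90)  # from the policy example above


def grants_to_revoke(grants: list, today: date) -> list:
    """Return grants whose last use is more than 90 days before today."""
    return [g for g in grants if today - g["last_used"] > INACTIVITY_LIMIT]


# Made-up grants for illustration.
grants = [
    {"user": "analyst_a", "dataset": "claims_summary",
     "last_used": date(2025, 1, 5)},
    {"user": "analyst_b", "dataset": "claims_summary",
     "last_used": date(2025, 5, 20)},
]
stale = grants_to_revoke(grants, today=date(2025, 6, 1))
print([g["user"] for g in stale])
```

Passing `today` as a parameter rather than reading the system clock keeps the rule easy to test and easy to audit.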

The Future of Data Governance Policies

Data governance policies must evolve as technology and regulations change. Here are emerging trends shaping policy frameworks in 2026 and beyond.

AI and LLM Governance

Challenge: Large language models (LLMs) and generative AI introduce new governance challenges:

- Training data provenance and quality

- Model bias and fairness

- Explainability of AI-generated outputs

- Intellectual property and copyright considerations

- Hallucination and accuracy concerns

Policy Evolution:

Organizations are extending governance policies to cover AI:

- AI Development Policy: Standards for model development including training data documentation, bias testing, model cards, and approval workflows

- AI Deployment Policy: Production deployment requirements including monitoring, drift detection, and human oversight

- AI Ethics Policy: Principles for responsible AI including fairness, transparency, accountability, and privacy protection

- Synthetic Data Policy: Standards for AI-generated data including quality assessment, bias testing, and appropriate use cases

Example:

Financial institutions deploying credit models now require fairness assessments showing disparate impact ratios of at least 0.80 (the "four-fifths rule") across protected classes. Models that fail the fairness threshold require documented mitigation or business justification before deployment approval.
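For readers unfamiliar with the metric, a disparate impact ratio is the favorable-outcome rate of one group divided by the rate of a reference group. The sketch below shows the arithmetic against the 0.80 threshold from the example; the approval counts are invented, and a real fairness assessment would cover more groups and more metrics than this.

```python
FAIRNESS_THRESHOLD = 0.80  # threshold from the example above


def approval_rate(outcomes: list) -> float:
    """Fraction of applicants approved (True counts as approved)."""
    return sum(outcomes) / len(outcomes)


def disparate_impact_ratio(protected: list, reference: list) -> float:
    """Protected-group approval rate divided by reference-group rate."""
    reference_rate = approval_rate(reference)
    if reference_rate == 0:
        raise ValueError("reference group has no approvals")
    return approval_rate(protected) / reference_rate


def passes_fairness(ratio: float) -> bool:
    return ratio >= FAIRNESS_THRESHOLD


# Made-up outcomes: 45 of 100 approved vs. 60 of 100 approved.
protected_group = [True] * 45 + [False] * 55
reference_group = [True] * 60 + [False] * 40

ratio = disparate_impact_ratio(protected_group, reference_group)
print(f"{ratio:.2f}", passes_fairness(ratio))
```

With these invented numbers the ratio is 0.45 / 0.60 = 0.75, so the model would need documented mitigation or business justification before approval.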

Privacy-Enhancing Technologies

Challenge: GDPR, CCPA, and proliferating privacy laws create tension between data utility and privacy protection.

Policy Evolution:

Organizations are updating privacy policies to incorporate privacy-enhancing technologies (PETs):

- Differential Privacy: Adding statistical noise to protect individual privacy while enabling analytics

- Federated Learning: Training models on distributed data without centralizing sensitive data

- Homomorphic Encryption: Computing on encrypted data without decrypting

- Secure Multi-Party Computation: Multiple parties computing on combined data without revealing individual data

Example:

Healthcare organizations sharing data for research are implementing differential privacy policies. Data released for research uses epsilon values below 1.0, which mathematically limits how much any single patient's record can affect the released results and makes re-identification much harder, while still enabling population-level insights for medical research.
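To show what an epsilon value controls, here is a minimal sketch of the Laplace mechanism applied to a single count. It assumes a counting query (sensitivity 1, so the noise scale is 1/epsilon) and uses the fact that the difference of two exponential draws is Laplace-distributed. The function name and the sample count are illustrative, and a production release would use a vetted differential privacy library rather than hand-rolled noise.

```python
import random


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with Laplace noise of scale 1/epsilon.

    Assumes each patient changes the count by at most 1 (sensitivity 1).
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    # Difference of two Exponential(rate=epsilon) draws is Laplace(0, 1/epsilon).
    noise = rng.expovariate(epsilon) - rng.expovariate(epsilon)
    return true_count + noise


rng = random.Random(42)  # seeded only to make the demo repeatable
print(private_count(120, epsilon=0.5, rng=rng))
```

Smaller epsilon values mean more noise and stronger protection. Each released statistic also spends part of an overall privacy budget, which is why a policy typically caps the total epsilon per dataset rather than per query.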

Data Mesh and Federated Governance

Challenge: Centralized data platforms and centralized governance don’t scale in large, distributed organizations.

Policy Evolution:

Organizations are shifting to federated governance models:

- Domain Ownership: Business domains own their data as products with clear SLAs

- Federated Policies: Central governance sets principles and standards; domains implement within their context

- Data Contracts: Formal agreements between data producers and consumers on quality, availability, schema

- Self-Service Infrastructure: Domains manage their own data platforms within enterprise guardrails

Example:

Large enterprises are implementing “data mesh” architectures where domains (Marketing, Sales, Finance) own their data as products. Central governance defines standards (data must be classified, quality metrics must be published, lineage must be documented), but domains implement within their own technology choices and processes.
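One way to picture the split between central standards and domain implementation is as two small checks: one for the metadata every data product must publish, and one for the contract a domain agrees with its consumers. The sketch below is an assumption-laden illustration; the `DataContract` class, its fields, and the sample product are hypothetical and do not follow any specific data contract specification.

```python
from dataclasses import dataclass

# Central standards every domain must meet (taken from the example above).
REQUIRED_METADATA = ("classification", "quality_metrics", "lineage")


@dataclass(frozen=True)
class DataContract:
    """Hypothetical producer/consumer agreement for one data product."""
    product: str
    schema: dict            # column name -> expected Python type
    min_completeness: float


def standards_gaps(metadata: dict) -> list:
    """List the central metadata requirements a data product has not met."""
    return [key for key in REQUIRED_METADATA if not metadata.get(key)]


def contract_violations(contract: DataContract, rows: list) -> list:
    """Check rows against the contract's schema and completeness target."""
    violations = []
    filled = 0
    for i, row in enumerate(rows):
        for column, expected in contract.schema.items():
            value = row.get(column)
            if value is None:
                continue  # counted as missing in the completeness check
            filled += 1
            if not isinstance(value, expected):
                violations.append(
                    f"row {i}: {column} should be {expected.__name__}"
                )
    cells = len(rows) * len(contract.schema)
    if cells and filled / cells < contract.min_completeness:
        violations.append(
            f"completeness {filled / cells:.2f} is below "
            f"{contract.min_completeness:.2f}"
        )
    return violations


# Made-up data product owned by a Sales domain.
contract = DataContract(
    product="sales.orders",
    schema={"order_id": int, "amount": float},
    min_completeness=0.9,
)
rows = [
    {"order_id": 1, "amount": 19.99},
    {"order_id": "2", "amount": None},
]
metadata = {"classification": "internal",
            "quality_metrics": {"completeness": 0.75},
            "lineage": None}

print(standards_gaps(metadata))
print(contract_violations(contract, rows))
```

The central team owns `REQUIRED_METADATA`; each domain owns its own `DataContract` values. That division is the essence of federated governance.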

Real-Time Governance

Challenge: Batch-oriented governance processes don’t work for real-time data and streaming analytics.

Policy Evolution:

Policies are evolving to govern streaming data:

- Stream Quality Monitoring: Real-time quality checks on streaming data with automated alerts

- Event-Based Access Control: Access decisions made at event ingestion time based on event attributes

- Real-Time Policy Enforcement: Policies enforced as data flows, not after data lands

- Stream Lineage: Tracking data provenance in real-time streaming architectures

Example:

IoT deployments with sensor data streaming from thousands of devices require real-time quality checks. Sensors reporting physically impossible values (a temperature above 200°F in a climate-controlled warehouse) trigger immediate alerts and automated quarantine of suspect data before it contaminates analytics.
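The in-flight check described here can be sketched as a generator that sits between ingestion and analytics. The 200°F upper bound comes from the example; the lower bound, the reading format, and the `alert` callback are assumptions for illustration, and a real deployment would run equivalent logic inside its streaming platform.

```python
from typing import Callable, Iterable, Iterator

MAX_PLAUSIBLE_F = 200.0   # upper bound from the warehouse example above
MIN_PLAUSIBLE_F = -40.0   # hypothetical lower bound for illustration


def enforce_range(
    readings: Iterable[dict],
    quarantine: list,
    alert: Callable[[dict], None] = print,
) -> Iterator[dict]:
    """Yield plausible readings; quarantine and alert on the rest."""
    for reading in readings:
        if MIN_PLAUSIBLE_F <= reading["temp_f"] <= MAX_PLAUSIBLE_F:
            yield reading
        else:
            quarantine.append(reading)
            alert(reading)


# Made-up sensor events.
stream = [
    {"sensor": "s1", "temp_f": 68.5},
    {"sensor": "s2", "temp_f": 412.0},
    {"sensor": "s3", "temp_f": 70.1},
]
quarantined = []
clean = list(enforce_range(stream, quarantined))
print(len(clean), len(quarantined))
```

Because the check runs per event, suspect readings never reach downstream consumers, which is the difference between enforcing policy as data flows and cleaning up after it lands.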

Conclusion: Policies as the Foundation of Governance Success

Data governance policies are the practical expression of your governance framework—the operating instructions that transform governance from concept to reality.

Organizations that master policy implementation turn data into competitive advantage, ensure regulatory compliance, mitigate risks, and enable innovation. Those that neglect governance policies face mounting costs from poor quality, regulatory penalties, security breaches, and teams that don’t trust their own data.

The path to effective governance policies requires sustained commitment, but the journey is achievable with the right approach:

Start Small: Implement 2-3 high-priority policies addressing your biggest pain points, not all 10 simultaneously

Build Buy-In: Include business stakeholders in policy design. Policies created by IT alone fail.

Show Value: Frame policies as enablers solving business problems, not compliance overhead

Enable Compliance: Make it easier to follow policies than violate them through automation and integration

Measure Progress: Track coverage, compliance, efficiency, and impact metrics showing policy value

Evolve Continuously: Review and update policies as regulations, technologies, and business needs change

Your data is one of your most valuable assets. Govern it accordingly, with clear policies.

Frequently Asked Questions About Data Governance Policies

What is a data governance policy?

A data governance policy is a formal framework of principles, roles, processes, standards, and controls that ensures data is accurate, secure, compliant, and usable throughout its lifecycle. It defines specific rules governing how data is managed, who is accountable, and what happens when violations occur.

What’s the difference between data governance policies and data governance frameworks?

A data governance framework is the overall structure defining how your organization approaches governance (roles, committees, processes). Policies are the specific rules operating within that framework. The framework defines what you’ll govern; policies define how you’ll govern it with specific requirements and standards.

How many data governance policies should we have?

Most organizations need 7-10 core policies covering classification, quality, access, retention, privacy, master data, lineage, sharing, incident response, and AI governance. Start with 2-3 addressing highest-priority risks and pain points, then expand over 12-18 months rather than creating all policies simultaneously.

Who should own data governance policies?

Each policy should have a named owner, typically from the business rather than IT. The Data Governance Office facilitates policy development, but business data owners must own policies for their domains. For example, the Chief Privacy Officer owns the Data Privacy Policy, and the CFO owns policies governing financial data.

How do we enforce data governance policies?

Effective enforcement combines technology automation, clear accountability, measurement, and consequences. Automate policy enforcement where possible (automated quality checks, automated access controls). Measure compliance through metrics and audits. Hold data owners accountable for compliance in their domains. Address violations through escalation processes with defined consequences.

How often should policies be reviewed and updated?

Policies should be reviewed at least annually, with trigger-based reviews when regulations change, technologies evolve, or business needs shift. Technology-specific policies (cloud security, API governance) may require quarterly reviews given rapid evolution. Privacy policies should be reviewed whenever major privacy regulations change.

Can data governance policies work in agile/fast-moving environments?

Yes, with the right approach. Use risk-based policies (heavy controls for high-risk data, lightweight controls for low-risk data). Define pre-approved patterns for common scenarios. Automate policy enforcement so compliance happens without manual gates. Embed governance into workflows rather than running it as a separate compliance exercise. Modern “governance-as-code” approaches enable agile development with automated policy checks.

What’s the biggest mistake organizations make with data governance policies?

Creating comprehensive, perfect policies that nobody follows. Policies that are too complex, too restrictive, or disconnected from business reality fail. Effective policies start small, solve real business problems, include business stakeholders in design, enable compliance through automation, and evolve based on feedback rather than attempting perfection upfront.

How do we get buy-in for data governance policies from business stakeholders?

Frame policies as enablers solving business problems, not compliance overhead. Quantify the cost of the current state (data quality issues, security incidents, audit findings). Show how policies reduce those costs. Include business stakeholders in policy design. Start with policies addressing their pain points. Demonstrate quick wins. Make compliance easier than violation through automation.

Where can I find data governance policy templates?

Several organizations publish their policies publicly, including the State of Oklahoma Office of Management and Enterprise Services, the New Hampshire Department of Education, the University of New South Wales Sydney, and East Carolina University. Search for “[organization name] data governance policy” to find examples. Adapt templates to your specific industry, regulations, and business needs rather than using them verbatim.

About The Data Governor

The Data Governor provides expert guidance on data governance, master data management, and data quality from a practitioner with extensive experience implementing governance programs across banking (Wells Fargo), government (Department of Veterans Affairs), and manufacturing (Nestle Purina) sectors.

Specializing in Collibra, Profisee, and Azure data platforms, we help organizations transform data chaos into strategic advantage through practical, implementable governance policies.