Photo by Lara Jameson on <a href="https://www.pexels.com/photo/europe-seen-on-a-globe-8828640/" rel="nofollow">Pexels.com</a>

EU AI Act data governance encompasses the policies, processes, and controls required to ensure AI systems meet European Union regulatory standards for training data quality, model documentation, bias mitigation, and transparency. Organizations deploying AI systems in the EU must implement comprehensive data governance before the August 2, 2026 enforcement deadline.

The clock is ticking. Organizations have less than five months to ensure their AI systems comply with the most comprehensive artificial intelligence regulation ever enacted. The EU AI Act, which takes full effect on August 2, 2026, fundamentally changes how organizations must govern data used to train, validate, and operate AI systems.

This isn’t just another compliance obligation. The AI Act introduces fines up to €35 million or 7% of global annual revenue for serious violations. More importantly, it mandates data governance practices that many organizations haven’t implemented — detailed training data documentation, complete model lineage tracking, systematic bias detection, and transparent decision-making trails.

I’ve built data governance programs at Wells Fargo, the Department of Veterans Affairs, and Nestle Purina — three organizations where regulatory compliance wasn’t optional. Financial services compliance taught me that regulations with real teeth force organizations to actually implement controls rather than create compliance theater. Government compliance taught me that auditors want evidence, not promises. Manufacturing compliance taught me that good governance enables innovation rather than blocking it.

The EU AI Act requires all three perspectives: financial-grade rigor, government-grade documentation, and manufacturing-grade process control. This guide translates AI Act requirements into actionable data governance controls that compliance teams and data governance offices can implement before the August deadline.

You’ll learn what the AI Act actually requires from data governance teams, how to classify your AI systems by risk level, what documentation auditors will demand, how existing governance capabilities help versus what’s genuinely new, and how to implement compliance controls in the time remaining.

- What Is the EU AI Act

- AI Act Risk Classification Framework

- Data Governance Requirements for High-Risk AI Systems

- Governance Controls Required by August 2, 2026

- Control 1: AI System Inventory and Risk Classification

- Control 2: Training Data Quality Framework

- Control 3: Bias Detection and Mitigation Process

- Control 4: Model Lineage and Documentation System

- Control 5: Operational Logging and Monitoring

- Control 6: Governance Policies and Procedures

- Control 7: Human Oversight Mechanisms

- Leveraging Existing Data Governance Capabilities

- What's Genuinely New for AI Compliance

- Implementation Timeline: April to August 2026

- Common Implementation Challenges

- Vendor AI Systems and Third-Party Compliance

- Industry-Specific Considerations

- Preparing for Regulatory Audits

- Long-Term AI Governance Beyond Compliance

- Frequently Asked Questions

- Summary

What Is the EU AI Act

The EU Artificial Intelligence Act is the world’s first comprehensive legal framework for artificial intelligence, establishing harmonized rules for the development, deployment, and use of AI systems across the European Union. The regulation takes a risk-based approach, imposing stricter requirements on AI systems that pose higher risks to fundamental rights and safety.

The AI Act was formally adopted by the European Parliament in March 2024 and enters into force in stages. Most provisions, including those governing high-risk AI systems, become enforceable on August 2, 2026. Organizations have used the grace period to implement necessary controls. That grace period ends in four months.

The regulation applies to providers placing AI systems on the EU market, deployers using AI systems within the EU, and providers and deployers of AI systems located outside the EU where the output produced by the AI system is used in the EU. If your AI system affects people in Europe, the AI Act applies regardless of where your organization is headquartered.

From a data governance perspective, the AI Act is fundamentally about demonstrating that AI systems make decisions based on appropriate data, using documented processes, with traceable outcomes. Organizations must prove that training data was suitable, that models behave as intended, that bias was identified and mitigated, and that decisions can be explained.

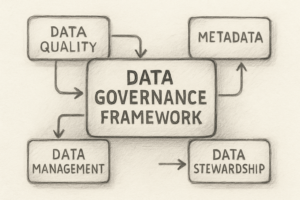

These requirements map directly to data governance capabilities: data quality, metadata management, lineage tracking, access controls, and documentation. Organizations with mature data governance programs have a head start. Those without such programs face implementing governance and AI compliance simultaneously.

The regulation distinguishes between prohibited practices (AI systems that are banned outright), high-risk systems (requiring strict compliance), limited-risk systems (requiring transparency), and minimal-risk systems (no specific obligations). Data governance requirements scale with risk classification.

AI Act Risk Classification Framework

Understanding how the AI Act classifies AI systems is essential because data governance requirements differ dramatically by risk level. The regulation uses a four-tier classification that determines compliance obligations.

Prohibited AI Practices

Certain AI applications are banned entirely regardless of safeguards. These include systems deploying subliminal techniques to manipulate behavior, exploiting vulnerabilities of specific groups, social scoring by public authorities for general purposes, and real-time remote biometric identification in publicly accessible spaces for law enforcement (with narrow exceptions).

From a data governance standpoint, prohibited systems should not be developed. Any training data, models, or infrastructure supporting such systems must be decommissioned. Organizations should audit existing AI projects to confirm none fall into prohibited categories.

High-Risk AI Systems

High-risk systems face the most stringent requirements. The AI Act defines high-risk systems in two categories: AI systems used as safety components of products covered by EU harmonized legislation (medical devices, aviation, automotive), and AI systems in specific domains listed in Annex III of the regulation.

Annex III domains include biometric identification and categorization, management and operation of critical infrastructure, education and vocational training (determining access or assignment), employment and worker management (recruitment, promotion, termination), access to essential private and public services (creditworthiness assessment, emergency response prioritization), law enforcement (evaluating reliability of evidence, assessing recidivism risk), migration and border control (examining applications, detecting document fraud), and administration of justice (assisting judicial research, influencing court decisions).

High-risk AI systems must comply with extensive requirements including risk management systems, data governance and management practices, technical documentation, record-keeping, transparency and provision of information to deployers, human oversight, and accuracy, robustness and cybersecurity measures.

Data governance requirements for high-risk systems are comprehensive. Organizations must document training data sources and provenance, implement quality management for training and validation data, detect and mitigate bias in datasets, maintain complete lineage from data through model to decision, ensure data representativeness for intended use, implement validation data strategies, and create audit trails for all data processing.

Most enterprise AI applications in regulated industries fall into high-risk categories. If your AI system makes decisions about people (hiring, lending, resource allocation) or operates in regulated domains (healthcare, finance, government), assume high-risk classification until proven otherwise.

Limited-Risk AI Systems

Limited-risk systems include AI that interacts with humans (chatbots, virtual assistants) or generates synthetic content (deepfakes, AI-generated images and text). These systems face transparency obligations rather than comprehensive compliance requirements.

Users must be informed they are interacting with AI. AI-generated content must be clearly labeled as artificially generated. Deepfakes and manipulated content must be marked as such.

Data governance requirements for limited-risk systems focus on content provenance tracking. Organizations must be able to identify which content was AI-generated, when it was created, what model produced it, and what training data influenced outputs. This requires metadata management and content labeling infrastructure.

While compliance burden is lighter than high-risk systems, transparency requirements still demand governance controls. Organizations cannot simply deploy chatbots or content generation without tracking systems.

Minimal-Risk AI Systems

The majority of AI applications fall into minimal-risk categories: spam filters, AI-powered search engines, recommendation systems for entertainment, inventory management optimization, and similar applications that don’t significantly impact fundamental rights.

Minimal-risk systems have no specific AI Act obligations beyond general data protection requirements (GDPR). However, organizations should still apply basic data governance to these systems for quality and operational reasons.

Even minimal-risk AI benefits from training data documentation, model lineage, and quality monitoring. The difference is that AI Act enforcement doesn’t mandate these practices for minimal-risk systems.

Organizations should formally classify each AI system by risk level and document that classification. The classification determines compliance requirements, implementation priority, and resource allocation. Misclassifying high-risk systems as minimal-risk creates enforcement exposure.

Data Governance Requirements for High-Risk AI Systems

High-risk AI systems face the most demanding data governance requirements under the AI Act. These requirements operationalize what “appropriate data governance” means in practice.

Training Data Quality Requirements

Article 10 of the AI Act mandates that training, validation, and testing datasets must be subject to appropriate data governance and management practices. Data must be relevant, representative, free of errors, and complete for the intended purpose.

Relevant means training data actually relates to the problem the AI system solves. A credit risk model trained on employment data from a single industry isn’t relevant for general lending. A hiring algorithm trained on historical hiring patterns from biased processes isn’t relevant for fair recruiting.

Representative means training data covers the full diversity of scenarios the AI system will encounter in production. If an AI system will make decisions about people across age ranges, genders, ethnicities, and geographies, training data must represent all those populations proportionally. Underrepresented groups in training data lead to poor model performance for those groups in production.

Free of errors means data quality standards apply to training datasets just as they do to operational data. Incorrect labels, missing values, duplicate records, and inconsistent formats corrupt model training. Organizations must implement data quality validation for AI training data with the same rigor as transaction processing data.

Complete means datasets contain sufficient information for the AI system to make sound decisions. Incomplete data leads to models that fill gaps with incorrect assumptions. A fraud detection model trained only on confirmed fraud cases without corresponding legitimate transaction data cannot distinguish patterns effectively.

Organizations must establish data quality thresholds for training data: minimum completeness percentages, maximum error rates, required representativeness across demographic dimensions, and acceptable staleness/timeliness standards. These thresholds must be documented and validated before model training begins.

Data quality for AI is not a one-time assessment. As models are retrained with new data, quality validation must repeat. Organizations need automated data quality pipelines that validate every training dataset before it flows to model development.

Training Data Documentation Requirements

The AI Act requires detailed documentation of training data characteristics, provenance, and limitations. This documentation must be sufficient for auditors to assess whether training data was appropriate for the AI system’s intended purpose.

Required documentation includes data sources and acquisition methods (where did the data come from, how was it collected, what sampling methods were used), data preprocessing and transformation steps (how was raw data cleaned, normalized, encoded, or augmented), data labeling procedures and quality controls (who labeled the data, what instructions did they follow, how was labeling accuracy validated), demographic and statistical properties (what populations are represented, in what proportions, with what distributions), data limitations and known biases (what scenarios are underrepresented, what systemic biases exist, where might the data mislead), data versioning and change history (which dataset version trained which model version, what changed between versions), and data retention and disposal policies.

This level of documentation doesn’t happen accidentally. Organizations need data catalog systems that capture training data metadata, labeling platforms that track who labeled what and when, version control systems that link dataset versions to model versions, and quality assessment tools that profile data characteristics.

Financial services organizations already document data lineage for regulatory reporting. AI Act compliance extends that documentation rigor to training datasets. Every training dataset becomes a governed data asset with metadata, ownership, quality metrics, and lineage.

Bias Detection and Mitigation

Article 10 explicitly requires that training datasets be examined for possible biases that could lead to discrimination. Organizations must identify potential biases, assess their impact, and implement appropriate mitigation measures.

Bias detection requires analyzing training data demographics. Does the dataset underrepresent protected groups? Does historical data reflect discriminatory practices? Do labels encode biased human judgments? Organizations must profile training data across sensitive attributes (even though those attributes may not be features in the model) to understand representation.

Common sources of training data bias include historical bias (data reflects past discrimination), representation bias (some groups are underrepresented), measurement bias (data collection methods disadvantage certain groups), aggregation bias (combining data from different populations masks within-group variation), and evaluation bias (validation data doesn’t match deployment context).

Mitigation strategies include resampling to balance representation, removing biased features, adjusting weights to equalize group treatment, post-processing model outputs to ensure fairness across groups, and collecting additional data to fill representation gaps.

The AI Act doesn’t mandate specific bias mitigation techniques. It requires that organizations identify biases, document their potential impact, and implement reasonable mitigations. What’s reasonable depends on the AI system’s risk level and impact on fundamental rights.

Organizations should establish bias review procedures for high-risk AI systems. Before training begins, data science teams and governance teams jointly review training data for bias indicators. Identified biases are documented. Mitigation approaches are selected and justified. Post-training, model outputs are tested for disparate impact across demographic groups.

This isn’t optional fairness engineering. It’s regulatory compliance. Auditors will ask: what biases did you find in training data, how did you mitigate them, how do you know mitigation was effective?

Model Lineage and Traceability

The AI Act requires that AI systems maintain logs recording their operation. For high-risk systems, this means complete traceability from input data through model processing to output decisions.

Lineage tracking must connect training datasets to trained models, trained models to deployed model versions, input data records to model predictions, and model predictions to business decisions or actions taken.

Technical documentation requirements include model architecture and hyperparameters, training process and convergence metrics, validation results and performance benchmarks, deployment environment and dependencies, and model version history and change logs.

Organizations need ML operations (MLOps) platforms that automatically track model lineage. Manual documentation doesn’t scale and creates gaps. Modern ML platforms capture training parameters, dataset versions, model artifacts, and deployment metadata automatically.

From a governance perspective, model lineage is metadata management applied to AI systems. The same data catalog that tracks database schemas and data flows must extend to model artifacts and training datasets. Models become first-class data assets with discoverable metadata.

Data Retention and Auditability

High-risk AI systems must retain logs for at least six months or longer based on applicable legal obligations. Organizations must be able to demonstrate compliance upon request by authorities.

Required retention includes training data or references to training data sources, training code and configuration, trained model artifacts and versions, validation results and test outputs, deployment logs showing model usage, prediction inputs and outputs, and bias assessment results and mitigation steps.

This creates significant storage requirements. Training datasets for large models are terabytes or petabytes. Model artifacts and logs accumulate continuously. Organizations need data retention strategies that balance compliance requirements with storage costs.

Retention policies should distinguish between training data (which might have separate retention requirements under GDPR), model artifacts (which need retention for compliance and rollback), and operational logs (which document system behavior).

For truly large datasets, organizations may retain data lineage and quality metrics rather than raw data itself. If training data came from a stable source with its own retention policies, documenting the source and acquisition date may suffice rather than duplicating storage.

Auditability means regulators can request evidence of compliance. Organizations must be able to produce training data documentation, bias assessments, validation results, and operational logs on demand. This requires organized artifact storage with efficient retrieval.

Governance Controls Required by August 2, 2026

Organizations have four months to implement AI Act compliance. Here are the specific data governance controls required for high-risk AI systems before the deadline.

Control 1: AI System Inventory and Risk Classification

Organizations must inventory all AI systems in use or development, classify each system by AI Act risk level, document the classification rationale, identify which systems require compliance by August 2, and prioritize implementation accordingly.

The inventory must include AI systems developed internally, AI systems procured from vendors, AI capabilities embedded in enterprise software, and AI services accessed via APIs.

Many organizations don’t actually know how many AI systems they have. Data science teams build models for specific use cases. IT teams deploy vendor products with embedded AI. Business units subscribe to SaaS platforms with AI features. No central inventory exists.

Creating the inventory requires cross-functional collaboration. Data governance teams lead the effort but need input from data science, IT, procurement, and business units. The inventory becomes a governed artifact maintained ongoing as new AI systems are developed or acquired.

For each AI system, document its purpose and use case, the risk classification (prohibited, high-risk, limited-risk, minimal-risk), the populations or domains affected, current compliance status, and required implementation actions.

This inventory feeds priority decisions. Organizations should focus compliance efforts on high-risk systems first, then limited-risk transparency requirements, then enhancement of minimal-risk systems.

Control 2: Training Data Quality Framework

Organizations must establish and enforce data quality standards for AI training data. This includes defining quality dimensions (completeness, accuracy, consistency, representativeness), setting measurable thresholds for each dimension, implementing validation processes that check quality before training, and rejecting or remediating data that fails quality checks.

Quality frameworks should differentiate requirements by AI system risk level. High-risk systems demand higher quality standards than minimal-risk systems. Representativeness matters more for systems affecting people than for inventory optimization.

Automated quality validation is essential. Manual quality checks don’t scale to the data volumes used in modern AI training. Organizations need data quality platforms that profile training datasets, check quality dimensions, and produce quality scorecards.

Quality frameworks must address both structured and unstructured training data. Image datasets require different quality checks than tabular data. Text data requires different validation than time series data. Organizations cannot use one-size-fits-all quality approaches.

Quality validation must happen early in the ML pipeline. By the time a model is trained and deployed, fixing training data quality problems is expensive. Quality gates before training begins prevent wasted effort.

Control 3: Bias Detection and Mitigation Process

Organizations must implement systematic bias review for high-risk AI systems. This includes analyzing training data demographics and representation, identifying potential sources of bias (historical, measurement, representation), assessing bias impact on protected groups, selecting and implementing mitigation techniques, and documenting bias findings and mitigation steps.

Bias review should be a formal gate in the AI development process. Before model training begins, data science teams conduct bias assessment with governance team oversight. Assessment results are documented. Mitigation approaches are selected based on findings. Post-training, model outputs are tested for fairness.

Organizations need tooling for bias detection. Fairness metrics libraries, demographic profiling tools, and disparate impact testing frameworks help quantify bias. Manual bias assessment is subjective and incomplete.

Bias mitigation techniques should be standardized. Organizations should not reinvent mitigation approaches for each model. Standard techniques (resampling, feature removal, fairness constraints, post-processing adjustments) should be documented with guidance on when each applies.

Documentation must capture what biases were identified, what impact they could have, what mitigation was attempted, and how effectiveness was measured. Auditors will review this documentation to assess whether organizations took bias seriously.

Control 4: Model Lineage and Documentation System

Organizations must implement systems that automatically track model lineage from training data through deployment. This includes capturing training dataset versions and metadata, recording model training parameters and code, linking models to training data, tracking model versions and deployment history, and maintaining technical documentation.

Modern MLOps platforms provide lineage tracking capabilities. Organizations should not attempt to build lineage tracking from scratch. Platforms like MLflow, Kubeflow, Azure ML, or SageMaker capture lineage automatically as part of model development workflows.

Lineage must be queryable. When an auditor asks “what training data produced this model,” the answer must be retrievable in minutes, not days of forensic investigation. When a model behaves unexpectedly in production, lineage enables root cause analysis.

Documentation templates should standardize what information is captured for each model. Organizations need model cards or model sheets that document intended use, training data characteristics, performance metrics, known limitations, bias assessment results, and deployment guidance.

Documentation is metadata. It belongs in the data catalog alongside data asset documentation. Models are governed assets requiring the same metadata rigor as critical datasets.

Control 5: Operational Logging and Monitoring

High-risk AI systems must log operation to enable traceability. Organizations must implement logging that captures input data to models, model predictions or decisions, timestamp and model version used, and user or system that triggered the prediction.

Logging requirements differ by AI system type. A batch credit scoring model needs different logs than a real-time fraud detection system. Organizations should define logging standards by AI system category.

Log retention for at least six months is mandatory. Organizations need storage infrastructure that handles log volumes economically. Hot storage for recent logs, warm storage for older logs, and cold storage for archived logs balance accessibility with cost.

Logs must be tamper-evident. Regulatory compliance demands proof that logs weren’t altered after creation. Append-only storage, cryptographic signing, or blockchain-based logging provide tamper evidence.

Monitoring should analyze logs for compliance and operational issues. Are models making decisions as expected? Are prediction distributions shifting over time? Are certain populations experiencing different outcomes?

Control 6: Governance Policies and Procedures

Organizations must document governance policies for AI systems. Required policies include AI system risk classification procedures, training data quality standards and validation, bias assessment and mitigation requirements, model validation and approval gates, deployment authorization and rollback procedures, and incident response for AI system failures.

Policies must be accessible to everyone involved in AI development and deployment. They should not be buried in legal documents. Practical policy guidance integrated into development workflows drives compliance.

Procedures should be specific enough to be implementable. A policy stating “assess bias in training data” without explaining how to assess bias or what constitutes acceptable bias leaves teams guessing. Procedures must provide concrete steps.

Governance policies should integrate with existing data governance frameworks. Organizations that have data quality policies, metadata management policies, and data classification policies extend those to cover AI-specific requirements. AI governance is an extension of data governance, not a separate program.

Control 7: Human Oversight Mechanisms

Article 14 requires that high-risk AI systems be designed to enable human oversight. From a data governance perspective, this means ensuring humans can access the data supporting AI decisions, understand model reasoning, and override AI outputs when appropriate.

Human oversight requires technical capabilities: explainability interfaces that show why a model made a prediction, access to input data and model outputs for review, override mechanisms that let humans change AI decisions, and audit trails capturing human interventions.

Organizations must define who has oversight responsibility for each AI system. Is it the business user receiving recommendations? A specialized review team? Compliance officers? Oversight responsibility must be clear and empowered.

Training for oversight personnel is essential. Humans cannot provide effective oversight without understanding how AI systems work, what limitations they have, and what errors to watch for. Organizations need training programs for AI oversight roles.

Governance ensures oversight happens in practice, not just in theory. Policies must define when oversight is required, who is responsible, what actions oversight personnel can take, and how interventions are documented.

Leveraging Existing Data Governance Capabilities

Organizations with mature data governance programs have capabilities that apply directly to AI Act compliance. Understanding what transfers and what’s genuinely new helps optimize implementation effort.

Data Quality Management

Established data quality programs provide significant advantage. Organizations that already validate data completeness, accuracy, consistency, and timeliness can extend those capabilities to training data.

Existing quality rules and validation logic apply. A rule that validates customer address completeness works for training datasets containing customer addresses. A consistency check between related fields transfers to training data.

What’s new is validating representativeness and bias. Traditional data quality programs don’t typically check whether data represents diverse populations proportionally. Demographic profiling and bias detection extend quality programs into new dimensions.

Organizations should inventory existing quality rules and tools. Which rules apply to training data? Which quality dimensions are already monitored? Where are gaps? This assessment shows what capabilities transfer versus what needs building.

Quality tooling often supports training data with minor configuration. Data quality platforms that profile databases can profile data files used in model training. The same validation logic runs in different contexts.

The main adaptation is making quality validation a required gate in ML pipelines. Quality checks that are optional for analytical datasets must be mandatory for high-risk AI training data.

Metadata Management and Data Catalogs

Data catalog investments pay dividends for AI compliance. Organizations with catalogs that track data lineage, document data definitions, capture business glossaries, and record data ownership extend those capabilities to training datasets.

Training datasets become cataloged assets. Each dataset gets metadata entries documenting source, purpose, quality metrics, ownership, and access controls. The catalog shows which datasets trained which models.

Lineage tracking extends from operational data flows to model training flows. A lineage graph showing how source data flows through transformation to analytical marts extends to show how those marts flow into model training.

What’s new is tracking ML-specific metadata: model architectures, hyperparameters, validation metrics, and bias assessment results. Data catalogs need ML extensions to capture this information alongside traditional data metadata.

Organizations should evaluate whether existing catalogs support ML metadata. Some catalogs have native ML capabilities. Others integrate with MLOps platforms. Organizations may need catalog extensions or complementary ML tracking tools.

The goal is unified metadata. Data governance teams should be able to query one system to understand data from source systems through transformation to analytical use to ML training to model deployment. Fragmented metadata across multiple tools creates compliance gaps.

Access Controls and Data Protection

Existing access control frameworks apply to training data. Organizations that restrict access to sensitive data in operational systems must apply the same restrictions to training datasets containing that data.

If customer financial data has access controls limiting who can view it, training datasets containing customer financial data inherit those controls. If patient health information requires specific authorization, training datasets with health information require the same authorization.

What’s new is managing access to model artifacts and predictions. Models trained on sensitive data may themselves be sensitive. Model outputs may reveal information about training data. Access controls extend beyond data to models and predictions.

Organizations should review existing data classification schemes. Do classifications cover AI use cases? If data is classified as confidential, does that classification restrict use in model training? Organizations need clear policies on whether data classification constrains AI usage.

Training data derived from production data should inherit production data’s access controls. If a training dataset is extracted from a governed database, extraction should preserve access control metadata and apply it to the training dataset.

Data Lineage Tracking

Organizations tracking operational data lineage have infrastructure that extends to model training lineage. Lineage tools that map data flows from source to consumption can map training data flows from source to model.

The same questions apply: where did this data originate, how was it transformed, who touched it, when did changes occur? For training data, lineage shows data journey from production systems through extraction and preprocessing to model training.

What’s new is capturing model training as a lineage step. Traditional lineage shows data moving between systems. AI lineage shows data being consumed by model training, producing model artifacts, which then consume input data to produce predictions.

Lineage tools must track model versions alongside data versions. Which dataset version trained model version 2.1? When model version 2.1 produces a prediction, lineage traces back through the model to its training data.

Organizations should extend lineage tracking to include ML platforms. If lineage currently tracks data movement through Informatica, dbt, or data factory pipelines, it should also track data movement into TensorFlow, PyTorch, or scikit-learn training jobs.

Complete lineage enables compliance evidence. When auditors ask about a specific AI decision, lineage should trace that decision to the model that made it, to the training data that produced that model, to the source systems that provided that training data.

Data Stewardship and Ownership

Established data stewardship programs assign ownership and accountability for data assets. This model extends naturally to training datasets and AI systems.

Training datasets need data stewards just like operational datasets. Stewards are responsible for data quality, metadata completeness, access control enforcement, and compliance with governance policies.

AI systems need owners accountable for the system’s operation, compliance with AI Act requirements, and remediation when issues arise. Ownership shouldn’t default to data science teams who built models. Business owners who deploy AI systems should own compliance.

What’s new is defining stewardship for model artifacts and predictions. Are models governed like code or like data? Who is accountable for model behavior in production? Organizations need clarity on AI asset ownership.

Governance councils should expand scope to include AI governance. The council that resolves data governance issues can resolve AI governance issues using the same decision-making processes and authority.

Stewardship programs already have training, support, and community infrastructure. Extending that infrastructure to AI stewards is more efficient than building parallel AI-specific programs.

What’s Genuinely New for AI Compliance

While existing governance capabilities help significantly, AI Act compliance requires some genuinely new capabilities that most organizations haven’t implemented.

Bias Assessment and Fairness Testing

Few organizations systematically assess training data bias or test model fairness. This is new territory requiring new skills, new tools, and new processes.

Bias assessment requires demographic analysis even for organizations that avoid collecting demographic data. Understanding representation in training data means profiling data by race, gender, age, and other protected attributes even if models don’t use those features.

Organizations need frameworks for defining fairness. Equal outcome fairness, equal opportunity fairness, demographic parity, and calibration across groups represent different fairness definitions. Which definition applies depends on use case. Organizations must choose and justify their fairness approach.

Fairness testing requires comparing model behavior across groups. Does the model have similar accuracy for all demographic groups? Do false positive rates differ? Do predicted outcomes distribute equitably?

This work requires new skills. Data scientists need training in fairness metrics and bias mitigation techniques. Governance teams need training to oversee bias assessment. Organizations may need to hire fairness specialists.

New tooling is required. Fairness libraries (AI Fairness 360, Fairlearn, What-If Tool) provide bias metrics and mitigation techniques. Organizations should standardize on fairness tools rather than building from scratch.

Model Explainability and Interpretability

The AI Act requires that deployers of high-risk systems understand how models make decisions. This pushes organizations toward interpretable models or explainability techniques for complex models.

Explainability requirements vary by AI system risk and impact. A fraud detection model may need less explainability than a loan approval model if fraud decisions are reviewed by humans. Organizations must determine explainability requirements per system.

Technical approaches include using inherently interpretable models (linear models, decision trees, rule sets) when possible, applying post-hoc explanation techniques (LIME, SHAP, counterfactual explanations) to complex models, and building explanation interfaces that present model reasoning to oversight personnel.

Governance policies must define explainability standards. What level of explanation is required for high-risk systems? Who needs access to explanations? How are explanations validated for accuracy?

Explainability infrastructure is new for most organizations. Data science teams may use explanation techniques in model development but haven’t operationalized explanations for production systems. Deploying explanations to end users or oversight personnel requires new interfaces and infrastructure.

Continuous Monitoring and Model Drift Detection

The AI Act requires ongoing monitoring of AI system performance. Models that degrade over time or behave differently than during validation create compliance risk.

Organizations need monitoring that detects prediction distribution shifts (is the model making different types of predictions than expected), performance degradation (is accuracy or other metrics declining), fairness drift (are fairness metrics changing over time), and data drift (is input data distribution changing).

Monitoring requires baselines. Organizations must establish expected model behavior during validation, then detect deviation from those expectations in production. Baselines come from validation datasets and early production operation.

Alerting must trigger investigation when monitoring detects issues. Organizations cannot simply collect monitoring data without acting on it. Governance policies must define thresholds for investigation and escalation.

Retraining processes must address drift. When models degrade, organizations need processes to retrain with updated data, revalidate performance and fairness, obtain approval for deployment, and roll out updated models while maintaining compliance.

This continuous monitoring and retraining represents new operational burden. Organizations that view ML models as static artifacts once deployed must shift to treating models as living systems requiring ongoing governance.

Comprehensive Technical Documentation

While organizations document data systems, AI Act technical documentation requirements exceed typical data documentation. The regulation demands detailed technical documentation including model architecture and design, training methodology and parameters, validation approach and results, known limitations and error analysis, and human oversight procedures.

Documentation must be sufficient for competent authority assessment. Regulators who are not AI specialists must be able to understand what the AI system does, how it was developed, what limitations it has, and how oversight functions.

This requires translating technical details into accessible language. Documentation written for data scientists must be supplemented with documentation for compliance reviewers and auditors who lack ML expertise.

Organizations need documentation templates that standardize what’s captured. Ad-hoc documentation created per project creates gaps and inconsistency. Templates ensure complete documentation across all high-risk AI systems.

Documentation generation should be partially automated. MLOps platforms can extract model architecture, parameters, and metrics automatically. Manual documentation should focus on context, limitations, and oversight procedures that platforms cannot infer.

AI-Specific Governance Roles

While data stewardship transfers to AI governance, some roles are genuinely new. Organizations may need AI ethics officers who assess AI system impact on fundamental rights, model validators who independently verify AI system performance and fairness, AI compliance officers who ensure AI Act requirements are met, and human oversight coordinators who manage human review of AI decisions.

These roles overlap with but differ from traditional governance roles. A data steward ensures data quality. An AI ethics officer assesses whether using that data for AI is appropriate. Both are necessary.

Organizations should clarify role boundaries. Who is accountable when an AI system produces biased outcomes — the data steward, the data scientist, the model validator, or the business owner? Clear accountability prevents gaps and finger-pointing.

Small organizations cannot create specialist roles for every function. In smaller organizations, existing roles expand to include AI responsibilities. Data stewards add AI data quality. Compliance officers add AI compliance. The key is clearly assigning accountability even when roles combine.

Implementation Timeline: April to August 2026

Organizations have approximately four months remaining until the August 2, 2026 enforcement deadline. Here is a realistic implementation timeline for high-risk AI systems.

April 2026: Assessment and Prioritization

Week 1-2: Conduct comprehensive AI system inventory across all business units, IT, and data science teams. Document every AI system regardless of development stage. Include internal systems, vendor products, and SaaS services.

Week 2-3: Classify each AI system by risk level using AI Act criteria. Document classification rationale. Identify all high-risk systems requiring immediate compliance. Create prioritized implementation list.

Week 3-4: Assess current compliance status for each high-risk system. What documentation exists? What controls are in place? What gaps must be closed? Create detailed gap analysis per system.

Week 4: Develop implementation plan with resource allocation, responsibility assignment, and milestone dates. Secure executive sponsorship and budget if not already obtained.

Deliverable: Comprehensive AI system inventory, risk classifications, gap analysis, and implementation plan.

May 2026: Foundation Building

Week 1-2: Implement or enhance data quality framework for training data. Define quality dimensions and thresholds. Configure quality validation tools. Create quality gates in ML pipelines.

Week 2-3: Establish bias detection and mitigation process. Select fairness metrics and tools. Create bias review templates and procedures. Train data science teams on bias assessment.

Week 3-4: Deploy or configure model lineage tracking. Implement MLOps platform if not already present. Configure lineage capture. Establish metadata management for models and training data.

Week 4: Document governance policies for AI systems covering risk classification, data quality, bias assessment, validation, and deployment authorization.

Deliverable: Data quality framework, bias assessment process, lineage tracking system, and documented policies.

June 2026: System-Specific Implementation

Week 1-4: Apply governance controls to highest-priority high-risk AI systems. For each system conduct training data quality validation, perform bias assessment, document model lineage, create technical documentation, implement logging and monitoring, and establish human oversight procedures.

Work through priority list systematically. Complete highest-risk systems first. Organizations with many high-risk systems may not complete all by deadline — prioritize systems with highest regulatory exposure.

Week 4: Conduct internal compliance review for completed systems. Validate that documentation is complete, controls are operating, and systems meet AI Act requirements.

Deliverable: Compliant high-risk AI systems with complete documentation and operational controls.

July 2026: Validation and Remediation

Week 1-2: Conduct comprehensive compliance validation across all high-risk systems. Simulate regulatory review. Identify remaining gaps or weaknesses.

Week 2-3: Remediate identified gaps. Complete missing documentation. Fix control deficiencies. Validate bias mitigation effectiveness.

Week 3-4: Finalize documentation packages for each high-risk AI system. Organize evidence for potential regulatory requests. Ensure documentation is retrievable and presentable.

Week 4: Train personnel on AI Act requirements and compliance procedures. Ensure data science teams, governance teams, and business owners understand obligations.

Deliverable: Validated compliance for all high-risk systems with complete documentation packages.

August 1, 2026: Final Preparation

Final review of all high-risk AI systems. Confirm controls are operational. Verify documentation is complete and accurate. Ensure teams know compliance procedures.

Prepare for enforcement beginning August 2. High-risk systems not compliant should be taken offline or have human review layers added to reduce risk pending completion.

Organizations should conduct post-implementation review to assess what went well, what challenges arose, and how future AI systems can be developed with compliance built in from the start rather than retrofitted.

Post-August Implementation: Limited and Minimal Risk Systems

After high-risk compliance is achieved, organizations should address limited-risk transparency requirements and enhance minimal-risk systems.

Limited-risk systems need AI disclosure mechanisms for chatbots and virtual assistants, clear labeling for AI-generated content, and deepfake detection and marking.

Minimal-risk systems benefit from basic governance — training data documentation, quality monitoring, and lineage tracking — even though not legally required. Good governance improves AI system reliability regardless of regulatory mandate.

Organizations should establish ongoing governance for new AI systems. Future development should integrate governance from inception rather than retrofitting compliance. Policies should require governance review before AI systems reach production.

Common Implementation Challenges

Organizations implementing AI Act compliance encounter predictable challenges. Understanding these challenges helps plan mitigation strategies.

Challenge 1: Lack of Training Data Documentation

Many existing AI systems have poor training data documentation. Data scientists remember roughly what data they used but lack detailed documentation of sources, preprocessing, or version control.

Recreating documentation for deployed models is difficult. If training data is no longer available or has changed, precise documentation may be impossible. Organizations face tough decisions about whether to redevelop models with proper documentation or retrofit documentation for existing systems.

Mitigation requires accepting that some existing systems will have incomplete documentation. Organizations should document what is knowable (model architecture, validation results, current performance) and note where historical information is unavailable. For critical systems with poor documentation, consider retraining with proper governance rather than attempting to document undocumented training data.

Going forward, organizations must mandate documentation during development. Policies should require that training data is cataloged, versioned, and profiled before training begins. No model reaches production without complete training data documentation.

Challenge 2: Distributed AI Development

AI development happens across organizations. Data science teams, business analysts with AutoML tools, and IT teams deploying vendor products all create AI systems. Central governance teams may not know what exists.

Organizations that lack central oversight of AI development struggle to create comprehensive inventories. Business units deploy AI-enabled SaaS applications without governance review. Data science teams experiment with models that drift into production use.

Mitigation requires establishing governance authority over AI development. Policies must require that all AI systems, regardless of development source, undergo classification and compliance review before production deployment. Procurement processes must flag AI-enabled software for governance review.

Organizations should implement AI system registries where all development teams register their work. The registry feeds the compliance inventory. Registration should be required, not optional.

For discovery of unregistered systems, organizations may need to scan infrastructure for ML frameworks, review software licenses for AI capabilities, and survey business units for shadow AI.

Challenge 3: Insufficient Tooling

Many organizations lack tooling for bias detection, fairness testing, and model explainability. Data science teams use open-source libraries in research but haven’t operationalized those tools for production compliance.

Building enterprise-grade tooling from scratch is expensive and slow. Organizations cannot build comprehensive MLOps platforms in four months. Buying platforms takes time for procurement, implementation, and integration.

Mitigation focuses on minimum viable tooling for August deadline. Organizations should implement basic capabilities using open-source tools and manual processes, then enhance with enterprise platforms post-deadline. A bias assessment conducted with open-source fairness libraries and documented in spreadsheets meets compliance better than no assessment because platforms weren’t ready.

Organizations should prioritize tooling investments on highest-impact capabilities: lineage tracking provides broad value, bias detection is required for high-risk systems, and quality validation prevents training on bad data.

Cloud ML platforms (Azure ML, AWS SageMaker, Google Vertex AI) provide integrated governance capabilities. Organizations using cloud ML should leverage native governance features rather than building separate tools.

Challenge 4: Unclear Risk Classification

The AI Act’s risk classification criteria include gray areas. Organizations disagree on whether specific use cases are high-risk. Conservative classification creates compliance burden for lower-risk systems. Aggressive classification creates enforcement exposure.

Determining whether an AI system “significantly affects” access to essential services or “evaluates” individuals requires interpretation. Organizations need legal guidance but find that lawyers are also navigating new regulation.

Mitigation involves documenting classification rationale. If an organization classifies a system as limited-risk rather than high-risk, that decision and its justification must be documented. If regulators disagree, documentation shows thoughtful assessment rather than careless avoidance.

Organizations should err toward classifying borderline systems as high-risk. The cost of over-compliance is implementation effort. The cost of under-compliance is €35 million fines.

Industry groups and trade associations may provide classification guidance as implementations proceed. Organizations should monitor guidance from relevant industries and regulatory authorities.

Challenge 5: Resource Constraints

Implementing AI governance across many systems in four months requires significant resources. Data science teams are already busy with model development. Governance teams have other compliance obligations. Organizations lack specialized AI governance expertise.

Small organizations face particularly severe constraints. A 200-person company cannot hire dedicated AI ethics officers and model validators. Existing staff must absorb AI compliance alongside current responsibilities.

Mitigation requires ruthless prioritization. Not every AI system can receive equal attention. Organizations must focus resources on truly high-risk systems and accept lighter compliance for lower-risk systems.

Organizations should consider external support. Consultants specializing in AI governance can accelerate implementation, though they don’t replace internal accountability. Vendors of governance platforms often provide implementation services.

Training existing staff creates capacity. Data stewards can learn AI governance. Data scientists can learn governance requirements. Compliance officers can learn AI Act specifics. Training is faster and cheaper than hiring.

Organizations must set realistic expectations. Perfect compliance across all systems by August 2 may not be achievable. Getting high-risk systems to acceptable compliance and having plans for remaining systems is realistic.

Vendor AI Systems and Third-Party Compliance

Organizations using AI systems from vendors face unique compliance challenges. The AI Act places obligations on both providers (who develop AI systems) and deployers (who use AI systems). Organizations deploying vendor AI must understand their compliance obligations.

Provider vs Deployer Responsibilities

Providers of high-risk AI systems must ensure systems meet AI Act requirements before market release: implement risk management, ensure training data quality and bias mitigation, create technical documentation, maintain quality management systems, and undergo conformity assessment.

Deployers of high-risk AI systems must use systems according to instructions, ensure human oversight, monitor operation and performance, report serious incidents, and maintain logs for six months.

Organizations deploying vendor AI cannot assume vendors handle all compliance. Deployers have independent obligations even when using compliant vendor systems.

Vendor Assessment and Due Diligence

Organizations should assess vendors’ AI Act compliance before procuring or deploying vendor AI systems. Required vendor documentation includes AI Act conformity assessment results, technical documentation demonstrating compliance, instructions for use including deployment requirements, and transparency information for affected individuals.

Organizations should require vendors to contractually warrant AI Act compliance and indemnify deployers for vendor compliance failures. Contracts should specify that vendors maintain compliance as regulations evolve.

For existing vendor systems deployed before the AI Act, organizations should request updated documentation demonstrating compliance. Vendors of high-risk AI systems must achieve compliance or remove systems from EU market.

SaaS and Cloud AI Services

Organizations using AI-as-a-service offerings (OpenAI APIs, Google Cloud AI, AWS ML services) must understand how service providers handle compliance. Some services are general-purpose tools where deployers are providers (organizations training custom models on cloud platforms). Other services are pre-built AI where service providers are providers (organizations using vendor-trained models via API).

Cloud platform providers are building AI governance capabilities into their services. Organizations should leverage native compliance features rather than building parallel systems. Azure AI Content Safety, AWS AI Service Cards, and Google Model Cards provide vendor-implemented governance.

Organizations must still implement deployer obligations even when using compliant cloud services: classify their use case risk level, implement human oversight, monitor performance, and maintain logs.

Custom vs Vendor AI Governance

Organizations using both custom-developed and vendor AI need consistent governance across both. Governance policies should apply equally regardless of development source. Risk classification, human oversight, monitoring, and logging requirements apply to vendor and custom AI.

Different governance controls may implement across custom and vendor systems. Custom systems need full development lifecycle governance (training data quality, bias assessment, model validation). Vendor systems need procurement governance (vendor assessment, contract compliance, deployment controls).

Organizations should inventory vendor and custom AI in the same registry. The compliance status, risk level, and governance controls should be visible regardless of source. Governance teams need unified view across all AI systems.

Industry-Specific Considerations

Different industries face unique AI Act challenges based on their AI use cases and existing regulatory environment.

Financial Services

Banks and financial institutions extensively use AI for credit decisions, fraud detection, customer service, trading, and risk management. Many applications are high-risk under the AI Act.

Financial services already operate in heavily regulated environments. GDPR, Basel III, MiFID II, and national banking regulations create existing compliance infrastructure. Financial institutions should integrate AI Act requirements into existing compliance frameworks rather than creating separate AI compliance programs.

Credit scoring and lending decisions are explicitly high-risk under the AI Act. Banks must document training data, assess bias in credit models, ensure fairness across demographic groups, and maintain complete decision traceability. These requirements overlap with existing fair lending obligations in many jurisdictions.

Fraud detection models require careful classification. If fraud models merely flag transactions for human review, they may be lower risk than models that automatically block transactions. Banks should evaluate actual deployment context not just model capability.

Financial regulators increasingly focus on AI governance. Banks should expect regulatory examinations to include AI Act compliance review alongside traditional financial regulation compliance.

Healthcare

Healthcare providers use AI for diagnosis support, treatment planning, patient monitoring, and administrative optimization. Medical AI faces strict regulation as products under EU medical device regulations in addition to AI Act requirements.

AI systems that qualify as medical devices must comply with both medical device regulations and AI Act. Organizations cannot choose one or the other. Both apply simultaneously.

Training data for healthcare AI often contains protected health information requiring GDPR compliance and healthcare-specific data protection. Organizations must reconcile AI Act training data documentation with patient privacy obligations.

Bias in healthcare AI can lead to differential health outcomes across populations. Healthcare organizations should conduct rigorous bias assessment given the stakes. Algorithmic health disparities create both compliance risk and patient harm.

Many healthcare AI systems assist but don’t replace human clinicians. Organizations should document physician oversight procedures to satisfy AI Act human oversight requirements.

Manufacturing

Manufacturing uses AI for predictive maintenance, quality control, supply chain optimization, and autonomous systems. Safety-critical applications in industrial settings are high-risk under AI Act.

Manufacturing organizations with experience in ISO 9001 quality management and ISO safety standards can apply similar rigor to AI governance. Quality management disciplines transfer well to AI compliance.

Industrial IoT generates enormous training data volumes from sensors and systems. Manufacturing organizations need scalable data quality validation for IoT data used in AI training. Traditional quality approaches designed for small datasets don’t handle petabyte-scale sensor data.

Autonomous systems in manufacturing (robots, automated vehicles) require careful risk classification. Autonomous systems that can cause physical harm are explicitly high-risk requiring comprehensive compliance.

Supply chain AI often uses data from external partners. Manufacturing organizations must ensure data quality and bias assessment for third-party data used in AI training.

Retail and E-Commerce

Retailers use AI for recommendation systems, pricing optimization, inventory management, and customer service automation. Some applications are high-risk while others are minimal-risk.

Recommendation systems are generally minimal-risk unless they significantly affect access to essential services. Entertainment recommendations are minimal-risk. Recommendations affecting access to housing, healthcare, or financial services could be high-risk.

Dynamic pricing using AI requires careful analysis. Pricing that results in discrimination based on protected characteristics violates existing law and could constitute high-risk AI requiring compliance.

Customer service chatbots are limited-risk requiring transparency. Retailers must disclose that customers are interacting with AI. Virtual assistants must be clearly identified as automated.

Retailers using AI for hiring, performance management, or workplace monitoring face high-risk classification. HR applications of AI carry significant compliance obligations.

Government and Public Sector

Government agencies use AI for benefit determination, law enforcement, public safety, resource allocation, and administrative efficiency. Many government AI applications are explicitly high-risk under AI Act.

Public sector AI receives particular scrutiny because government decisions affect fundamental rights. Benefit eligibility, law enforcement, and resource allocation AI all fall under high-risk categories.

Government agencies often lack resources for compliance implementation. Public sector organizations should seek guidance from national AI regulatory authorities and coordinate across agencies rather than each implementing independently.

Transparency requirements apply especially strongly to government AI. Citizens have right to understand how government makes decisions affecting them. Government agencies should prioritize explainability and human oversight.

AI used in law enforcement faces restrictions. Predictive policing, recidivism assessment, and similar applications require careful implementation with strong bias mitigation and human oversight.

Preparing for Regulatory Audits

Organizations should expect that regulatory authorities will audit AI Act compliance. Preparation now reduces risk when auditors arrive.

What Auditors Will Request

Based on financial services and data protection audits, AI Act auditors will likely request AI system inventory and risk classifications, technical documentation for high-risk systems, training data quality and bias assessment results, model validation reports and performance metrics, human oversight procedures and evidence of operation, incident logs and serious incident reports, and policies and procedures governing AI development and deployment.

Organizations should prepare evidence packages for each high-risk AI system. When auditors request documentation for a specific system, evidence should be retrievable within hours, not days of scrambling.

Documentation Best Practices

Auditors value clear, organized, complete documentation more than volume. A 10-page document clearly explaining model training beats a 100-page document that obscures key information in technical detail.

Documentation should follow standard templates. Every high-risk system should have documentation following the same structure. Consistent documentation helps auditors review efficiently and shows systematic compliance.

Documentation should be version-controlled. When models are retrained or updated, new documentation versions are created. Auditors may want to see documentation from when a model was deployed, not just current documentation.

Documentation should distinguish facts from interpretations. Factual statements (training dataset contained 50,000 records) are verifiable. Interpretive statements (training data was representative) require supporting evidence. Documentation should provide both.

Evidence Organization

Organizations should create centralized repositories for compliance evidence. Scattered documentation across SharePoint sites, wikis, and local drives creates audit risk. Centralized repositories with clear organization enable efficient evidence production.

Evidence should be indexed and searchable. Auditors may request evidence for specific topics across multiple systems. Organizations should be able to retrieve all bias assessment reports or all model validation results efficiently.

Compliance evidence should be preserved even after AI systems are decommissioned. Organizations may need to defend past decisions years later. Archival storage for compliance evidence protects against future audits.

Demonstrating Ongoing Compliance

AI Act compliance is not one-time implementation. Organizations must demonstrate continuous compliance through monitoring, model retraining with continued quality and bias assessment, incident investigation and remediation, and policy updates as regulations evolve.

Auditors will review evidence of ongoing governance, not just initial deployment compliance. Organizations should maintain audit trails showing that governance controls operate continuously.

Regular internal compliance reviews create audit readiness. Organizations should periodically review their own compliance, identify gaps, and remediate before external audits discover issues.

Long-Term AI Governance Beyond Compliance

AI Act compliance is a floor, not a ceiling. Organizations should build AI governance that exceeds regulatory minimums to enable responsible AI innovation.

Governance as Enabler, Not Blocker

Well-designed AI governance accelerates AI adoption by reducing risk, building trust, and preventing failures. Poor governance that focuses only on control slows innovation and creates workarounds.

Governance should provide clear paths for compliant AI development. When data science teams know exactly what documentation, validation, and approval is required, they can build those steps into development from the start rather than discovering requirements at the end.

Governance should differentiate requirements by risk. Minimal-risk AI should have lightweight governance enabling rapid experimentation. High-risk AI should have rigorous governance reflecting serious consequences. One-size-fits-all governance creates friction for low-risk innovation.

Ethical AI Beyond Legal Requirements

The AI Act addresses fundamental rights and safety but doesn’t cover all ethical considerations. Organizations should consider whether AI systems align with organizational values, respect human dignity beyond legal requirements, promote fairness and equity, avoid harm even when not prohibited, and create positive social impact.

Some organizations establish AI ethics boards that review high-stakes AI beyond legal compliance. Ethics review considers whether organizations should build AI systems even if they legally could.

Ethical AI governance complements regulatory compliance. Compliance is mandatory floor. Ethics is voluntary ceiling. Organizations competing on trust and reputation benefit from governance exceeding legal minimums.

Building AI Governance Maturity

Organizations should evolve AI governance maturity over time. Initial compliance is reactive — implementing controls to meet deadline. Mature governance is proactive — embedding governance in development from inception.

Maturity evolution includes standardizing governance processes across all AI development, automating compliance checks and documentation, integrating governance into ML tooling and platforms, building organizational AI governance culture, and continuously improving based on incidents and near-misses.

Organizations at maturity level 1 manually document compliance. Organizations at maturity level 5 automatically generate compliance evidence as byproduct of standard development workflows.

Investment in governance tooling and automation pays dividends. Initial manual implementation is necessary for August deadline. Post-deadline, organizations should automate what’s repeatable and focus human effort on judgment requiring decisions.

Competitive Advantage Through Governance

Organizations with superior AI governance gain competitive advantages through faster AI deployment enabled by clear processes, lower compliance costs through efficient governance, reduced AI incident risk through systematic controls, stronger customer and regulator trust, and ability to attract AI talent seeking responsible employers.

AI governance is becoming a competitive differentiator like data governance and cybersecurity before it. Organizations that treat governance as strategic capability rather than compliance burden will lead their industries in AI adoption.

Frequently Asked Questions

What is the EU AI Act? The EU AI Act is the world’s first comprehensive legal framework for artificial intelligence, establishing risk-based rules for AI system development, deployment, and use across the European Union. The regulation became law in 2024 and most provisions, including requirements for high-risk AI systems, become enforceable on August 2, 2026. The AI Act uses a tiered approach with prohibited practices (AI systems that are banned), high-risk systems (requiring strict compliance with data governance, documentation, and oversight requirements), limited-risk systems (requiring transparency about AI use), and minimal-risk systems (no specific AI obligations). Organizations deploying AI systems affecting people in the EU must comply regardless of where the organization is located.

Does the EU AI Act apply to my organization? The AI Act applies to providers placing AI systems on the EU market, deployers using AI systems within the EU, and organizations located outside the EU whose AI system outputs are used in the EU. If your AI systems affect people in European countries — through direct service delivery, products sold in EU markets, or outputs consumed by EU residents — the AI Act likely applies. The regulation has extraterritorial reach similar to GDPR. Organizations should assess each AI system individually since some systems may have EU reach while others do not. When in doubt, assume the AI Act applies and conduct formal scoping rather than assuming exemption.

What data governance requirements apply to high-risk AI systems? High-risk AI systems must implement comprehensive data governance covering training data quality (relevant, representative, free of errors, and complete), bias detection and mitigation (examining datasets for biases that could lead to discrimination), model lineage and traceability (tracking data through training to deployment to decisions), technical documentation (detailed records of data sources, preprocessing, labeling, and limitations), human oversight capabilities (enabling humans to access decision data and override AI outputs), and operational logging (maintaining six months of logs showing AI system operation). Organizations must establish quality thresholds, implement validation processes, document bias assessments, maintain model lineage from training data to production decisions, create comprehensive technical documentation, and enable human review and override of AI decisions.

How do I classify my AI system by risk level? AI Act risk classification depends on the system’s purpose and potential impact. Prohibited systems include those manipulating behavior through subliminal techniques, exploiting vulnerable groups, social scoring by public authorities, and most real-time biometric identification. High-risk systems include AI in critical infrastructure safety components, biometric identification and categorization, education access and evaluation, employment and worker management, essential service access (credit, benefits, emergency services), law enforcement, migration and border control, and administration of justice. Limited-risk systems include chatbots, virtual assistants, and synthetic content generation. Minimal-risk systems include most other applications like spam filters and recommendation systems. Organizations should document their classification rationale and err toward higher-risk classification for borderline cases since the cost of non-compliance far exceeds compliance effort.

What documentation must I maintain for compliance? Required documentation includes an AI system inventory with risk classifications and compliance status, training data documentation covering sources, preprocessing, quality metrics, bias assessment, and limitations, model technical documentation including architecture, training methodology, validation results, and known limitations, bias assessment reports showing identified biases and mitigation approaches, model lineage connecting training datasets to models to production decisions, operational logs capturing at least six months of AI system activity, human oversight procedures and evidence of oversight operation, incident reports for AI system failures or unexpected behavior, and governance policies covering risk classification, quality standards, bias assessment, and deployment authorization. Documentation should be organized by AI system with central indexing for efficient retrieval during audits.

What happens if I’m not compliant by August 2, 2026? Organizations operating non-compliant high-risk AI systems after August 2, 2026 face enforcement action from national regulatory authorities. Penalties include fines up to €35 million or 7% of global annual revenue for serious violations (prohibited AI practices, non-compliant high-risk systems), fines up to €15 million or 3% of revenue for providing incorrect information to authorities, and administrative measures including system suspension or withdrawal from market. Beyond financial penalties, non-compliance damages reputation, creates customer trust issues, limits AI deployment capability, and exposes organizations to legal liability if AI systems cause harm. Organizations that cannot achieve full compliance by August should prioritize highest-risk systems, implement human review layers as interim controls for non-compliant systems, document good-faith compliance efforts, and create remediation plans with clear timelines.

Can I use existing data governance for AI Act compliance? Existing data governance capabilities provide significant advantage for AI Act compliance. Data quality programs extend to training data validation, metadata management systems catalog training datasets and models, access controls apply to sensitive training data and model artifacts, lineage tracking maps training data flows to model deployment, and stewardship programs assign accountability for AI data assets. However, genuinely new capabilities are required including bias detection and fairness testing across demographic groups, model explainability and interpretability for human oversight, continuous monitoring for model drift and performance degradation, comprehensive technical documentation exceeding typical data documentation, and AI-specific governance roles like model validators and ethics officers. Organizations should leverage existing governance infrastructure while adding AI-specific extensions rather than building parallel governance programs.