A data catalog is a searchable, organized inventory of an organization’s data assets — including databases, files, reports, APIs, and streaming data — along with the metadata that describes each asset’s meaning, origin, quality, and usage.

Think of it as a library card system for your enterprise data. Just as a library catalog tells you what books exist, where they’re shelved, and what they’re about, a data catalog tells your analysts, data engineers, and business users exactly what data your organization has, where it lives, how trustworthy it is, and how to access it.

Modern enterprise data catalogs go far beyond simple inventory. They provide data lineage visualization, automated metadata discovery, collaboration features, data quality scoring, and governance policy enforcement — making them one of the most critical tools in a modern data governance program.

In this comprehensive guide, you’ll learn everything you need to know about data catalogs: what they are, how they work, why organizations need them, what features to look for, how they differ from data dictionaries and data inventories, and which tools lead the market in 2026.

- What Is a Data Catalog? (Definition)

- Why Organizations Need a Data Catalog

- How a Data Catalog Works

- Key Components of a Data Catalog

- Data Catalog vs. Data Dictionary vs. Data Inventory

- Types of Data Catalogs

- Benefits of a Data Catalog

- Data Catalog Features to Look For

- Top Data Catalog Tools in 2026

- How a Data Catalog Supports Data Governance

- Data Catalog Use Cases by Industry

- How to Implement a Data Catalog

- Common Data Catalog Challenges

- The Future of Data Catalogs: AI and Automation

- Frequently Asked Questions

- Summary

- ✅ AIOSEO SETTINGS — PASTE DIRECTLY INTO WORDPRESS

- 📋 FEATURED SNIPPET OPTIMIZATION

- What Is a Data Catalog? The Complete Guide for 2026

- Table of Contents

- What Is a Data Catalog? (Definition)

- Why Organizations Need a Data Catalog

- How a Data Catalog Works

- Key Components of a Data Catalog

- Data Catalog vs. Data Dictionary vs. Data Inventory

- Types of Data Catalogs

- Benefits of a Data Catalog

- Data Catalog Features to Look For

- Top Data Catalog Tools in 2026

- How a Data Catalog Supports Data Governance

- Data Catalog Use Cases by Industry

- How to Implement a Data Catalog

- Common Data Catalog Challenges

- The Future of Data Catalogs: AI and Automation

- Frequently Asked Questions

- Summary

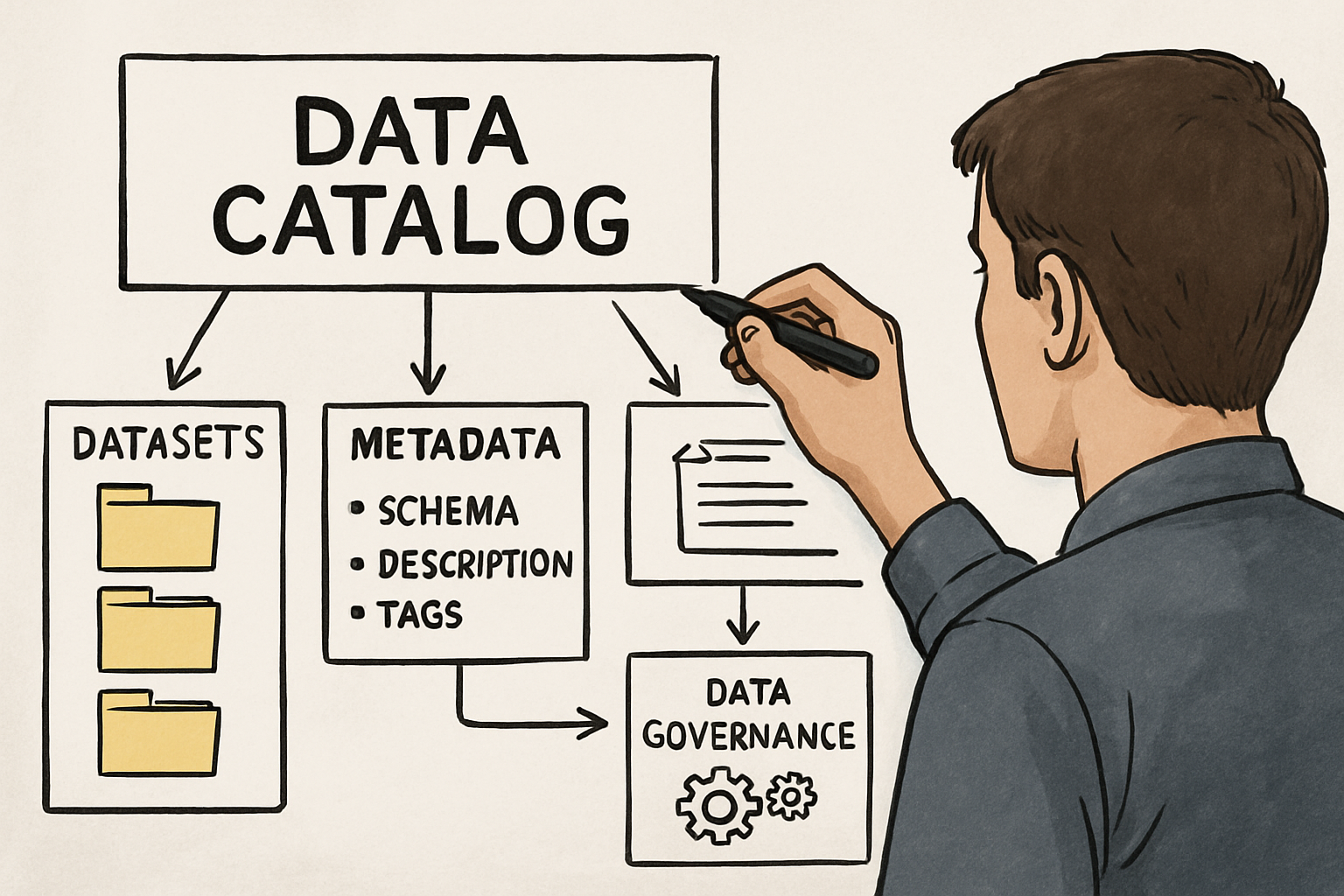

What Is a Data Catalog? (Definition)

At its core, a data catalog is a metadata management tool that creates and maintains an inventory of available data assets within an organization. It combines automated data discovery, human-contributed metadata, and search functionality to help users find, understand, and trust the data they need.

The term “data catalog” emerged in the 2010s as organizations grappled with data sprawl — the explosive growth of data across cloud platforms, on-premise databases, data warehouses, data lakes, SaaS applications, and streaming pipelines. Before data catalogs, data professionals spent an estimated 30–40% of their time simply trying to find the right data. A data catalog solves this discovery problem by creating a centralized, searchable metadata layer across all data systems.

The Library Analogy

Imagine a large university library with millions of books spread across dozens of buildings. Without a catalog, finding a specific book would require physically searching every shelf. With a catalog, you search by title, author, subject, or keyword and immediately see where the book lives, whether it’s available, and related titles that might also help you.

A data catalog works the same way for enterprise data. Instead of books, the assets are tables, reports, APIs, and data pipelines. Instead of authors and subjects, the metadata includes data owners, business definitions, technical attributes, update frequency, and quality scores.

What Does a Data Catalog Contain?

A well-built data catalog contains two broad categories of information:

Technical metadata is automatically harvested from source systems and includes table names, column names and data types, schema structures, row counts, null percentages, update timestamps, system of origin, and access permissions.

Business metadata is contributed by human data stewards and subject matter experts and includes plain-language definitions of what each dataset means, who owns it, which business processes use it, data quality expectations, related glossary terms, and usage guidelines.

Together, these layers of metadata transform raw technical information into actionable, trusted data knowledge that any user — technical or non-technical — can leverage.

Why Organizations Need a Data Catalog

The modern enterprise data landscape is more complex than ever. The average organization uses hundreds of data sources, runs multiple cloud platforms, maintains legacy on-premise systems, and generates petabytes of data annually. Without a data catalog, this complexity creates serious problems.

The Data Discovery Crisis

Research consistently shows that data professionals spend enormous amounts of time on data discovery rather than analysis. A 2023 survey by Atlan found that data engineers spend up to 40% of their time searching for data, understanding it, and confirming it’s appropriate for their use case. Analysts report similar frustrations — discovering a dataset exists only to find they don’t know what its columns mean, where it came from, or whether it’s accurate.

This discovery crisis has real business consequences: delayed decision-making, duplicate data collection efforts, inconsistent metrics across departments, and regulatory compliance risk when the wrong data gets used in reports.

The Trust Problem

Beyond discovery, organizations struggle with data trust. When multiple versions of a “customer count” or “monthly revenue” metric exist across different systems, business users lose confidence in data-driven decisions. A data catalog addresses the trust problem by surfacing data quality scores, lineage information showing where data originated and how it was transformed, and usage information showing which trusted reports and dashboards use each dataset.

Regulatory Compliance Requirements

Regulations like GDPR, CCPA, HIPAA, Basel III, and SOX require organizations to know exactly where sensitive data lives, who can access it, and how it flows through systems. A data catalog provides the audit trail and data lineage documentation that compliance teams need. Financial services firms, healthcare organizations, and government agencies in particular face strict requirements that a data catalog helps satisfy.

Enabling Self-Service Analytics

One of the biggest promises of modern data platforms is self-service analytics — enabling business users to answer their own data questions without waiting for IT. But self-service fails without good metadata. If analysts can’t find data, don’t understand what it means, or can’t trust its quality, self-service becomes self-service chaos. A data catalog is the enabling layer that makes self-service analytics actually work.

How a Data Catalog Works

Understanding how a data catalog works helps you evaluate tools and plan implementations. While implementations vary, most enterprise data catalogs follow a similar operational model.

Step 1: Automated Metadata Harvesting (Crawling)

The data catalog connects to your data sources — data warehouses like Snowflake and Databricks, databases like Oracle and SQL Server, cloud storage like S3 and Azure Data Lake, BI tools like Tableau and Power BI, and data pipelines — through native connectors or APIs. It then crawls these systems to extract technical metadata: table structures, column names and types, sample data, statistics, and schema relationships.

This crawling process runs on a schedule (daily, hourly, or near-real-time depending on the tool) so the catalog stays current as data systems evolve.

Step 2: Automated Classification and Profiling

Modern data catalogs apply machine learning and natural language processing to the harvested metadata to automatically classify data. The catalog identifies that a column named “ssn” likely contains Social Security Numbers, that a field with nine-digit patterns is probably a ZIP code, or that a table receiving updates every five minutes is probably a transactional system rather than a historical archive.

Data profiling goes a step further, calculating statistical summaries: min/max values, average lengths, null percentages, unique value counts, and value distributions. These statistics help users quickly assess data quality without running their own queries.

Step 3: Business Metadata Enrichment

Automated metadata discovery captures what the data looks like technically, but it can’t capture what the data means to the business. This is where data stewards and subject matter experts contribute business metadata through the catalog’s user interface.

Business enrichment includes writing plain-language definitions (“This table contains one record per active customer account as of the end of the prior business day”), tagging datasets with business domain classifications, linking columns to business glossary terms, rating data quality, documenting known issues, and assigning data ownership.

Step 4: Lineage Tracking

Data lineage — the ability to trace data from its origin through every transformation to its final destination — is one of the most valuable features of a data catalog. Lineage is typically captured through a combination of automated parsing of SQL and ETL code, runtime observation of data pipeline execution, and manual documentation.

Lineage serves multiple critical purposes: impact analysis (what downstream reports will break if I change this column?), root cause analysis (where did this bad data value come from?), and compliance documentation (can I prove to regulators where this financial figure originated?).

Step 5: Search and Discovery Interface

With metadata harvested, classified, enriched, and tracked, the catalog presents a search interface that lets users find data assets using natural language queries. Unlike database query tools that require knowing exact table names, a catalog lets users search conceptually: “customer transactions in the last 90 days” or “HIPAA-sensitive patient data in the data lake.”

Search results surface not just matching datasets but also metadata about each one: ownership, quality scores, usage frequency, related assets, and lineage context — giving users everything they need to evaluate whether a dataset fits their needs before they invest time querying it.

Key Components of a Data Catalog

While features vary between products, well-designed enterprise data catalogs share several core components.

Metadata Repository

The metadata repository is the catalog’s central database, storing all technical and business metadata harvested from or contributed about data assets. This repository must handle massive scale — a large enterprise may have millions of data assets — while supporting fast, flexible search and query.

Business Glossary

A business glossary is an integrated dictionary of business terms and their official definitions. The glossary serves as the authoritative vocabulary for the organization, resolving the ambiguity that arises when different departments use different words for the same concept (is a “customer” an active account, an account that has ever placed an order, or a person who has logged into the website?).

Data catalog glossaries link business terms to the specific data assets that represent them, creating a bridge between business language and technical data. This is a cornerstone of effective data governance — you cannot govern data you cannot consistently describe.

Data Lineage Engine

The lineage engine tracks how data flows from source systems through transformations, pipelines, and queries to downstream consumers like reports and dashboards. Lineage is typically visualized as a directed acyclic graph (DAG) showing upstream sources and downstream dependencies for any given dataset.

Advanced lineage engines track lineage at the column level, not just the table level — essential for understanding exactly how a calculated metric like “adjusted gross revenue” is derived from raw transaction data.

Data Quality Profiling

Quality profiling components automatically measure and score data quality across multiple dimensions: completeness (are required fields populated?), accuracy (do values fall within expected ranges?), consistency (does the same customer ID mean the same thing across systems?), timeliness (was the data updated as recently as expected?), and uniqueness (are there unexpected duplicates?).

Quality scores are displayed prominently in search results so users can immediately see whether a dataset is trusted before using it.

Collaboration and Workflow Tools

Modern data catalogs are social platforms as much as technical tools. They include features for users to ask questions about datasets, flag data quality issues, review and approve metadata changes, and track data requests. Some catalogs integrate directly with Slack, Microsoft Teams, and Jira to embed data collaboration into existing team workflows.

Access Control and Governance Policies

Enterprise data catalogs integrate with identity management systems to display access permissions for each dataset and, in some cases, to enforce access policies directly. Users can see not just that a dataset exists but whether they can access it and who to contact to request access.

Governance-aware catalogs can automatically flag datasets containing sensitive data classifications (PII, PHI, financial data) and display the applicable governance policies, making compliance a built-in part of the data discovery experience rather than an afterthought.

Data Catalog vs. Data Dictionary vs. Data Inventory

These three terms are often used interchangeably but represent distinct concepts. Understanding the differences is important for data governance planning.

Data Dictionary

A data dictionary is a document or repository that defines the technical attributes of data elements: column names, data types, allowed values, constraints, and relationships between tables. Data dictionaries are typically narrow in scope (focused on a single database or application), maintained manually, and contain technical rather than business definitions.

A data dictionary answers: “What are the technical specifications of this database?” It is a technical reference document, not a discovery or governance tool.

Data Inventory

A data inventory is a list of the data assets an organization holds, typically including the type of data, its location, who owns it, and how long it’s retained. Data inventories are often compliance-driven — GDPR requires organizations to maintain a record of processing activities, which is essentially a data inventory.

A data inventory answers: “What data do we have and where is it?” It is broader than a data dictionary but shallower — it catalogs what exists without explaining what it means or how it’s used.

Data Catalog

A data catalog combines and extends both concepts. It maintains technical metadata like a dictionary, inventories all data assets like an inventory, and adds business context, lineage, quality scoring, collaboration, and search. A data catalog is a living, dynamic, searchable system — not a static document.

A data catalog answers: “What data do we have, where is it, what does it mean, how trustworthy is it, how does it flow through our systems, and who can help me use it?”

The key distinction: A data dictionary is a technical specification document. A data inventory is a compliance record. A data catalog is an active data knowledge platform that drives data discovery, trust, and governance across the enterprise.

Types of Data Catalogs

Not all data catalogs are alike. Different architectural approaches suit different organizational needs.

Enterprise Data Catalogs

Enterprise data catalogs are comprehensive, full-featured platforms designed for large organizations with complex, multi-cloud data environments. They support thousands of data assets across dozens of connected systems, provide robust governance workflows, and offer enterprise-grade security and access control. Collibra, Alation, and Informatica Axon are leading examples.

Cloud-Native Data Catalogs

Cloud-native catalogs are built specifically for cloud data platforms and tight integration with cloud-native data services. Microsoft Purview for Azure environments, AWS Glue Data Catalog for AWS, and Google Dataplex for GCP are examples. These tools offer deep native integration but may be less effective in multi-cloud or hybrid environments.

Open-Source Data Catalogs

Open-source options like Apache Atlas, Amundsen (developed by Lyft), DataHub (developed by LinkedIn), and OpenMetadata offer significant flexibility and no licensing costs, but require more technical investment to deploy and maintain. They are popular in data-engineering-heavy organizations with the technical resources to manage them.

Embedded Data Catalogs

Some modern data platforms embed catalog capabilities directly into the data warehouse or lakehouse layer. Snowflake, Databricks Unity Catalog, and dbt’s semantic layer all provide native metadata and discovery features. These embedded catalogs offer tight integration and zero additional licensing but may not match the depth of dedicated enterprise catalog tools.

Benefits of a Data Catalog

Organizations that successfully implement data catalogs consistently report measurable improvements across several dimensions.

Faster Data Discovery

The most immediate and universally cited benefit is dramatic reduction in the time data professionals spend finding data. Organizations report reductions from days or weeks of searching to minutes using catalog search. The Atlan-referenced retail case study in our AI and data governance guide showed catalog usage jumping from 12% to 61% after implementation, with IT data request tickets dropping 73%.

Improved Data Trust

When datasets carry quality scores, lineage information, and usage statistics (showing that a trusted dashboard uses this dataset), analysts feel confident using them. Data-driven decisions improve in quality because they’re based on vetted, understood data rather than whichever spreadsheet someone happened to have on hand.

Enhanced Regulatory Compliance

Regulators increasingly expect organizations to demonstrate not just that they comply with data privacy regulations, but that they can prove it. A data catalog creates the documentation trail — who owns each dataset, what governance policies apply, where sensitive data flows — that compliance audits require. GDPR Article 30 records of processing activities, HIPAA minimum necessary requirements, and CCPA consumer rights requests all benefit from the visibility a data catalog provides.

Reduced Redundant Data Collection

Without a catalog, teams frequently collect the same data independently because they don’t know it already exists elsewhere. A searchable catalog surfaces existing datasets before teams invest in building new pipelines, reducing engineering costs and the inconsistencies that arise from having multiple versions of the same data.

Faster Onboarding for New Data Professionals

New data engineers and analysts often spend their first weeks simply learning what data exists and what it means. A well-maintained data catalog dramatically shortens this ramp-up time by providing documented, searchable metadata — turning weeks of tribal knowledge acquisition into days of catalog exploration.

Enabling True Data Democratization

Business users without SQL skills can search for and understand data assets, read their definitions, see quality scores, and request access — all without engineering support. This democratization of data access is only possible when the catalog provides enough context for non-technical users to evaluate data independently.

Data Catalog Features to Look For

When evaluating data catalog tools, these are the capabilities that separate enterprise-grade solutions from basic metadata repositories.

Automated Connectivity and Crawling

The catalog should connect natively to the data sources in your environment — cloud data warehouses, relational databases, data lakes, BI tools, streaming platforms, and SaaS applications — and automatically harvest metadata without requiring manual entry. The breadth of connectors is critical: a catalog that can’t reach your systems is useless.

Active Metadata and Usage Intelligence

Beyond passive metadata storage, leading catalogs provide active metadata — real-time intelligence about how data is being used. Which users queried this table in the last 30 days? Which dashboards depend on this dataset? What queries are most commonly run against this column? Usage intelligence helps users identify popular, trusted datasets and helps governance teams prioritize their curation efforts.

Column-Level Data Lineage

Table-level lineage is a baseline requirement. Column-level lineage — showing exactly how each field in a downstream report is derived from specific columns in source tables — is the gold standard. Without column-level lineage, root cause analysis of data quality issues and impact analysis of schema changes remain painful and imprecise.

Machine Learning-Powered Classification

Modern catalogs use machine learning to automatically classify sensitive data (PII, PHI, financial), suggest business metadata based on patterns in technical metadata, identify similar or related datasets, and detect anomalies in data profiles. AI-assisted classification dramatically reduces the manual effort required to maintain a healthy catalog. See our deep dive on AI transforming data governance for more on how machine learning is changing catalog capabilities.

Business Glossary Integration

A first-class glossary that links business terms to physical data assets is essential for organizations serious about data governance. The glossary should support approval workflows (so term definitions are reviewed and certified), term hierarchies (parent-child relationships between concepts), and automatic linking (the catalog suggests which assets match a given term based on naming patterns).

Collaboration and Social Features

Data catalogs are only as good as the metadata they contain, and that metadata quality depends on human contributions. Look for features that make contribution easy and rewarding: inline commenting on datasets, crowdsourced ratings, question-and-answer threads attached to assets, and gamification elements that recognize active contributors. Catalogs that feel collaborative drive higher adoption rates than those that feel like bureaucratic documentation tools.

Governance Workflow Integration

If you’re implementing a data catalog as part of a broader data governance program, the catalog should support governance workflows: data access request routing, data quality issue tracking, policy acknowledgment, and stewardship task assignment. Leading enterprise platforms like Collibra provide complete governance workflow engines integrated directly with the catalog.

API and Integration Ecosystem

Your data catalog should integrate with your existing data stack rather than replacing it. Look for robust REST APIs that allow other tools to read and write catalog metadata, native integrations with popular data engineering tools (dbt, Airflow, Databricks, Spark), and marketplace ecosystems that extend catalog functionality.

Top Data Catalog Tools in 2026

The data catalog market has matured significantly, with clear leaders in the enterprise segment and strong challengers in the cloud-native and open-source spaces.

Collibra

Collibra is widely regarded as the market leader for enterprise data governance and cataloging. It provides a comprehensive platform combining data catalog, business glossary, data lineage, governance workflows, and policy management. Collibra’s strength is its governance depth — no other platform matches its workflow engine and policy management capabilities. It integrates with virtually every enterprise data system and has a strong partner ecosystem.

Best for: Large enterprises with complex governance requirements, regulated industries (financial services, healthcare), organizations already invested in formal data governance programs.

Alation

Alation pioneered the “active metadata” approach, using behavioral intelligence — analyzing how users actually search for and use data — to surface the most relevant and trusted datasets. Its collaborative features are among the strongest in the market, and its natural language search capabilities make it accessible to non-technical users. Alation has strong adoption in analytics-heavy organizations.

Best for: Analytics-driven organizations prioritizing data discovery and self-service, organizations where cultural adoption is as important as governance depth.

Microsoft Purview

Microsoft Purview (formerly Azure Purview) has rapidly matured into a serious enterprise catalog option, particularly for organizations heavily invested in the Microsoft Azure ecosystem. Native integration with Azure Data Factory, Azure Synapse, Microsoft Fabric, and Office 365 is a significant advantage. Purview also provides strong data loss prevention (DLP) and compliance features that align well with Microsoft’s security and compliance portfolio.

Best for: Microsoft-centric organizations, Azure-first cloud strategies, organizations that want catalog and data compliance in a single Microsoft-licensed platform.

Informatica Intelligent Data Management Cloud (IDMC)

Informatica’s catalog offering is part of its broader IDMC platform, providing deep integration with Informatica’s data quality, MDM, and integration tools. The CLAIRE AI engine provides sophisticated automated classification and metadata suggestion. For organizations already using Informatica for ETL and data quality, the catalog is a natural extension.

Best for: Organizations with existing Informatica investments, complex MDM and data quality requirements alongside cataloging needs.

Atlan

Atlan has emerged as a strong challenger, particularly popular among modern data teams using dbt, Airflow, and cloud-native data stacks. Its Slack-first collaboration model and deep integration with the modern data stack resonate with data engineering teams. Atlan’s active metadata capabilities and developer-friendly APIs make it a natural fit for organizations with strong data engineering practices.

Best for: Modern data teams, dbt-heavy environments, organizations prioritizing collaboration and developer experience.

DataHub (Open Source)

LinkedIn’s open-source DataHub has become one of the most popular catalog options for organizations with strong technical teams. It provides solid automated metadata ingestion, lineage, and search capabilities without licensing costs. The active open-source community contributes connectors and features regularly.

Best for: Organizations with strong data engineering teams, cost-sensitive environments, organizations that want to build custom catalog integrations.

AWS Glue Data Catalog

For AWS-native organizations, the Glue Data Catalog provides integrated metadata management for data in S3, Redshift, and other AWS services. It’s less feature-rich than dedicated enterprise catalogs but provides frictionless integration with the AWS ecosystem and zero additional licensing costs.

Best for: AWS-first organizations, teams already using Glue for ETL, organizations wanting minimal additional tooling.

How a Data Catalog Supports Data Governance

A data catalog and a data governance program are deeply complementary. The catalog is often described as the “operational system” for data governance — it’s where governance policies are documented, where data stewardship happens in practice, and where governance outcomes are measured.

Enabling Data Stewardship

Data stewards are responsible for the quality and proper use of specific data domains. Without a catalog, stewardship is largely theoretical — stewards may define policies in documents, but those policies have no direct connection to the actual data they govern. With a catalog, stewards work directly on data assets: writing definitions, assigning quality ratings, documenting known issues, responding to user questions, and approving access requests. The catalog transforms data stewardship from a documentation exercise into an active, data-connected role.

Implementing Data Policies

Data governance programs define policies — rules about how data should be collected, stored, used, and protected. A catalog operationalizes those policies by attaching them to specific data assets. A HIPAA minimum necessary policy becomes an annotation on specific PHI tables. A retention policy becomes a documented attribute of each dataset. A data quality standard becomes a profiling rule that runs against specific columns.

This connection between policy and data asset is what separates governance that exists on paper from governance that actually influences behavior. As we explored in our guide to data governance implementation scenarios, the most successful programs embed governance into the tools data professionals use every day — and the data catalog is the primary such tool.

Supporting Data Quality Programs

Data catalogs provide the visibility needed to prioritize and track data quality improvement. Quality profiling surfaces which datasets have quality issues, lineage shows where bad data originated, and collaboration features provide a channel for users to report quality problems they discover in practice. Over time, the catalog becomes the single source of truth for data quality status across the enterprise.

Facilitating Compliance and Audit

When regulators ask for evidence of compliance — “show us where all PII data lives and who can access it” — the data catalog is where you find the answers. Classifications applied during automated crawling, business metadata contributed by stewards, access control integrations, and lineage documentation together provide a comprehensive compliance evidence package that would take weeks to assemble manually without a catalog.

Aligning Business and IT

One of the most persistent challenges in data governance vs. data management programs is the disconnect between business stakeholders who define what data should mean and IT teams who manage how it’s stored and processed. The data catalog bridges this divide by providing a shared platform where business definitions live alongside technical specifications. When a business analyst searches for “customer” in the catalog, they see both the official business definition (contributed by a business steward) and the technical location (contributed by an engineer) — in the same interface.

Data Catalog Use Cases by Industry

Data catalogs find application across virtually every industry, but certain sectors have particularly pressing needs.

Financial Services

Financial institutions face a convergence of factors that make data catalogs essential: massive data volumes across legacy and modern systems, strict regulatory requirements (Basel III, BCBS 239, CCAR, SOX, GDPR), and growing pressure to monetize data through analytics and AI. Data catalogs enable banks and insurers to comply with regulatory data lineage requirements, support the risk data aggregation standards of BCBS 239, and accelerate data-driven product development.

Organizations with the Wells Fargo profile — large multi-system environments with complex regulatory reporting requirements — particularly benefit from enterprise data catalogs that track lineage from raw transaction data through to regulatory reports.

Healthcare

Healthcare organizations must balance data accessibility (enabling better patient outcomes through analytics) with strict privacy requirements (HIPAA, state privacy laws, patient consent frameworks). Data catalogs help healthcare organizations identify where PHI lives across all systems, enforce minimum necessary access principles, support population health analytics, and document the data lineage required for FDA submissions and clinical trial reporting.

Government and Public Sector

Government agencies increasingly operate open data programs that require them to publish data assets for public use. Data catalogs provide the metadata foundation for these programs, helping agencies document what data they hold, manage access controls between public and restricted datasets, and comply with data management requirements from OMB, FISMA, and data governance mandates. Organizations with Department of Veterans Affairs experience will recognize the challenge of managing patient and benefits data across dozens of legacy systems — a challenge that data catalogs directly address.

Manufacturing

Manufacturers collect vast operational data from IoT sensors, manufacturing execution systems, ERP platforms, and quality management systems. Understanding what operational data exists, how it relates to product quality outcomes, and how to combine it with supply chain and customer data for analytics is a significant challenge. Data catalogs help manufacturers build the metadata foundation for Industry 4.0 initiatives, product quality analytics, and supply chain optimization.

How to Implement a Data Catalog

A successful data catalog implementation is as much a change management challenge as a technical one. Many organizations have purchased catalog tools that went unused because adoption was treated as optional. Here is a practical implementation approach.

Phase 1: Foundation (Weeks 1–8)

Start by selecting your target data sources. Don’t try to catalog everything at once — choose two or three high-value, high-visibility data domains that have clear business champions. Common starting points are customer data, financial reporting data, or the data powering your most critical dashboards.

Connect the catalog to these source systems and let automated crawling run. Review the initial metadata harvest and identify gaps — what automated harvesting captured versus what manual enrichment is needed. Establish a small group of inaugural data stewards from the business and train them on contributing metadata through the catalog interface.

Launch an internal “data catalog awareness” campaign, particularly targeting analysts who struggle with data discovery today. Frame the catalog as solving their pain point, not as a compliance obligation.

Phase 2: Expansion (Months 3–6)

With initial domains cataloged and stewards established, expand to additional data sources. Prioritize based on where data discovery pain is greatest and where governance requirements are most pressing. Begin building the business glossary, starting with the most frequently disputed or ambiguous terms across the organization.

Integrate the catalog with your self-service analytics platform (Tableau, Power BI, Looker) so users encounter catalog links naturally as they work. Measure and publicize early wins: reduction in “where is this data?” IT tickets, reduction in time to find data for new analyses.

Phase 3: Governance Integration (Months 7–12)

By this phase, the catalog should be established enough to begin integrating it with formal governance workflows. Connect catalog data quality scores to your data quality management program. Establish access request workflows through the catalog. Integrate catalog lineage with your data quality issue resolution process.

Begin using catalog adoption metrics (how many users search, how many assets are viewed, how many metadata contributions are made) as governance KPIs. A catalog that isn’t used isn’t providing value — measure and drive adoption actively.

Critical Success Factors

The most important factor in data catalog success is executive sponsorship. Without visible commitment from leadership — and ideally, a Chief Data Officer who treats the catalog as a strategic platform — catalog initiatives tend to stall after initial deployment. Teams need to see that cataloging data is valued, not just another documentation burden.

Data stewardship must be treated as a real role with time allocation, not a side responsibility added to someone’s existing job. Stewards who are asked to enrich metadata on top of full workloads will deprioritize it. Organizations that dedicate stewardship time and recognize stewardship contributions achieve far better catalog quality.

Common Data Catalog Challenges

Understanding common failure modes helps organizations avoid them.

The Empty Catalog Problem

Many organizations deploy a data catalog only to find that metadata quality remains low because no one contributes to it. The tool exists but contains little useful information beyond the raw technical metadata harvested automatically. Solving the empty catalog problem requires treating metadata contribution as a workflow-integrated activity, not a separate documentation task.

Governance Without Adoption

A catalog that governance teams use but data practitioners don’t is not serving its purpose. Governance teams can maintain beautiful metadata in the catalog, but if analysts aren’t searching it before building reports, the catalog isn’t influencing data quality or decision-making. Adoption by data consumers — not just data stewards — is the true measure of catalog success.

Keeping Metadata Current

Data systems evolve constantly: schemas change, new datasets appear, old ones are deprecated. A catalog with stale metadata is worse than no catalog at all — it gives users false confidence in information that no longer reflects reality. Automated crawling handles technical metadata freshness, but business metadata requires active stewardship to remain current. Organizations must build metadata maintenance into standard data management workflows.

Scope Creep

Ambitious catalog implementations that try to catalog everything at once often fail to deliver value quickly enough to maintain organizational enthusiasm. Start focused, deliver visible value early, and expand systematically. A catalog with excellent metadata coverage for 20% of your data assets is far more valuable than a catalog with thin coverage across 100% of assets.

Tool vs. Program Confusion

A data catalog is a tool that enables a data governance program — it is not a data governance program by itself. Organizations that buy a catalog expecting it to automatically solve their data governance problems are consistently disappointed. The tool provides the platform; the program provides the people, processes, and policies that make the platform valuable.

The Future of Data Catalogs: AI and Automation

The data catalog market is undergoing its most significant transformation since the concept was invented, driven by large language models, generative AI, and advances in automated machine learning.

AI-Powered Metadata Generation

Large language models are beginning to automate the most labor-intensive part of catalog maintenance: writing business metadata. By analyzing table names, column names, data samples, and existing documentation, AI systems can generate draft business definitions, suggest related glossary terms, and identify likely data owners — dramatically reducing the manual effort required to build a high-quality catalog. As we detailed in our article on AI transforming data governance, AI metadata generation is moving from experimental to production-ready in leading platforms.

Conversational Data Discovery

The next generation of data catalogs will enable conversational search. Instead of typing keywords and browsing results, users will ask natural language questions: “Show me all customer transaction data from the last 90 days that’s used in our monthly revenue reports.” The catalog’s AI layer will interpret the intent, search across metadata, surface relevant results, and explain why each result is a good match.

This conversational approach dramatically lowers the barrier to catalog use for non-technical users, turning data discovery from a skill that requires training into an intuitive process accessible to any business user.

Proactive Data Intelligence

Future catalogs will shift from reactive (users search for data) to proactive (the catalog surfaces relevant data to users before they ask). By analyzing usage patterns, knowing what projects users are working on, and understanding business context, AI-powered catalogs will recommend datasets, flag quality issues before they affect downstream users, and suggest governance policies for new data assets the moment they’re discovered.

AI Governance Integration

As organizations deploy more AI and machine learning models, data catalogs are expanding to catalog AI assets alongside traditional data assets. Model cards documenting training data, performance characteristics, known biases, and approved use cases are being integrated into catalog platforms. The data catalog is becoming the metadata layer for the full AI lifecycle, not just for traditional data.

Frequently Asked Questions

What is a data catalog in simple terms? A data catalog is a searchable directory of all the data your organization has, where it lives, what it means, and whether it can be trusted. It’s like a library catalog but for corporate data instead of books.

What is the difference between a data catalog and a data warehouse? A data warehouse stores the actual data for analytics. A data catalog stores metadata about data — descriptions, definitions, quality scores, and lineage — across all your data sources, including the data warehouse. The catalog doesn’t replace the warehouse; it provides the discovery and context layer that makes the warehouse easier to use.

What is the difference between a data catalog and a data dictionary? A data dictionary defines the technical specifications of a specific database or application. A data catalog is much broader — it covers all data assets across the organization, includes business context and lineage, provides search and discovery, and is actively maintained by both technical teams and business stewards.

Do small organizations need a data catalog? Organizations with fewer than a few hundred data assets can often manage with well-maintained data dictionaries and documentation. As data volume, complexity, and the number of data consumers grow, a dedicated catalog tool becomes increasingly valuable. Most organizations find catalog investment worthwhile once they reach 10–15 active data sources and a dozen or more data consumers.

How much does a data catalog cost? Enterprise data catalog pricing varies widely. Open-source options like DataHub and Amundsen are free but require engineering investment to deploy and maintain. Cloud-native catalogs from AWS, Azure, and GCP are included or low-cost within their respective ecosystems. Commercial enterprise platforms like Collibra and Alation typically range from $100,000 to $500,000+ annually for large enterprises, depending on the number of data assets, users, and connectors.

How long does it take to implement a data catalog? Basic implementation — connecting the tool to primary data sources and enabling search — can be accomplished in two to four weeks. Building meaningful metadata coverage, establishing stewardship workflows, and achieving broad user adoption typically takes six to eighteen months. The technical deployment is the easy part; the organizational change management is the long-term investment.

What is an active metadata catalog? Active metadata catalogs (pioneered by Alation and now embraced by most leading platforms) go beyond passively storing metadata to actively analyzing usage patterns, automatically suggesting relevant datasets, and triggering governance actions based on metadata events. Active metadata turns the catalog from a static reference into a dynamic intelligence platform.

How does a data catalog relate to a data mesh architecture? In a data mesh architecture, individual domain teams own and manage their data as products. A data catalog provides the federated discovery layer that allows consumers to find data products across domains without knowing the internal structure of each domain’s infrastructure. The catalog in a data mesh context is the universal search interface for a distributed data ecosystem.

Which data catalog tool is best? There is no single “best” data catalog — the right choice depends on your data environment, governance maturity, technical team capabilities, and budget. Collibra is the strongest for governance-heavy enterprises. Alation excels for analytics-driven organizations. Microsoft Purview is optimal for Azure-centric environments. DataHub and Amundsen are best for technically strong teams with limited budget. Evaluating two or three tools against your specific requirements with a proof-of-concept is the recommended approach.

Is a data catalog part of data governance? Yes — a data catalog is one of the primary tools that operationalizes data governance. It’s where governance policies are documented, where data stewardship happens, where data quality is tracked, and where compliance documentation is maintained. A data governance program without a catalog is largely theoretical; a catalog without a governance program lacks the processes and accountability to keep it useful. The two are most effective together. Learn more in our comprehensive guide to what is data governance.

Summary

A data catalog is far more than a technical inventory — it’s the foundation of a data-driven, trustworthy, and compliant enterprise data environment. By combining automated metadata discovery, human-contributed business context, data lineage tracking, quality profiling, and collaborative search, data catalogs solve the data discovery and trust problems that hold organizations back from realizing the full value of their data assets.

The most successful data catalog implementations treat the tool as the operational system for their data governance program — the place where policies connect to practice, where stewards do their work, and where data consumers find and evaluate data before using it. Organizations that invest in both the tool and the governance program around it consistently achieve measurable improvements: faster analytics, better compliance posture, reduced data engineering redundancy, and higher confidence in data-driven decisions.

Whether you’re evaluating enterprise platforms like Collibra and Alation, exploring cloud-native options from AWS, Azure, or GCP, or building on open-source foundations like DataHub, the critical success factor is the same: connect the catalog to real data governance workflows, invest in data stewardship, and treat adoption as an ongoing initiative rather than a one-time deployment.

Ready to go deeper? Explore our related guides:

- What Is Data Governance? — the foundational framework that a data catalog operationalizes

- Data Governance vs. Data Management — understanding the full ecosystem

- How AI Is Transforming Data Governance — the future of intelligent data catalogs

Published: February 2026 | Author: Clinton (The Data Governor) | Category: Data Governance Fundamentals

Clinton is a Senior Data Governance Engineer with hands-on experience implementing enterprise data catalog programs at Wells Fargo, the Department of Veterans Affairs, and Nestle Purina using platforms including Collibra and Profisee.

✅ AIOSEO SETTINGS — PASTE DIRECTLY INTO WORDPRESS

Focus Keyphrase: data catalog

SEO Title: What Is a Data Catalog? Complete Guide for 2026 | The Data Governor

Meta Description (158 chars): A data catalog is a searchable inventory of your organization's data assets. Learn what it does, how it works, and why it's essential for data governance.

URL Slug: what-is-a-data-catalog

Canonical URL: https://thedatagovernor.info/what-is-a-data-catalog/

Additional Keywords (paste into AIOSEO keyphrases field): data catalog definition, what does a data catalog do, data catalog examples, data catalog vs data dictionary, enterprise data catalog, data catalog tools, data catalog benefits

Tags: data catalog, data governance, metadata management, data discovery, data lineage, data stewardship, enterprise data management, data dictionary, data quality, Collibra, Alation, Microsoft Purview, data catalog tools

Content Category: Data Governance Fundamentals

Publish Date: February 2026

Schema Type: Article (AIOSEO auto-generates — verify datePublished and dateModified in source)

Internal Links to Add (minimum 8):

- What is Data Governance →

/what-is-data-governance/ - Data Governance vs. Data Management →

/data-governance-vs-data-management/ - Data Governance Implementation →

/which-scenario-best-illustrates-the-implementation-of-data-governance/ - AI Transforming Data Governance →

/ai-transforming-data-governance-2026/ - What is a Data Steward → (future article)

- Data Governance Framework → (future article)

- Data Governance Roles → (future article)

Reading Time Estimate: ~28 minutes Word Count Target: 7,000–8,000 words

📋 FEATURED SNIPPET OPTIMIZATION

Target snippet format: Paragraph (Google, ChatGPT, Perplexity, Claude)

Optimized definition paragraph (place immediately after H1):

A data catalog is a searchable, organized inventory of an organization’s data assets — including databases, files, reports, APIs, and streaming data — along with the metadata that describes each asset’s meaning, origin, quality, and usage. Think of it as a library card system for your data: just as a library catalog tells you what books exist, where they’re shelved, and what they contain, a data catalog tells analysts and data engineers exactly what data your organization has, where it lives, how trustworthy it is, and how to access it. Modern enterprise data catalogs go beyond simple inventory to provide data lineage visualization, automated metadata discovery, collaboration features, and governance policy enforcement.

What Is a Data Catalog? The Complete Guide for 2026

A data catalog is a searchable, organized inventory of an organization’s data assets — including databases, files, reports, APIs, and streaming data — along with the metadata that describes each asset’s meaning, origin, quality, and usage.

Think of it as a library card system for your enterprise data. Just as a library catalog tells you what books exist, where they’re shelved, and what they’re about, a data catalog tells your analysts, data engineers, and business users exactly what data your organization has, where it lives, how trustworthy it is, and how to access it.

Modern enterprise data catalogs go far beyond simple inventory. They provide data lineage visualization, automated metadata discovery, collaboration features, data quality scoring, and governance policy enforcement — making them one of the most critical tools in a modern data governance program.

In this comprehensive guide, you’ll learn everything you need to know about data catalogs: what they are, how they work, why organizations need them, what features to look for, how they differ from data dictionaries and data inventories, and which tools lead the market in 2026.

Table of Contents

- What Is a Data Catalog? (Definition)

- Why Organizations Need a Data Catalog

- How a Data Catalog Works

- Key Components of a Data Catalog

- Data Catalog vs. Data Dictionary vs. Data Inventory

- Types of Data Catalogs

- Benefits of a Data Catalog

- Data Catalog Features to Look For

- Top Data Catalog Tools in 2026

- How a Data Catalog Supports Data Governance

- Data Catalog Use Cases by Industry

- How to Implement a Data Catalog

- Common Data Catalog Challenges

- The Future of Data Catalogs: AI and Automation

- Frequently Asked Questions

What Is a Data Catalog? (Definition)

At its core, a data catalog is a metadata management tool that creates and maintains an inventory of available data assets within an organization. It combines automated data discovery, human-contributed metadata, and search functionality to help users find, understand, and trust the data they need.

The term “data catalog” emerged in the 2010s as organizations grappled with data sprawl — the explosive growth of data across cloud platforms, on-premise databases, data warehouses, data lakes, SaaS applications, and streaming pipelines. Before data catalogs, data professionals spent an estimated 30–40% of their time simply trying to find the right data. A data catalog solves this discovery problem by creating a centralized, searchable metadata layer across all data systems.

The Library Analogy

Imagine a large university library with millions of books spread across dozens of buildings. Without a catalog, finding a specific book would require physically searching every shelf. With a catalog, you search by title, author, subject, or keyword and immediately see where the book lives, whether it’s available, and related titles that might also help you.

A data catalog works the same way for enterprise data. Instead of books, the assets are tables, reports, APIs, and data pipelines. Instead of authors and subjects, the metadata includes data owners, business definitions, technical attributes, update frequency, and quality scores.

What Does a Data Catalog Contain?

A well-built data catalog contains two broad categories of information:

Technical metadata is automatically harvested from source systems and includes table names, column names and data types, schema structures, row counts, null percentages, update timestamps, system of origin, and access permissions.

Business metadata is contributed by human data stewards and subject matter experts and includes plain-language definitions of what each dataset means, who owns it, which business processes use it, data quality expectations, related glossary terms, and usage guidelines.

Together, these layers of metadata transform raw technical information into actionable, trusted data knowledge that any user — technical or non-technical — can leverage.

Why Organizations Need a Data Catalog

The modern enterprise data landscape is more complex than ever. The average organization uses hundreds of data sources, runs multiple cloud platforms, maintains legacy on-premise systems, and generates petabytes of data annually. Without a data catalog, this complexity creates serious problems.

The Data Discovery Crisis

Research consistently shows that data professionals spend enormous amounts of time on data discovery rather than analysis. A 2023 survey by Atlan found that data engineers spend up to 40% of their time searching for data, understanding it, and confirming it’s appropriate for their use case. Analysts report similar frustrations — discovering a dataset exists only to find they don’t know what its columns mean, where it came from, or whether it’s accurate.

This discovery crisis has real business consequences: delayed decision-making, duplicate data collection efforts, inconsistent metrics across departments, and regulatory compliance risk when the wrong data gets used in reports.

The Trust Problem

Beyond discovery, organizations struggle with data trust. When multiple versions of a “customer count” or “monthly revenue” metric exist across different systems, business users lose confidence in data-driven decisions. A data catalog addresses the trust problem by surfacing data quality scores, lineage information showing where data originated and how it was transformed, and usage information showing which trusted reports and dashboards use each dataset.

Regulatory Compliance Requirements

Regulations like GDPR, CCPA, HIPAA, Basel III, and SOX require organizations to know exactly where sensitive data lives, who can access it, and how it flows through systems. A data catalog provides the audit trail and data lineage documentation that compliance teams need. Financial services firms, healthcare organizations, and government agencies in particular face strict requirements that a data catalog helps satisfy.

Enabling Self-Service Analytics

One of the biggest promises of modern data platforms is self-service analytics — enabling business users to answer their own data questions without waiting for IT. But self-service fails without good metadata. If analysts can’t find data, don’t understand what it means, or can’t trust its quality, self-service becomes self-service chaos. A data catalog is the enabling layer that makes self-service analytics actually work.

How a Data Catalog Works

Understanding how a data catalog works helps you evaluate tools and plan implementations. While implementations vary, most enterprise data catalogs follow a similar operational model.

Step 1: Automated Metadata Harvesting (Crawling)

The data catalog connects to your data sources — data warehouses like Snowflake and Databricks, databases like Oracle and SQL Server, cloud storage like S3 and Azure Data Lake, BI tools like Tableau and Power BI, and data pipelines — through native connectors or APIs. It then crawls these systems to extract technical metadata: table structures, column names and types, sample data, statistics, and schema relationships.

This crawling process runs on a schedule (daily, hourly, or near-real-time depending on the tool) so the catalog stays current as data systems evolve.

Step 2: Automated Classification and Profiling

Modern data catalogs apply machine learning and natural language processing to the harvested metadata to automatically classify data. The catalog identifies that a column named “ssn” likely contains Social Security Numbers, that a field with nine-digit patterns is probably a ZIP code, or that a table receiving updates every five minutes is probably a transactional system rather than a historical archive.

Data profiling goes a step further, calculating statistical summaries: min/max values, average lengths, null percentages, unique value counts, and value distributions. These statistics help users quickly assess data quality without running their own queries.

Step 3: Business Metadata Enrichment

Automated metadata discovery captures what the data looks like technically, but it can’t capture what the data means to the business. This is where data stewards and subject matter experts contribute business metadata through the catalog’s user interface.

Business enrichment includes writing plain-language definitions (“This table contains one record per active customer account as of the end of the prior business day”), tagging datasets with business domain classifications, linking columns to business glossary terms, rating data quality, documenting known issues, and assigning data ownership.

Step 4: Lineage Tracking

Data lineage — the ability to trace data from its origin through every transformation to its final destination — is one of the most valuable features of a data catalog. Lineage is typically captured through a combination of automated parsing of SQL and ETL code, runtime observation of data pipeline execution, and manual documentation.

Lineage serves multiple critical purposes: impact analysis (what downstream reports will break if I change this column?), root cause analysis (where did this bad data value come from?), and compliance documentation (can I prove to regulators where this financial figure originated?).

Step 5: Search and Discovery Interface

With metadata harvested, classified, enriched, and tracked, the catalog presents a search interface that lets users find data assets using natural language queries. Unlike database query tools that require knowing exact table names, a catalog lets users search conceptually: “customer transactions in the last 90 days” or “HIPAA-sensitive patient data in the data lake.”

Search results surface not just matching datasets but also metadata about each one: ownership, quality scores, usage frequency, related assets, and lineage context — giving users everything they need to evaluate whether a dataset fits their needs before they invest time querying it.

Key Components of a Data Catalog

While features vary between products, well-designed enterprise data catalogs share several core components.

Metadata Repository

The metadata repository is the catalog’s central database, storing all technical and business metadata harvested from or contributed about data assets. This repository must handle massive scale — a large enterprise may have millions of data assets — while supporting fast, flexible search and query.

Business Glossary

A business glossary is an integrated dictionary of business terms and their official definitions. The glossary serves as the authoritative vocabulary for the organization, resolving the ambiguity that arises when different departments use different words for the same concept (is a “customer” an active account, an account that has ever placed an order, or a person who has logged into the website?).

Data catalog glossaries link business terms to the specific data assets that represent them, creating a bridge between business language and technical data. This is a cornerstone of effective data governance — you cannot govern data you cannot consistently describe.

Data Lineage Engine

The lineage engine tracks how data flows from source systems through transformations, pipelines, and queries to downstream consumers like reports and dashboards. Lineage is typically visualized as a directed acyclic graph (DAG) showing upstream sources and downstream dependencies for any given dataset.

Advanced lineage engines track lineage at the column level, not just the table level — essential for understanding exactly how a calculated metric like “adjusted gross revenue” is derived from raw transaction data.

Data Quality Profiling

Quality profiling components automatically measure and score data quality across multiple dimensions: completeness (are required fields populated?), accuracy (do values fall within expected ranges?), consistency (does the same customer ID mean the same thing across systems?), timeliness (was the data updated as recently as expected?), and uniqueness (are there unexpected duplicates?).

Quality scores are displayed prominently in search results so users can immediately see whether a dataset is trusted before using it.

Collaboration and Workflow Tools

Modern data catalogs are social platforms as much as technical tools. They include features for users to ask questions about datasets, flag data quality issues, review and approve metadata changes, and track data requests. Some catalogs integrate directly with Slack, Microsoft Teams, and Jira to embed data collaboration into existing team workflows.

Access Control and Governance Policies

Enterprise data catalogs integrate with identity management systems to display access permissions for each dataset and, in some cases, to enforce access policies directly. Users can see not just that a dataset exists but whether they can access it and who to contact to request access.

Governance-aware catalogs can automatically flag datasets containing sensitive data classifications (PII, PHI, financial data) and display the applicable governance policies, making compliance a built-in part of the data discovery experience rather than an afterthought.

Data Catalog vs. Data Dictionary vs. Data Inventory

These three terms are often used interchangeably but represent distinct concepts. Understanding the differences is important for data governance planning.

Data Dictionary

A data dictionary is a document or repository that defines the technical attributes of data elements: column names, data types, allowed values, constraints, and relationships between tables. Data dictionaries are typically narrow in scope (focused on a single database or application), maintained manually, and contain technical rather than business definitions.

A data dictionary answers: “What are the technical specifications of this database?” It is a technical reference document, not a discovery or governance tool.

Data Inventory

A data inventory is a list of the data assets an organization holds, typically including the type of data, its location, who owns it, and how long it’s retained. Data inventories are often compliance-driven — GDPR requires organizations to maintain a record of processing activities, which is essentially a data inventory.

A data inventory answers: “What data do we have and where is it?” It is broader than a data dictionary but shallower — it catalogs what exists without explaining what it means or how it’s used.

Data Catalog

A data catalog combines and extends both concepts. It maintains technical metadata like a dictionary, inventories all data assets like an inventory, and adds business context, lineage, quality scoring, collaboration, and search. A data catalog is a living, dynamic, searchable system — not a static document.

A data catalog answers: “What data do we have, where is it, what does it mean, how trustworthy is it, how does it flow through our systems, and who can help me use it?”

The key distinction: A data dictionary is a technical specification document. A data inventory is a compliance record. A data catalog is an active data knowledge platform that drives data discovery, trust, and governance across the enterprise.

Types of Data Catalogs

Not all data catalogs are alike. Different architectural approaches suit different organizational needs.

Enterprise Data Catalogs

Enterprise data catalogs are comprehensive, full-featured platforms designed for large organizations with complex, multi-cloud data environments. They support thousands of data assets across dozens of connected systems, provide robust governance workflows, and offer enterprise-grade security and access control. Collibra, Alation, and Informatica Axon are leading examples.

Cloud-Native Data Catalogs

Cloud-native catalogs are built specifically for cloud data platforms and tight integration with cloud-native data services. Microsoft Purview for Azure environments, AWS Glue Data Catalog for AWS, and Google Dataplex for GCP are examples. These tools offer deep native integration but may be less effective in multi-cloud or hybrid environments.

Open-Source Data Catalogs

Open-source options like Apache Atlas, Amundsen (developed by Lyft), DataHub (developed by LinkedIn), and OpenMetadata offer significant flexibility and no licensing costs, but require more technical investment to deploy and maintain. They are popular in data-engineering-heavy organizations with the technical resources to manage them.

Embedded Data Catalogs

Some modern data platforms embed catalog capabilities directly into the data warehouse or lakehouse layer. Snowflake, Databricks Unity Catalog, and dbt’s semantic layer all provide native metadata and discovery features. These embedded catalogs offer tight integration and zero additional licensing but may not match the depth of dedicated enterprise catalog tools.

Benefits of a Data Catalog

Organizations that successfully implement data catalogs consistently report measurable improvements across several dimensions.

Faster Data Discovery

The most immediate and universally cited benefit is dramatic reduction in the time data professionals spend finding data. Organizations report reductions from days or weeks of searching to minutes using catalog search. The Atlan-referenced retail case study in our AI and data governance guide showed catalog usage jumping from 12% to 61% after implementation, with IT data request tickets dropping 73%.

Improved Data Trust

When datasets carry quality scores, lineage information, and usage statistics (showing that a trusted dashboard uses this dataset), analysts feel confident using them. Data-driven decisions improve in quality because they’re based on vetted, understood data rather than whichever spreadsheet someone happened to have on hand.

Enhanced Regulatory Compliance

Regulators increasingly expect organizations to demonstrate not just that they comply with data privacy regulations, but that they can prove it. A data catalog creates the documentation trail — who owns each dataset, what governance policies apply, where sensitive data flows — that compliance audits require. GDPR Article 30 records of processing activities, HIPAA minimum necessary requirements, and CCPA consumer rights requests all benefit from the visibility a data catalog provides.

Reduced Redundant Data Collection

Without a catalog, teams frequently collect the same data independently because they don’t know it already exists elsewhere. A searchable catalog surfaces existing datasets before teams invest in building new pipelines, reducing engineering costs and the inconsistencies that arise from having multiple versions of the same data.

Faster Onboarding for New Data Professionals

New data engineers and analysts often spend their first weeks simply learning what data exists and what it means. A well-maintained data catalog dramatically shortens this ramp-up time by providing documented, searchable metadata — turning weeks of tribal knowledge acquisition into days of catalog exploration.

Enabling True Data Democratization

Business users without SQL skills can search for and understand data assets, read their definitions, see quality scores, and request access — all without engineering support. This democratization of data access is only possible when the catalog provides enough context for non-technical users to evaluate data independently.

Data Catalog Features to Look For

When evaluating data catalog tools, these are the capabilities that separate enterprise-grade solutions from basic metadata repositories.

Automated Connectivity and Crawling

The catalog should connect natively to the data sources in your environment — cloud data warehouses, relational databases, data lakes, BI tools, streaming platforms, and SaaS applications — and automatically harvest metadata without requiring manual entry. The breadth of connectors is critical: a catalog that can’t reach your systems is useless.

Active Metadata and Usage Intelligence

Beyond passive metadata storage, leading catalogs provide active metadata — real-time intelligence about how data is being used. Which users queried this table in the last 30 days? Which dashboards depend on this dataset? What queries are most commonly run against this column? Usage intelligence helps users identify popular, trusted datasets and helps governance teams prioritize their curation efforts.

Column-Level Data Lineage

Table-level lineage is a baseline requirement. Column-level lineage — showing exactly how each field in a downstream report is derived from specific columns in source tables — is the gold standard. Without column-level lineage, root cause analysis of data quality issues and impact analysis of schema changes remain painful and imprecise.

Machine Learning-Powered Classification

Modern catalogs use machine learning to automatically classify sensitive data (PII, PHI, financial), suggest business metadata based on patterns in technical metadata, identify similar or related datasets, and detect anomalies in data profiles. AI-assisted classification dramatically reduces the manual effort required to maintain a healthy catalog. See our deep dive on AI transforming data governance for more on how machine learning is changing catalog capabilities.

Business Glossary Integration

A first-class glossary that links business terms to physical data assets is essential for organizations serious about data governance. The glossary should support approval workflows (so term definitions are reviewed and certified), term hierarchies (parent-child relationships between concepts), and automatic linking (the catalog suggests which assets match a given term based on naming patterns).

Collaboration and Social Features

Data catalogs are only as good as the metadata they contain, and that metadata quality depends on human contributions. Look for features that make contribution easy and rewarding: inline commenting on datasets, crowdsourced ratings, question-and-answer threads attached to assets, and gamification elements that recognize active contributors. Catalogs that feel collaborative drive higher adoption rates than those that feel like bureaucratic documentation tools.

Governance Workflow Integration

If you’re implementing a data catalog as part of a broader data governance program, the catalog should support governance workflows: data access request routing, data quality issue tracking, policy acknowledgment, and stewardship task assignment. Leading enterprise platforms like Collibra provide complete governance workflow engines integrated directly with the catalog.

API and Integration Ecosystem

Your data catalog should integrate with your existing data stack rather than replacing it. Look for robust REST APIs that allow other tools to read and write catalog metadata, native integrations with popular data engineering tools (dbt, Airflow, Databricks, Spark), and marketplace ecosystems that extend catalog functionality.

Top Data Catalog Tools in 2026

The data catalog market has matured significantly, with clear leaders in the enterprise segment and strong challengers in the cloud-native and open-source spaces.

Collibra

Collibra is widely regarded as the market leader for enterprise data governance and cataloging. It provides a comprehensive platform combining data catalog, business glossary, data lineage, governance workflows, and policy management. Collibra’s strength is its governance depth — no other platform matches its workflow engine and policy management capabilities. It integrates with virtually every enterprise data system and has a strong partner ecosystem.

Best for: Large enterprises with complex governance requirements, regulated industries (financial services, healthcare), organizations already invested in formal data governance programs.

Alation

Alation pioneered the “active metadata” approach, using behavioral intelligence — analyzing how users actually search for and use data — to surface the most relevant and trusted datasets. Its collaborative features are among the strongest in the market, and its natural language search capabilities make it accessible to non-technical users. Alation has strong adoption in analytics-heavy organizations.

Best for: Analytics-driven organizations prioritizing data discovery and self-service, organizations where cultural adoption is as important as governance depth.

Microsoft Purview

Microsoft Purview (formerly Azure Purview) has rapidly matured into a serious enterprise catalog option, particularly for organizations heavily invested in the Microsoft Azure ecosystem. Native integration with Azure Data Factory, Azure Synapse, Microsoft Fabric, and Office 365 is a significant advantage. Purview also provides strong data loss prevention (DLP) and compliance features that align well with Microsoft’s security and compliance portfolio.

Best for: Microsoft-centric organizations, Azure-first cloud strategies, organizations that want catalog and data compliance in a single Microsoft-licensed platform.

Informatica Intelligent Data Management Cloud (IDMC)

Informatica’s catalog offering is part of its broader IDMC platform, providing deep integration with Informatica’s data quality, MDM, and integration tools. The CLAIRE AI engine provides sophisticated automated classification and metadata suggestion. For organizations already using Informatica for ETL and data quality, the catalog is a natural extension.

Best for: Organizations with existing Informatica investments, complex MDM and data quality requirements alongside cataloging needs.

Atlan

Atlan has emerged as a strong challenger, particularly popular among modern data teams using dbt, Airflow, and cloud-native data stacks. Its Slack-first collaboration model and deep integration with the modern data stack resonate with data engineering teams. Atlan’s active metadata capabilities and developer-friendly APIs make it a natural fit for organizations with strong data engineering practices.

Best for: Modern data teams, dbt-heavy environments, organizations prioritizing collaboration and developer experience.

DataHub (Open Source)

LinkedIn’s open-source DataHub has become one of the most popular catalog options for organizations with strong technical teams. It provides solid automated metadata ingestion, lineage, and search capabilities without licensing costs. The active open-source community contributes connectors and features regularly.

Best for: Organizations with strong data engineering teams, cost-sensitive environments, organizations that want to build custom catalog integrations.

AWS Glue Data Catalog

For AWS-native organizations, the Glue Data Catalog provides integrated metadata management for data in S3, Redshift, and other AWS services. It’s less feature-rich than dedicated enterprise catalogs but provides frictionless integration with the AWS ecosystem and zero additional licensing costs.

Best for: AWS-first organizations, teams already using Glue for ETL, organizations wanting minimal additional tooling.

How a Data Catalog Supports Data Governance

A data catalog and a data governance program are deeply complementary. The catalog is often described as the “operational system” for data governance — it’s where governance policies are documented, where data stewardship happens in practice, and where governance outcomes are measured.

Enabling Data Stewardship