Your pipeline passed every test. The data landed in the warehouse on schedule. The dashboard refreshed at 6:00 AM just like always. And then a downstream data scientist opened a ticket because the customer_id field they’ve been joining on for nine months silently changed from an integer to a string — and nobody told them.

This is not a data quality problem. It is a contract problem.

Data contracts are formal, enforceable agreements between the teams that produce data and the teams that consume it. They define what the data will look like, what guarantees the producer makes about it, and what happens when those guarantees are violated. Done well, they are the difference between a data platform your organization trusts and one your organization tolerates.

This guide covers everything a practicing data governance professional needs to know: what data contracts are, why they matter beyond the hype, how to design and implement them, how they connect to your broader governance program, and what tools the ecosystem currently offers. I’ll draw on implementation experience across the Department of Veterans Affairs, financial services governance work, and master data environments at Nestle Purina to ground the theory in real-world friction.

- What Are Data Contracts?

- Why Data Contracts Matter

- Core Components of a Data Contract

- Implementing Data Contracts: A Practical Framework

- Data Contracts and the Broader Governance Ecosystem

- Data Contracts in Data Mesh Environments

- Data Contracts and Regulatory Compliance

- Building Organizational Buy-In for Data Contracts

- Common Pitfalls in Data Contract Programs

- Pitfall 1: Treating the Contract as a Documentation Exercise

- Pitfall 2: Writing Contracts After the Fact Without Producer Buy-In

- Pitfall 3: Over-Engineering the Contract on Day One

- Pitfall 4: No Process for Contract Violations

- Pitfall 5: Schema Contracts Without Semantic Contracts

- Pitfall 6: No Consumer Registration

- Pitfall 7: Treating Contracts as Immutable

- Tools and Technology for Data Contracts

- Data Contract Maturity Levels

- FAQ

- What is the difference between a data contract and a data SLA?

- Do I need a data contract for every dataset?

- Can data contracts work with real-time streaming data?

- How do you handle schema evolution without breaking consumers?

- What is the relationship between data contracts and data mesh?

- How do you get data engineering teams to adopt contracts?

- How do you measure the success of a data contract program?

- How do data contracts relate to GDPR or other privacy regulations?

- What YAML or open standard should I use for writing data contracts?

- How long does it take to implement a data contract program?

- Can data contracts help with AI/ML model reliability?

- Conclusion

What Are Data Contracts?

A data contract is a versioned, machine-readable agreement between a data producer and one or more data consumers that specifies:

- Schema: field names, data types, nullability, and acceptable value ranges

- Semantics: what the fields actually mean — the business definitions, not just the technical names

- Service Level Agreements (SLAs): freshness guarantees, latency, availability expectations

- Data Quality Rules: completeness thresholds, referential integrity, format constraints

- Ownership: who is accountable for producing the data, who is accountable for consuming it

- Change Management: how breaking changes are communicated, how versioning works, what constitutes a violation

The concept was popularized in data engineering circles through work by Ethan Aaron, Chad Sanderson, and others who recognized that the absence of explicit producer-consumer agreements was the root cause of a massive category of data reliability failures. It has since been formalized into open standards, tooling, and governance frameworks.

The analogy to software APIs is intentional and useful. When a software team publishes an API, consumers know exactly what they’re getting: the endpoint, the response shape, the authentication requirements, the rate limits. A breaking change goes through a deprecation process. Versioning is explicit. The contract model applies this discipline to data pipelines — assets that have historically been treated as write-once, document-never, change-whenever.

What a Data Contract Is Not

Before going further, it’s worth clearing up some misunderstandings that circulate in the governance and engineering communities:

A data contract is not a data quality dashboard. Dashboards report on what happened. A contract specifies what should happen and triggers action when it doesn’t.

A data contract is not just a data dictionary. A data dictionary is documentation. A contract is an operational agreement. The dictionary tells you what a field is. The contract tells you what the producer guarantees about that field and what consequence follows a violation.

A data contract is not exclusively a technical artifact. A purely technical schema document without business ownership, consumption context, and escalation procedures is a schema, not a contract.

A data contract is not a data governance policy. Policy sets the enterprise standard. Contracts operationalize that standard at the asset level. They work together.

Why Data Contracts Matter

The Hidden Cost of the Implicit Contract

Every data pipeline already has a contract. It is just implicit, undocumented, and invisible until it breaks.

When a data engineer builds a pipeline that feeds an analytics report, the consumer of that report has formed a set of expectations: the data will arrive by a certain time, the schema won’t change without notice, the customer count will make sense. None of this is written down anywhere. When the pipeline changes, the consumer finds out through a broken dashboard or a data scientist opening a ticket.

The cost of this implicit contract system is enormous. Studies cited in the Gartner Data Quality Market research have consistently put poor data quality costs in the billions for large enterprises. But the mechanism of that cost is rarely examined. A significant portion of it is not garbage data — it is change-without-communication. Data that was good yesterday is broken today, not because the underlying business reality changed, but because a producer changed something without telling consumers.

Regulatory Pressure is Making Contracts Mandatory in Practice

In financial services, regulatory frameworks have been pushing toward data contracts for years without using that name.

BCBS 239 — the Basel Committee’s principles for effective risk data aggregation and risk reporting — requires financial institutions to demonstrate end-to-end data lineage, accuracy controls, and clearly accountable data ownership for risk data. In practice, what regulators are asking for when they walk into an exam is something very close to a data contract: show me where this data comes from, show me who owns it, show me how you validated it, show me what happens when something goes wrong.

Financial services governance work at organizations like Wells Fargo involves precisely this kind of documentation and control regime. When regulators want to see data lineage for a capital model, an undocumented pipeline with implicit producer-consumer agreements is a material control weakness. Formal data contracts — even informal equivalents — are increasingly the standard that examination teams expect.

Similarly, GDPR and CCPA compliance depends on knowing exactly where personal data flows, who controls it, and what transformations it passes through. A data contract that specifies the presence of PII fields, the applicable retention policy, and the downstream consumers is not a luxury — it is part of a defensible privacy program.

The Data Mesh Driver

The data mesh architectural pattern, popularized by Zhamak Dehghani, has made data contracts foundational rather than optional. In a data mesh, domain teams own and publish their own data products. The platform team provides infrastructure. There is no central team that can enforce consistency by fiat.

In this model, the only way the mesh holds together is if domain data products publish explicit contracts. A consumer team in a data mesh cannot afford to reverse-engineer the schema of another domain’s data product every time they want to use it. The contract is the interface. Without it, the mesh collapses into a more fragmented version of the centralized chaos it was meant to replace.

Trust as Infrastructure

The underlying business case for data contracts, stripped of the technical framing, is this: organizations make decisions on data. The quality of those decisions depends on whether the decision-maker can trust the data they’re looking at. Trust in data is not a feeling — it is a trackable, governable property that is built through explicit commitments, monitored through validation, and maintained through accountability.

Data contracts are the mechanism that converts implicit trust (hope that nothing changed) into structured trust (knowledge that what was agreed is what was delivered).

Core Components of a Data Contract

A production-ready data contract has seven components. The depth of each component varies by the criticality of the data asset and the maturity of your organization’s governance program. A contract for a Tier 1 regulatory dataset will look different from a contract for an internal operational report — but all seven components should be present in some form.

1. Schema Definition

Schema is the foundation. It specifies the fields that will be present in the data, their types, their nullability, and any constraints on values.

A minimal schema definition covers:

fields:

- name: customer_id

type: string

nullable: false

description: "Unique identifier for a customer. UUID format."

- name: account_balance

type: decimal(18,2)

nullable: true

description: "Current account balance in USD. Null if account is pending activation."

- name: last_updated

type: timestamp

nullable: false

description: "UTC timestamp of the most recent record update."

A mature schema definition also specifies:

- Format constraints: patterns (e.g., ISO 8601 dates, E.164 phone numbers), enumerations for categorical fields

- Range constraints: min/max values for numeric fields

- Primary keys and unique constraints

- Foreign key relationships to other data assets (important for MDM contexts)

Schema is also where you specify versioning. Semantic versioning is the most common approach: major version increments for breaking changes, minor version increments for backward-compatible additions, patch increments for metadata corrections. Consumers should declare which version they depend on.

2. Semantic Definitions

Schema tells you the shape of the data. Semantics tell you what it means.

This is one of the most underinvested components of most early-stage data contract programs. Organizations spend extensive effort defining data types and almost no effort defining business meaning. The result is that two teams can look at a field called revenue and mean entirely different things — gross revenue, net revenue, recognized revenue, pipeline revenue — without any mechanism to detect the ambiguity.

Semantic definitions connect the contract to the business glossary. In a Collibra environment, this means linking contract fields to approved business term definitions. In a Profisee MDM context, it means connecting the contract to the canonical domain model that defines what a “product” or “location” or “customer” officially means.

Semantic definitions should specify:

- The approved business definition (pulled from or linked to the governed business glossary)

- The owner business unit who defines what this concept means

- Known ambiguities: other uses of the same term in the organization, and why those are different

- Calculation rules for derived fields (if applicable)

3. Service Level Agreements

SLAs define the operational commitments the producer makes to consumers. They are measurable and monitorable.

Key SLA dimensions include:

Freshness: When will the data be available? “Available by 6:00 AM UTC daily” is a freshness SLA. This is separate from the pipeline schedule — it is the commitment to the consumer, with buffer for acceptable latency.

Completeness: What percentage of expected records must be present? “Greater than or equal to 98% of records from the source system will be present in this dataset.” This protects consumers from silent truncations.

Latency: For near-real-time datasets, what is the maximum acceptable lag between an event occurring and the data being available?

Availability: What is the expected uptime for this dataset? What is the SLA for recovery when the dataset is unavailable?

SLAs should be tied to alerting. When an SLA is breached, there should be an automated notification to both the producer and affected consumers. An SLA without monitoring is a wish, not a commitment.

4. Data Quality Rules

Quality rules are validation conditions that the data must pass for the contract to be considered fulfilled. These go beyond schema — they capture business logic and domain expectations.

Examples of data quality rules:

order_datemust not be in the futurecustomer_idmust match a valid record in the customer master- No more than 5% of

email_addressvalues may be null in any daily delivery transaction_amountmust be non-negative for record types labeledCREDIT

Quality rules are the bridge between the contract and your data quality tooling. In a Great Expectations implementation, each quality rule maps to an expectation suite that runs as part of the pipeline validation step. In a Soda Core implementation, they map to checks in a YAML configuration. The contract specifies what must be true; the quality tool enforces it.

Quality rules should specify:

- The rule condition (the assertion)

- The threshold for compliance (absolute pass/fail, or percentage tolerance)

- The severity (is a violation a blocking error that halts downstream processing, or a warning that triggers notification?)

- The escalation path when the rule fails

5. Ownership and Accountability

A data contract without clear ownership is not a contract — it is a document. Ownership specifies who is accountable for what.

Producer ownership: The team or individual responsible for generating the data, maintaining the pipeline, and honoring the contract commitments. This is usually a data engineering team or a domain team in a data mesh.

Steward ownership: The data steward who is accountable for the semantic accuracy of the data, the business definitions, and the governance compliance of the contract itself. In the VA’s governance model, data stewardship roles are formalized in policy — a data steward is specifically accountable for the accuracy and governed status of the data in their domain.

Consumer registration: Consumers should be registered against the contract. This serves two purposes: it enables impact analysis when the contract changes (the producer knows who will be affected), and it creates accountability on the consumption side (consumers who depend on a data asset should be visible).

Escalation paths: Who gets called when the contract is violated? Who approves a breaking change? Who adjudicates a dispute between a producer and a consumer about whether a change was breaking?

6. Change Management Protocol

How changes to the contract are managed is as important as what the contract contains.

The change management protocol should specify:

Classification of changes:

- Non-breaking: Adding new optional fields, adding new quality rules that don’t affect existing consumers, updating documentation or semantic descriptions

- Breaking: Removing fields, changing field types, changing field names, tightening nullability constraints, changing primary keys

Deprecation timeline: When a producer needs to make a breaking change, what is the required notice period? Ninety days is a common standard for Tier 1 data assets.

Version compatibility window: How long will the previous version of a contract remain supported after a new major version is released?

Change approval process: For breaking changes, who must approve? Typically this requires sign-off from affected downstream consumers and the data steward. In governance-mature organizations, breaking changes go through a formal change control process.

The change management protocol is where governance meets engineering discipline. In the VA’s governance implementation, major changes to governed data assets required documented impact assessments and sign-off from domain stewards before deployment. This process — formalized for regulatory compliance and audit purposes — maps directly to what a contract-based change management protocol looks like in practice.

7. Contract Metadata

Finally, every contract needs its own metadata: the administrative envelope that makes the contract findable, manageable, and auditable.

- Contract ID: Unique identifier

- Version: Current version (semantic versioning)

- Status: Draft, Active, Deprecated, Retired

- Effective Date: When this version became active

- Review Date: Scheduled review of the contract (annually at minimum; quarterly for Tier 1 assets)

- Data Classification: Public, Internal, Confidential, Restricted/PII

- Tags: Relevant domain, subject area, compliance requirement tags

Implementing Data Contracts: A Practical Framework

Theory is easy. Implementation is where programs stall. Based on enterprise implementations across government and regulated industries, the path that works is not big-bang — it is a phased approach that starts with your highest-risk assets and builds organizational muscle before scaling.

Phase 1: Identify and Tier Your Data Assets

Before writing a single contract, you need to know what you’re contracting around. This means conducting a data asset inventory and applying a tiering framework.

Tier 1 — Mission-Critical: Assets that feed regulatory reporting, executive dashboards, or critical operational systems. A contract failure on a Tier 1 asset has material business or compliance impact. These get the full contract treatment immediately.

Tier 2 — Business-Important: Assets that support important business processes but where a brief failure has limited downstream impact. These get contracts in Phase 2.

Tier 3 — Operational/Informational: Internal datasets, exploratory datasets, preliminary data work. Lightweight contracts or documented assumptions are sufficient.

In the VA’s data governance program, this kind of asset tiering was built into the data governance maturity framework. Before the organization could implement controls, it had to know what it was controlling — and which assets deserved the most governance investment. The same logic applies to a data contract program.

Phase 2: Start with the Most Painful Pipelines

Within your Tier 1 assets, start with the pipelines that have the most incident history. The pipelines that break most frequently, that generate the most downstream tickets, that have the most producer-consumer friction — these are your first targets.

This approach has two advantages. First, it produces immediate, visible business value: fixing your most painful pipelines with contracts creates advocates. Second, it produces useful patterns. The specific quality rules, SLAs, and change management challenges you encounter in your first contracts will inform the templates you use for every subsequent contract.

Phase 3: Build the Contract Template

Don’t write contracts from scratch for every asset. Build a standard template that covers all seven components, with guidance on how to fill in each section for different asset types.

At minimum, your template should include:

# Data Contract Template v1.0

# The Data Governor — https://thedatagovernor.info

contract_id: ""

version: "1.0.0"

status: "Draft"

effective_date: ""

review_date: ""

dataset:

name: ""

description: ""

domain: ""

classification: "" # Public | Internal | Confidential | Restricted

tags: []

ownership:

producer_team: ""

producer_contact: ""

data_steward: ""

consumers: []

schema:

fields: []

quality_rules: []

slas:

freshness: ""

completeness_threshold: ""

availability_target: ""

change_management:

deprecation_notice_days: 90

breaking_change_approvers: []

semantics:

business_glossary_references: []

The contract template should be version-controlled in the same repository as the data pipelines it governs. If you change the schema of the pipeline, you update the contract at the same time. The two are coupled.

Phase 4: Automate Validation

A data contract that isn’t validated automatically is documentation, not infrastructure. The goal is to have contract validation run as part of every pipeline execution — either as a pre-publish check or as a post-publish validation that triggers immediate downstream notification on failure.

The mechanics of this depend on your stack. In a dbt-based environment, dbt’s native contracts feature (available since dbt 1.5) enforces schema and column-level constraints at model build time. A contract violation fails the build before the data is published. In a Python-based pipeline environment, Great Expectations or Soda Core can run expectation suites as validation steps integrated into your orchestrator (Airflow, Prefect, Dagster).

The key design principle: consumers should never be the first to discover a contract violation. Validation should run on the producer side, and the producer should be notified before or at the moment data is delivered to consumers.

Phase 5: Publish to the Data Catalog

Data contracts only produce value if consumers can find and use them. Every contract should be published to the data catalog so that a consumer evaluating a data asset can see exactly what the producer has committed to.

In a Collibra implementation, data contracts can be represented as a custom governance asset type — with structured attributes for each contract component, linked to the relevant dataset asset, and surfaced in the asset detail view alongside lineage, ownership, and quality metrics. In a Microsoft Purview environment, contracts can be represented as governance policies attached to the data asset.

The critical integration point is lineage. Your data catalog should allow a consumer to trace from a contract through the assets it governs, through the downstream assets that depend on those assets, and through to the reports or applications that ultimately consume the data. When a contract is violated or changed, the impact scope should be immediately visible.

Phase 6: Establish the Governance Process

The last phase is not technical — it is organizational. Data contracts need a governance process to sustain them.

This means:

- A contract review cadence: Contracts are living documents. Tier 1 contracts should be formally reviewed at least quarterly to confirm SLAs are still accurate, quality rules still reflect business expectations, and ownership is current.

- A contract board or change control committee: For breaking changes, there needs to be a body with authority to approve changes and a clear process for consumers to weigh in.

- Contract compliance metrics: Track what percentage of your data pipeline runs are producing contract-compliant data. Track SLA breach rates. Track the mean time to resolution for contract violations. Make these metrics visible to leadership.

- Accountability in performance frameworks: If producers are accountable for contract compliance and consumers are accountable for registering their dependencies, that accountability needs to be reflected in team performance discussions, not just governance documents.

Data Contracts and the Broader Governance Ecosystem

Data contracts do not exist in isolation. Their value is multiplied when they are integrated with the full governance ecosystem.

Integration with the Business Glossary

Every semantic definition in a data contract should link to an approved entry in the business glossary. This is not optional if you want consistent meaning across contracts. When the business definition of “active customer” changes, every contract that references that term should be reviewed for impact.

In a Collibra environment, this integration is native: business terms are governance assets that can be related to data attributes, and data attributes are what contracts govern. The link between contract semantics and business glossary entries is a governed relationship in the catalog.

Integration with Data Lineage

Data contracts are node-level agreements. Data lineage is the network that connects those nodes. Together, they provide end-to-end visibility from source to consumption.

When a contract is violated, lineage tells you the blast radius: which downstream datasets, reports, and decisions are affected. When you want to change a contract, lineage tells you who the consumers are. These are not independent capabilities — they are interdependent.

Integration with Data Quality Programs

Data contracts specify what quality the data should have. Data quality programs measure whether it does. The integration point is the quality rule: what is specified in the contract should be measurable in the quality tool.

If your data quality program is monitoring metrics that aren’t tied to any contract, you have orphaned quality checks. If your contracts specify quality rules that aren’t being monitored, you have phantom commitments. Alignment between contracts and quality monitoring is a governance maturity indicator.

In MDM environments — specifically in the Profisee implementation at Nestle Purina — the data quality rules in the MDM workflow were essentially proto-contracts: they specified what valid product master data looked like, what was required before a record could be promoted to golden record status, and what happened when records failed validation. Formalizing these as data contracts was a natural extension of the existing MDM governance model.

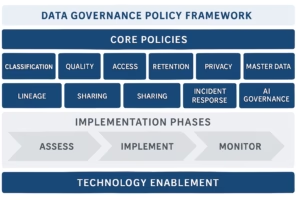

Integration with Data Governance Policies

Policy sets the enterprise standard. Contracts operationalize that standard.

If your data governance policy says all Tier 1 data assets must have documented ownership and quality SLAs, the data contract is the mechanism that makes that policy real and auditable. When an auditor asks whether your Tier 1 assets have documented quality commitments, you should be able to point to contracts.

The relationship between policy and contract is hierarchical: policy sets the requirement, the contract standard template operationalizes the requirement, and individual contracts are the evidence of compliance.

Data Contracts in Data Mesh Environments

The data mesh architectural pattern makes data contracts load-bearing structural elements, not optional add-ons.

In a federated data mesh, domain teams are the data producers. They own their domain’s data products end-to-end: ingestion, transformation, quality, governance, and publication. The central platform team provides infrastructure — the compute, storage, catalog, and governance tooling — but does not manage domain data.

In this model, a domain data product without a contract is a pipeline with no interface documentation and no operational commitments. A consumer team building on top of an undocumented data product has no protection from breaking changes, no visibility into quality guarantees, and no accountability mechanism when things go wrong.

Data contracts in a data mesh context serve four functions:

Discovery: Published contracts in the data catalog allow consumer teams to understand what data products are available and what they commit to, without needing to talk to the producer team directly.

Trust calibration: A contract with a strong SLA, comprehensive quality rules, and visible compliance metrics tells a consumer team that this data product is mature and reliable. A contract with minimal commitments signals that the product is in early development and should be used with caution.

Interoperability governance: In a large mesh, the governance team cannot review every pipeline. But they can set standards for what contracts must contain — tiering requirements, mandatory quality rule categories, required semantic links — and audit compliance at the contract level rather than the pipeline level.

Change impact management: In a densely connected mesh, a breaking change to a widely-used data product can cascade through dozens of downstream consumers. Contracts — with their registered consumer lists and formal deprecation processes — are the mechanism that prevents a breaking change from becoming an undiscovered crisis.

The practical challenge of data contracts in a data mesh is cultural and organizational: domain teams, who may have deep engineering skill but limited governance experience, need to be equipped and incentivized to write and maintain good contracts. This requires governance-as-a-service capabilities from the central platform team: templates, tooling, training, and automation that make contract authorship frictionless.

Data Contracts and Regulatory Compliance

Regulatory compliance is one of the clearest business justifications for a data contract program — and one of the most concrete ways to make the business case internally.

BCBS 239 and Risk Data Governance

The Basel Committee on Banking Supervision’s BCBS 239 standard — Principles for Effective Risk Data Aggregation and Risk Reporting — is the most directly relevant regulatory framework for data contracts in financial services. Published in 2013 and subject to ongoing supervisory assessment, BCBS 239 requires systemically important financial institutions to demonstrate:

- Data accuracy: Risk data must be accurate, reconciled, and validated

- Data aggregation: Banks must be able to aggregate risk data across the enterprise quickly and reliably

- Timeliness: Risk reports must be produced on schedule with documented data freshness

- Completeness: All material risk data must be captured with explicit coverage documentation

- Adaptability: The risk data architecture must be flexible enough to respond to regulatory requests for new aggregations

- Accountability: Clear ownership of risk data assets must be documented and maintained

Each of these principles maps directly to a component of a data contract. Accuracy and completeness map to quality rules. Timeliness maps to SLAs. Accountability maps to ownership. The documentation requirements map to schema and semantic definitions.

Financial services governance work makes this mapping concrete. When regulatory examiners walk into a supervisory review and ask for evidence of BCBS 239 compliance, they are asking to see, in practical terms, something that looks very much like a data contract ecosystem: documented pipelines with ownership, quality controls, SLA monitoring, and change management discipline. Organizations that have built formal data contract programs have a materially stronger response to this kind of examination than organizations relying on informal controls and tribal knowledge.

The connection is not theoretical. Regulatory penalties for BCBS 239 non-compliance — in the form of heightened scrutiny, capital add-ons, or supervisory requirements — create direct financial incentives for the kinds of data governance investments that data contracts represent.

GDPR, CCPA, and Privacy-by-Design

Privacy regulations create a different but equally compelling driver for data contracts.

Under GDPR, a data controller must be able to respond to data subject requests — access, rectification, erasure, portability — within defined timeframes. Fulfilling an erasure request requires knowing exactly which systems contain or derive data from the subject’s records. This is, at its core, a data lineage problem. And data contracts, because they register consumers explicitly and connect to lineage in the data catalog, are part of the solution.

A data contract that specifies the data classification (PII, sensitive PII, non-PII), the applicable retention period, the legal basis for processing, and the registered downstream consumers gives the privacy program a structured starting point for impact analysis when a data subject right is exercised.

CCPA creates similar requirements for California consumers, and the growing patchwork of state-level privacy laws in the US is pushing organizations to build these capabilities broadly rather than jurisdiction-specifically.

The practical integration: when authoring data contracts for datasets containing personal data, include a privacy section that specifies the data classification, the applicable regulation, the retention period, and any transfer restrictions. This metadata feeds the privacy program’s record of processing activities (ROPA) and supports automated enforcement of retention policies.

Sarbanes-Oxley (SOX) and Financial Reporting Controls

For publicly traded companies, SOX Section 302 and 404 require documented and tested internal controls over financial reporting. Data controls are increasingly part of SOX audits — particularly as more financial reporting data flows through data pipelines and analytics platforms.

A data contract for a financial reporting dataset provides auditable evidence of control: the schema is documented and validated, quality rules are automated and logged, ownership is assigned, and changes go through a controlled process. These are the kinds of documented controls that SOX auditors look for when assessing whether financial data is reliable.

Building Organizational Buy-In for Data Contracts

The technical implementation of data contracts is solvable. The harder work is building the organizational conditions that sustain a contract program.

Making the Business Case

The business case for data contracts rests on three arguments, and you’ll likely need all three depending on your audience.

The cost argument: Data incidents — broken dashboards, failed reports, incorrect analytics — cost organizations in analyst time, rework, and delayed decisions. If you can quantify the incident volume and estimated cost in your organization, the investment in preventing that category of failure through contracts becomes straightforward to justify. Even a rough estimate — fifty analyst hours per month wasted on contract-related incidents at a fully-loaded cost of $150/hour is $7,500/month or $90,000/year — makes the case for contract program investment.

The risk argument: For regulated industries, the compliance exposure from undocumented, uncontrolled data pipelines is a risk that leadership and legal teams understand. BCBS 239, SOX, GDPR — each creates a risk of regulatory action that data contracts help mitigate.

The scale argument: As data platforms grow — more data sources, more teams, more consumers, more pipelines — the implicit contract system breaks down faster. A small data team of five people can maintain shared context informally. A platform serving 200 data consumers across 15 domain teams cannot. Data contracts are how governance scales.

The Producer Incentive Problem

One of the more honest conversations in the data contracts space is about producer incentives. From a data producer’s perspective, writing a contract creates obligations: you now have documented SLAs you can be held to, quality rules that might catch your failures, and a change management process that adds steps to deploying updates. Why would a producer team voluntarily take on these constraints?

The answer is that the constraint is mutual. Without contracts, producers absorb significant invisible cost: consumer teams opening tickets when pipelines change, unclear expectations about what “good enough” means, and the cognitive overhead of managing undocumented downstream dependencies. With contracts, producers gain clarity — they know who their consumers are, what they expect, and what the approved process is for making changes. The discipline of a contract reduces surprises in both directions.

The key to producer adoption is making the value visible early. Start with a pilot where a producer team with high incident volume implements contracts for their most complained-about datasets. When incident volume drops and consumer satisfaction improves, that team becomes an internal advocate. The program spreads through demonstrated value, not mandate.

The Governance Team’s Role

The governance team’s role in a data contract program is not to write and own contracts — it is to create the conditions under which producers and consumers can write good contracts efficiently.

That means:

- Providing the standard: The contract template, the tiering framework, the change management protocol. These are governance deliverables that producers use.

- Providing the tooling: Integration of contract validation into the pipeline infrastructure, the data catalog as the publication platform.

- Training and onboarding: Domain teams that are new to contracts need guidance on how to fill out a contract accurately and what a good quality rule looks like.

- Auditing and reporting: Governance is responsible for measuring contract coverage, tracking violation rates, and surfacing compliance gaps to leadership.

- Adjudication: When a producer and consumer disagree about whether a change was breaking, or whether an SLA was met, governance provides the process and the authority to resolve the dispute.

The governance team that tries to own every contract becomes a bottleneck. The governance team that provides the framework and enables domain ownership is the one that scales.

Common Pitfalls in Data Contract Programs

Pitfall 1: Treating the Contract as a Documentation Exercise

The single most common failure mode is building a data contract program that produces well-formatted YAML files that nobody reads and no system enforces. If contracts are not validated automatically and not visible in the data catalog, they are documentation with extra steps.

The test for whether your contract program has operational value is simple: when a contract is violated, does something happen automatically? If the answer is “someone will eventually notice,” the contract program is decorative.

Pitfall 2: Writing Contracts After the Fact Without Producer Buy-In

Data contracts are sometimes introduced top-down as a governance initiative without the engineering teams who produce the data being part of the design. The result is contracts that don’t reflect how the pipelines actually work, owned by governance teams who can’t enforce them, and resented by engineering teams who see them as paperwork.

Contracts must be co-owned by producers and consumers. The governance team sets the standard and provides the framework. The producer team defines the actual SLAs and quality rules they can commit to. The consumer team registers their dependencies and flags requirements the contract doesn’t currently meet. Governance is the process; engineering teams are the parties to the agreement.

Pitfall 3: Over-Engineering the Contract on Day One

The perfect data contract standard — with comprehensive semantic links, formal SLA review committees, automated compliance scoring, and integrated data mesh discovery — takes time to build. Organizations that try to implement that full standard before writing a single production contract never write any production contracts.

Start with the minimum viable contract: schema, owner, one or two SLAs, two or three quality rules. Get that into production and validated. Then add components as the organization’s capability matures. A simple contract that is automated and enforced is worth more than a comprehensive contract that lives in a wiki.

Pitfall 4: No Process for Contract Violations

What happens when a contract is violated? If the answer is “we get an alert,” that is the beginning of an answer, not the end. There must be a defined response process: who gets notified, what the initial response should be, what constitutes resolution, and how root cause findings are documented.

Contract violation response is a governance process, not just a technical alert. Without it, violations accumulate without accountability, and the organization loses confidence that the contract program is functioning.

Pitfall 5: Schema Contracts Without Semantic Contracts

Many early data contract implementations focus exclusively on schema: field names and types. This is necessary but not sufficient. An organization can have perfectly typed data that means completely different things to different teams.

During a Collibra implementation at the VA, one of the persistent challenges was that field-level schema was reasonably well-documented, but semantic definitions — what specific fields actually meant in the context of specific systems — were inconsistent across domain systems. Two systems could both have a field called veteran_status with the same data type but with different valid values, different business rules about how they were populated, and different intended interpretations. A schema contract would not catch that. A semantic contract — tied to the governed business glossary — would.

Pitfall 6: No Consumer Registration

If producers don’t know who their consumers are, they can’t manage change impact. Consumer registration — a formal registry of which teams or applications depend on which data contracts — is underimplemented in most early programs.

Consumer registration doesn’t need to be perfect or complete on day one. But it should exist, and it should be part of how the data catalog surfaces data asset relationships. In Collibra, this can be modeled as a “subscribed to” relationship between a business process or application asset and the data contract asset.

Pitfall 7: Treating Contracts as Immutable

Some teams, having invested in writing a contract, treat it as permanent. Contracts should be reviewed regularly. Business rules change. Systems evolve. An SLA set in year one may not reflect the actual operational capability in year three — in either direction.

The contract review cadence (annually for Tier 2/3, quarterly for Tier 1) exists precisely to keep contracts accurate. A stale contract is worse than no contract in some ways — it creates false confidence in commitments that are no longer honored.

Tools and Technology for Data Contracts

dbt Contracts

Since dbt 1.5, dbt has supported native data contracts for models. A dbt contract specifies the expected column names and data types for a model’s output. If the model produces output that doesn’t match the contract, the build fails.

This is the simplest entry point for dbt users: you are already writing model YAML; adding a contract block is a small incremental step. The constraint is that dbt contracts are build-time enforcement only — they validate what the pipeline produces, not what arrives from source systems.

dbt contracts work best in combination with schema tests and data quality checks (dbt tests, or integration with a dedicated quality tool) to cover the full contract surface.

Great Expectations

Great Expectations is a Python-based data quality framework that allows you to define expectation suites — collections of assertions about a dataset — and run them as part of your pipeline. It produces data documentation (called “Data Docs”) that shows the results of expectation suite runs and their validation status.

In a contract context, Great Expectations is the validation engine. The data contract specifies the quality rules; Great Expectations implements and runs them. The integration can be done at the orchestrator level (Airflow operators, Dagster sensors) to ensure validation is a first-class step in the pipeline execution.

Soda Core

Soda Core takes a YAML-first approach to data quality checks, making it somewhat more accessible to analysts who are less comfortable with Python. Checks are written in SodaCL (Soda Checks Language), which is intuitive for practitioners with SQL backgrounds.

Soda also offers Soda Cloud, which adds monitoring, alerting, and a web UI for tracking quality check results over time. For organizations that want a managed quality monitoring layer that can be connected to contract-based rules, Soda Cloud is a strong option.

OpenDataContract Standard

The Open Data Contract Standard (ODCS), maintained by the AIDA User Group, is an open specification for structuring data contracts as YAML documents. It covers schema, quality, SLAs, ownership, pricing (for data marketplace contexts), and support information.

ODCS is valuable as a standard because it enables interoperability: tools that consume ODCS-formatted contracts can work across different data platforms. If your organization uses a mix of Snowflake, Azure Synapse, and BigQuery, a contract in ODCS format can be consumed by tooling across all three.

Collibra

Collibra does not market a “data contracts” feature by that name, but the platform provides all the capabilities needed to govern data contracts at enterprise scale: asset modeling (to represent contracts as governed assets), workflow management (to manage the contract approval and change process), business glossary integration (for semantic definitions), data lineage integration (for impact analysis), and access control (to manage who can author and approve contracts).

In a mature Collibra governance implementation, data contracts can be fully modeled in the governance catalog, with automated workflows for contract review cycles, change approval, and violation tracking. The implementation at the VA used Collibra’s workflow and policy management capabilities for exactly this kind of structured governance process around data assets.

Profisee and MDM Contract Governance

In MDM environments, data contracts govern the conditions under which records are promoted to golden record status and published to consuming systems. Profisee’s workflow capabilities support the definition of these conditions as governance rules — which are, in practical terms, a form of data contract.

The specific MDM contract considerations are: what must be true for a record to be considered “governed” (completeness of required attributes, referential integrity with related domains, survivorship rules), what downstream systems are the consumers of golden records, and what SLAs govern when golden records are published.

In the Nestle Purina MDM program, the quality and completeness rules governing product master records were the de facto data contract between the MDM system and the downstream ERP and analytics consumers. Formalizing those rules as explicit contracts — with versioning, consumer registration, and change management — was a natural step toward a more mature data governance posture.

Microsoft Purview

Microsoft Purview (formerly Azure Purview) provides data governance capabilities within the Azure ecosystem. While Purview’s native contract functionality is less mature than purpose-built tools, it integrates deeply with Azure-native data services and provides the catalog, lineage, and classification foundations on which a contract program can be built.

For Azure-centric organizations, the recommended approach is to store contracts as structured metadata in Purview’s custom attribute framework, use Purview lineage to surface contract coverage and impact, and integrate with Azure Data Factory or Azure Synapse pipelines for automated validation.

Data Contract Maturity Levels

Like data governance broadly, data contract programs mature over time. Understanding where your organization is on the maturity curve helps set realistic expectations and prioritize investments.

Level 1: Undocumented (Pre-Contract)

No explicit contracts exist. Producer-consumer relationships are implicit, based on tribal knowledge. Pipeline changes are communicated ad hoc or not at all. Data issues are discovered by consumers. Schema changes break pipelines with no warning.

Most organizations start here. Recognition that implicit contracts exist and that they are failing is the trigger for a contract program.

Level 2: Documented (Informal Contracts)

Contracts exist as documentation — READMEs, wikis, shared spreadsheets. Schema is documented. Some quality expectations are noted. Ownership is specified. But contracts are not version-controlled, not enforced automatically, and not integrated with the data catalog.

This is better than nothing, but the documentation drifts from reality. Without automation, documented contracts become stale.

Level 3: Enforced (Automated Validation)

Contracts are machine-readable and enforced as part of pipeline execution. Schema contracts are validated at build or publish time. Quality rules run automatically. SLA breaches trigger alerts. Breaking changes require approval. Consumer registration exists.

This is the functional threshold. Organizations at Level 3 have operational data contracts that provide real reliability guarantees.

Level 4: Governed (Catalog-Integrated)

Contracts are published in the data catalog. Consumers can discover contracts for any Tier 1 or Tier 2 data asset. Lineage is connected to contracts for impact analysis. Contract compliance is a tracked metric, visible to governance and leadership. Contract reviews are scheduled and tracked.

Level 5: Optimized (Continuous Improvement)

Contract compliance metrics drive continuous improvement. SLA targets are adjusted based on observed performance and consumer needs. Contract patterns are shared across domains. Automated contract generation (from schema inference plus steward review) reduces the authoring burden. The contract program is self-sustaining and continuously improving.

FAQ

What is the difference between a data contract and a data SLA?

An SLA (Service Level Agreement) is one component of a data contract. A data SLA specifies operational commitments — freshness, availability, latency. A data contract is a broader agreement that includes schema, semantic definitions, quality rules, ownership, and change management protocols in addition to SLAs. Think of the SLA as the delivery commitment within the larger contract.

Do I need a data contract for every dataset?

No. Data contracts are most valuable for data assets that cross team boundaries — where one team produces data that other teams depend on. Internal datasets within a single team, exploratory datasets, and early-stage data work typically don’t need formal contracts. Focus your contract program on your Tier 1 and Tier 2 data assets first, particularly those with the most downstream consumers and the highest business or regulatory stakes.

Can data contracts work with real-time streaming data?

Yes, but they require adaptation. For streaming data, schema contracts apply to the event or message structure. Freshness SLAs are replaced with latency SLAs. Quality rules apply to event-level validation. The key difference is that streaming data is validated in-stream (using tools like Apache Flink with quality checks, or AWS Glue Schema Registry for schema enforcement) rather than at batch delivery time.

How do you handle schema evolution without breaking consumers?

The key is distinguishing breaking from non-breaking changes and managing each through the appropriate process. Adding a new optional field is non-breaking — existing consumers can ignore it. Removing a field, changing a type, or making an optional field required are breaking changes. Breaking changes should go through a formal deprecation process: announce the change with a defined deprecation timeline (90 days is typical for Tier 1 assets), maintain the old version during the deprecation window, and require consumer confirmation before retiring the old version.

What is the relationship between data contracts and data mesh?

Data contracts are foundational to data mesh architecture. In a data mesh, domain teams publish data products independently. Without data contracts, consumers have no reliable way to understand what a data product commits to, and producers have no mechanism for managing change impact across the mesh. Data contracts are the interface layer of data products — they define what the product guarantees without exposing the implementation. A data mesh without contracts is a highly decentralized data swamp.

How do you get data engineering teams to adopt contracts?

The most effective approach is not mandates — it is making contracts valuable to the producers, not just consumers. Producers benefit from contracts because: registered consumers mean they know who will be impacted by a change (instead of finding out through angry tickets), automated validation catches issues before they reach consumers, and clear quality rules remove ambiguity about what “good enough” means. Start with the engineering teams that already experience the most friction from undocumented producer-consumer relationships — they have the most to gain.

How do you measure the success of a data contract program?

Track these metrics: percentage of Tier 1 data assets with active contracts; contract SLA breach rate (target: trending down); mean time to detect (MTTD) contract violations; mean time to resolve (MTTR) contract violations; number of data incident tickets attributed to contract violations (target: trending down); consumer satisfaction with data reliability (qualitative, through stakeholder surveys or governance reviews). The leading indicators are coverage and automation. The lagging indicators are incident reduction and trust improvement.

How do data contracts relate to GDPR or other privacy regulations?

Data contracts support privacy compliance in two ways. First, they provide the documented data lineage that privacy programs need: when a data subject exercises their right to erasure, the contract network tells you exactly which systems contain or derive data from the individual’s record. Second, contracts can include data classification and sensitivity metadata that feed automated access control and retention enforcement. A contract that specifies that a dataset contains PII, the applicable retention period, and the downstream consuming systems becomes part of the documentation basis for a privacy impact assessment.

What YAML or open standard should I use for writing data contracts?

The Open Data Contract Standard (ODCS) is the most widely adopted open specification. It is comprehensive, actively maintained, and supported by growing tooling. For organizations heavily invested in dbt, the native dbt contract format integrates directly with the build process and is the lowest-friction starting point. The practical answer for most organizations is to start with whatever format integrates most naturally with your existing data tooling, then migrate to ODCS as your program matures and you need cross-platform interoperability.

How long does it take to implement a data contract program?

The minimum viable program — contracts for your five most critical data assets, with automated schema validation and basic SLA monitoring — can be operational in eight to twelve weeks for a focused team. A full enterprise program, covering Tier 1 and Tier 2 assets with catalog integration, formal change management, and governance metrics, typically takes twelve to eighteen months of sustained effort. The timeline is more dependent on organizational change management than technical implementation.

Can data contracts help with AI/ML model reliability?

Absolutely. AI/ML models are consumers of data. When the data they were trained on changes — in schema, distribution, or quality — model performance degrades. A data contract between the feature pipeline and the model training system provides the same protections a contract gives any consumer: schema stability, quality guarantees, and change notification. Model observability tools like Arize, Evidently, or WhyLogs can be integrated with contract validation to detect when data drift violates the implicit or explicit contract the model was trained under.

Conclusion

Data contracts are not a trend. They are a maturity marker: the point at which a data platform transitions from “infrastructure that exists” to “infrastructure that can be trusted.”

The organizations that get the most value from data contracts are not the ones with the most sophisticated YAML schemas or the most elaborate catalog integrations. They are the organizations where producers and consumers have a shared understanding of their mutual obligations, where violations are caught automatically before they reach consumers, and where changing data assets is a managed process rather than a surprise.

If you are starting from zero, start small: identify your most painful pipelines, write minimal contracts for them, automate schema validation, and register the consumers. If you are scaling an existing program, focus on the organizational capabilities — review cadences, change management processes, compliance metrics — that turn a technical implementation into a sustainable governance practice.

The shift from implicit to explicit contracts is a shift from hoping the data will be there and correct to knowing it will be. In a data-driven organization, that shift is the foundation of everything.