Claude AI pricing

If you’re evaluating Claude for your data team, your development workflow, or your enterprise AI strategy, the pricing landscape can feel unnecessarily complex. Anthropic offers a Free tier, a Pro plan, two Max tiers, Team and Enterprise subscriptions, and a pay-per-token API — each with different model access, usage limits, and cost optimization levers. And as of April 2026, the Enterprise pricing model just changed significantly.

This guide breaks down every Claude pricing option available in 2026, explains exactly what you get at each tier, compares costs against competitors like ChatGPT and Gemini, and provides practical guidance on which plan makes sense for different use cases. Whether you’re a solo practitioner, a team lead evaluating seats, or an enterprise architect planning an AI deployment, this is the pricing reference you need.

In my work as a data governance engineer, I’ve evaluated AI tooling costs across multiple enterprise environments — from financial services to government to manufacturing. The pricing decisions you make here directly impact your AI adoption budget, your team’s productivity, and ultimately whether your organization gets real value from these models or bleeds money on underutilized subscriptions.

- How Claude Pricing Has Evolved

- Understanding Claude's Model Lineup

- Individual Plans: Free, Pro, and Max

- Team Plans

- Enterprise Plans

- API Pricing: Pay-Per-Token

- Cost Optimization: Cutting Your API Bill by Up to 95%

- Comparing Claude to Competitors

- Which Plan Should You Choose?

- Hidden Costs and Gotchas

- Building a Cost Model: Forecasting Your Claude Spend

- Claude Pricing for Data Teams: A Practitioner's Perspective

- Frequently Asked Questions

- How many messages can I send on the Free plan?

- Is Claude Code included with Pro?

- Can I use Opus on the Free plan?

- How does Claude's 1 million token context window affect pricing?

- What's the cheapest way to use Claude for high-volume workloads?

- How does the April 2026 Enterprise pricing change affect existing customers?

- Is Claude Cowork available on all paid plans?

- How do I choose between the API and a subscription plan?

- Can I mix Standard and Premium seats on the Team plan?

- Does Claude display ads?

- What happens if I exceed my usage limits?

- Are there any student or nonprofit discounts?

- How does prompt caching work in practice?

- What's the difference between Claude Code and Cowork?

- Can I use Claude through Amazon Bedrock or Google Cloud?

- How do I estimate my token usage before committing?

- What's the Batch API and when should I use it?

- Conclusion

How Claude Pricing Has Evolved

Understanding where pricing stands today requires context on how it got here. Claude’s pricing has evolved significantly since Anthropic’s initial API launch.

In 2023, Anthropic launched Claude’s API with a relatively simple pricing structure — a single model at a single price point. The introduction of the Claude 3 family (Opus, Sonnet, Haiku) in March 2024 created the tiered model pricing structure that persists today, giving developers the ability to choose between capability and cost.

The consumer subscription landscape shifted dramatically in 2025 with the introduction of the Max plan tiers ($100 and $200/month), acknowledging that a significant segment of users needed more capacity than Pro provided. The Pro plan itself stabilized at $20/month, matching ChatGPT Plus pricing and establishing an industry-standard price point for premium AI subscriptions.

The most consequential change came in April 2026, when Anthropic restructured Enterprise pricing from flat-rate per-seat billing to usage-based billing. This shift — charging $20/month per seat plus API-rate token consumption — represents a fundamental philosophical change in how Anthropic monetizes enterprise adoption. It aligns costs with actual usage but introduces cost unpredictability that some organizations find challenging.

Throughout this evolution, API prices have dropped dramatically. LLM API prices fell roughly 80% across the board from 2024 to 2026, driven by more efficient models, hardware improvements, and intense competitive pressure from OpenAI, Google, and emerging providers like DeepSeek.

The key takeaway: Claude’s pricing is not static. Whatever you decide today, plan to revisit quarterly as new models, pricing tiers, and competitive dynamics reshape the landscape.

Understanding Claude’s Model Lineup

Before diving into pricing, you need to understand what you’re paying for. Anthropic currently offers three model families, each positioned for different use cases and budgets.

Claude Opus 4.6: The Flagship

Opus 4.6 is Anthropic’s most capable model. It leads benchmarks in complex multi-step reasoning, long-form writing, and software engineering tasks. Opus 4.6 supports a 1 million token context window — enough to process approximately 1,500 pages of text, 30,000 lines of code, or over an hour of video in a single prompt. This is the model you use when accuracy and reasoning depth matter more than speed or cost.

Opus is available on Pro, Max, Team (Premium seats), and Enterprise plans. It is not available on the Free tier. Via the API, Opus 4.6 costs $5 per million input tokens and $25 per million output tokens.

Claude Sonnet 4.6: The Workhorse

Sonnet 4.6 is the model most people interact with daily. It’s fast enough for real-time applications while being capable enough for most professional tasks — writing, analysis, coding, and conversation. Sonnet 4.6 also supports the full 1 million token context window.

Sonnet is available on every plan, including the Free tier. Via the API, Sonnet 4.6 costs $3 per million input tokens and $15 per million output tokens.

Claude Haiku 4.5: The Speed Tier

Haiku 4.5 is optimized for speed and cost efficiency. At $1 per million input tokens and $5 per million output tokens via the API, it’s ideal for classification, data extraction, high-volume processing, and any workflow where cost matters more than nuance. Haiku doesn’t sacrifice quality as dramatically as you might expect — it handles structured tasks remarkably well.

Haiku is primarily accessed through the API. It’s the model of choice for production workloads where you’re processing thousands or millions of requests and every token counts.

Individual Plans: Free, Pro, and Max

The Free Plan

Claude’s Free plan is surprisingly capable for a zero-cost tier. As of April 2026, Free users get access to Sonnet 4.6 (not Opus), along with features that many competitors lock behind paid tiers: memory across conversations, web search, extended thinking, code execution, desktop extensions, MCP connectors, projects, artifacts, and file uploads (up to 20 files per chat, 30 MB maximum).

The primary limitation is usage volume. Free users get approximately 10-40 messages per 5-hour rolling window, depending on conversation complexity and current platform demand. Longer, more complex messages consume more of your quota. Anthropic doesn’t publish a fixed message count — usage limits are session-based and reset every five hours.

Who it’s for: Casual users, people evaluating Claude before committing to a paid plan, and light-usage professionals who need an AI assistant a few times per day. If you’re using Claude for occasional research, drafting, or brainstorming, the Free tier may be all you need.

What you don’t get: Opus model access, Claude Code, and the higher usage volumes that sustained professional work requires. If you find yourself hitting rate limits regularly, it’s time to upgrade.

Claude Pro — $20/Month ($17/Month Annual)

Pro is where Claude becomes a real productivity tool. For $20/month (or $17/month with annual billing at $200 upfront), you get:

- Access to both Sonnet 4.6 and Opus 4.6 — the full model lineup for chat-based use

- Claude Code — Anthropic’s terminal-based coding agent that can read, write, and refactor code across your project

- Claude Cowork — the desktop agent that can read, edit, and create files in folders you specify, open applications, navigate your browser, and deliver finished work products

- Significantly higher usage limits — roughly 5x the Free tier’s capacity per 5-hour window

- Priority access during peak demand periods

- All Free tier features — memory, web search, extended thinking, code execution, projects, artifacts, file uploads

The Pro plan is the inflection point where Claude transitions from a helpful chatbot to an integrated work tool. Claude Code alone — which lets you delegate coding tasks directly from your terminal — can save hours per week for developers. Cowork, which became available to Pro subscribers in January 2026, extends this agentic capability to knowledge work: creating documents, filling spreadsheets, and managing files.

Who it’s for: Individual professionals who use Claude regularly throughout the workday — developers, writers, analysts, data professionals, and anyone who needs consistent, reliable AI assistance. If you’re using Claude more than a few times per day, Pro pays for itself quickly.

Real-world value: At $20/month, Pro needs to save you roughly 15-20 minutes of work per month to break even (assuming a $75-100/hour effective rate). In practice, most professionals report saving hours per week, making this one of the highest-ROI subscriptions in the productivity tool landscape.

Claude Max — $100/Month (5x) or $200/Month (20x)

Max is for power users who hit Pro limits regularly. It comes in two tiers:

Max 5x ($100/month): Everything in Pro, with 5x the usage capacity. Independent testing suggests this translates to roughly 225 messages per 5-hour window. You also get maximum priority queue placement, ensuring consistent performance regardless of platform demand.

Max 20x ($200/month): Everything in Max 5x, with 20x Pro’s usage capacity — approximately 900 messages per 5-hour window. At this level, rate limits effectively stop being a concern for most full-day professional work.

Both tiers have identical feature sets. The only difference is the usage quota. Features include everything from Pro plus higher output limits and early access to new Claude features and models.

Who it’s for: Developers using Claude Code extensively (the average Claude Code user costs about $6 per developer per day in compute, with 90% staying under $12/day), professionals doing sustained multi-hour work sessions, and anyone who finds Pro’s usage limits interrupting their workflow.

The cost comparison that matters: One developer reported using 10 billion tokens over eight months via the API — estimated cost exceeded $15,000. The same usage on Max at $100/month for eight months would have cost $800. That’s a 93% savings. If your usage is heavy and sustained, Max is dramatically cheaper than API pay-per-token for individual use.

Team Plans

Team plans are designed for organizations of 5-150 people who need collaborative AI capabilities with administrative controls.

Standard Seats — $25/Month ($20/Month Annual)

Standard seats include all core Claude features — chat with Sonnet and Opus, web search, projects, artifacts, file uploads, and team-level collaboration features. However, Standard seats do not include Claude Code or Cowork.

Premium Seats — $150/Month ($100/Month Annual)

Premium seats add Claude Code and Cowork access with 5x more usage than Standard seats. This is the tier for developers, data engineers, and knowledge workers who need the full agentic toolkit.

Team Plan Features

Beyond individual seat features, Team plans include:

- Administrative controls — user management, usage monitoring, and policy settings

- Shared projects and knowledge bases — teams can collaborate on projects and share context

- Centralized billing — single invoice for all seats

- Mix and match — you can combine Standard and Premium seats within the same team, so not every user needs the expensive tier

Who it’s for: Small to mid-size teams that want Claude’s capabilities with team-level administration. The minimum is 5 seats, and you can mix Standard and Premium seats to optimize costs — give Premium seats to developers and power users, Standard seats to everyone else.

Pricing math: A team of 10 with 3 Premium seats and 7 Standard seats costs $440/month on annual billing ($300 Premium + $140 Standard). Compare that to 10 individual Pro subscriptions at $200/month — the Team plan costs more, but you get administrative controls, collaboration features, and higher usage for your power users.

Enterprise Plans

Enterprise pricing underwent a significant change in April 2026. Previously, Anthropic offered flat-rate Enterprise subscriptions at up to $200 per user per month. The new model shifts to usage-based billing on top of a $20/month per-seat base fee.

What Changed

Under the new structure, Enterprise customers pay a $20/month seat fee plus usage charges at API token rates. Organizations commit to estimated monthly consumption upfront. This means costs scale with actual usage rather than being capped at a flat per-user rate.

The rationale, according to Anthropic, is that the flat-fee model created mismatches: some heavy users hit usage limits that interrupted their work, while others didn’t fully use the capacity they paid for. Usage-based billing aligns costs with actual consumption.

What This Means in Practice

The impact depends on your usage patterns. For organizations with moderate, predictable usage, costs may stay similar or even decrease. For heavy users — particularly teams running extensive Claude Code sessions or processing large volumes through Cowork — costs could double or triple compared to the previous flat-rate model, according to enterprise licensing analysts.

Enterprise Features

Enterprise plans include capabilities not available on lower tiers:

- SSO and SCIM — single sign-on and automated user provisioning

- Dedicated support — priority support channels and account management

- Custom deployment options — tailored integrations and configurations

- Advanced admin controls — granular permissions, usage policies, and audit logging

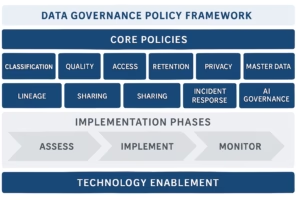

- Data governance — enterprise-grade data handling and privacy controls

- Custom usage guarantees — negotiated throughput and availability commitments

Who it’s for: Organizations with more than 150 users, those requiring SSO/SCIM integration, regulated industries needing custom data governance controls, and companies that need negotiated SLAs. Contact Anthropic’s sales team for pricing — typical deployments range from $500-1,000/month for small teams (10-25 users) to $5,000-15,000+/month for large organizations (100+ users), depending on usage volume.

Navigating the Enterprise Pricing Transition

If you’re currently on or evaluating a Claude Enterprise plan, the April 2026 transition demands careful analysis. Here’s a practical framework for assessing the impact.

Step 1: Audit current usage. Before the transition, document your organization’s actual token consumption per user, per team, and per use case. Most organizations discover that usage is highly concentrated — a small percentage of users consume the majority of tokens. Understanding this distribution is critical for forecasting costs under the new model.

Step 2: Categorize users by consumption tier. Segment your users into light (occasional chat interactions), moderate (daily professional use), and heavy (Claude Code, Cowork, extensive document processing). Light users may cost $25-40/month under usage-based billing (the $20 seat fee plus minimal token consumption). Moderate users may cost $50-100/month. Heavy users — particularly developers running Claude Code full-time — could exceed $200/month.

Step 3: Negotiate consumption commitments. The new model allows organizations to commit to estimated monthly consumption upfront. Higher commitments may secure better per-token rates, but overcommitting wastes money. Use your usage audit to set realistic baselines, and negotiate quarterly review cycles to adjust commitments as patterns stabilize.

Step 4: Implement usage monitoring. Under flat-rate pricing, monitoring was optional — you paid the same regardless. Under usage-based billing, monitoring is essential. Set up per-team and per-user dashboards, establish spending alerts, and create governance policies around AI usage. This is particularly important for organizations in regulated industries where data governance best practices extend to AI tool governance.

Step 5: Evaluate hybrid approaches. Consider whether some users are better served by individual Max subscriptions ($100-200/month with predictable flat-rate billing) while others stay on Enterprise (usage-based with administrative controls). This hybrid approach can optimize total cost while maintaining governance requirements for users who need Enterprise features.

The enterprise licensing analysts who have modeled this transition estimate that costs will roughly stay the same for moderate-usage organizations, increase 50-100% for heavy-usage organizations, and potentially decrease for light-usage organizations that were overpaying under flat-rate pricing. The organizations most at risk are those with large developer teams running Claude Code extensively — these users generate significant token volumes that were previously absorbed by the flat rate.

API Pricing: Pay-Per-Token

The API is how developers integrate Claude into applications, pipelines, and automated workflows. Pricing is per-token, where a token is approximately 4 characters or 0.75 words in English.

Current API Token Pricing (April 2026)

| Model | Input (per 1M tokens) | Output (per 1M tokens) |

|---|---|---|

| Claude Opus 4.6 | $5.00 | $25.00 |

| Claude Sonnet 4.6 | $3.00 | $15.00 |

| Claude Haiku 4.5 | $1.00 | $5.00 |

All three models support the full 1 million token context window at standard pricing — there’s no premium surcharge for longer contexts, which is a significant change from earlier pricing structures.

Extended Thinking Tokens

Extended thinking — Claude’s ability to reason through complex problems step by step before responding — generates additional tokens that are billed at standard output rates. There’s no separate pricing tier for thinking tokens; they’re simply output tokens.

Budget 5,000-10,000 thinking tokens for most use cases and 20,000+ for genuinely hard problems. Since thinking tokens are output tokens, costs add up: 10,000 thinking tokens on Sonnet 4.6 costs approximately $0.15. Extended thinking adds roughly 4-5x cost overhead compared to standard requests, so only enable it when the quality improvement justifies the cost.

Real-World Cost Examples

Customer support chatbot (Haiku 4.5): 1,000 input tokens + 500 output tokens per interaction = ~$0.0035 per conversation. At 10,000 conversations/month: ~$35/month.

SEO content generation (Haiku 4.5 + Batch API): 20 million input tokens + 10 million output tokens per month = $70/month at standard rates, ~$35/month with Batch API discount.

Code review pipeline (Sonnet 4.6): 5,000 input tokens + 2,000 output tokens per review, 500 reviews/month = $22.50 input + $15 output = $37.50/month.

Enterprise document analysis (Opus 4.6): 50,000 input tokens + 10,000 output tokens per document, 100 documents/month = $25 input + $25 output = $50/month.

Cost Optimization: Cutting Your API Bill by Up to 95%

Anthropic provides three major cost optimization mechanisms that can dramatically reduce API expenses. Used together, they can cut costs by up to 95% on eligible workloads.

Prompt Caching

Prompt caching lets you store frequently used context (system prompts, reference documents, few-shot examples) so subsequent requests don’t need to re-process the same input tokens.

How it’s priced:

| Cache Operation | Cost Multiplier |

|---|---|

| 5-minute cache write | 1.25x base input price |

| 1-hour cache write | 2x base input price |

| Cache read (hit) | 0.1x base input price |

The math works in your favor fast: a 5-minute cache pays for itself after just one cache read. A 1-hour cache pays for itself after two reads. For workloads where multiple requests share the same large context — agent workflows, document Q&A, coding assistants — caching reduces input costs by up to 90%.

Important change (February 2026): Prompt caching now uses workspace-level isolation instead of organization-level isolation. Caches are isolated per workspace, which improves data separation but means different workspaces within the same organization don’t share cache benefits.

Batch API

The Batch API processes requests asynchronously with a guaranteed 24-hour turnaround in exchange for a flat 50% discount on all token costs — both input and output.

When to use it: Any workload that doesn’t require real-time responses — bulk content generation, large-scale data classification, batch document processing, overnight report generation, and dataset enrichment. If your workflow can tolerate a delay of minutes to hours, the Batch API cuts your costs in half with zero effort.

Combined Optimization

Batch API and prompt caching discounts stack. Here’s what that looks like in practice:

A standard Sonnet 4.6 request with 10,000 input tokens and 2,000 output tokens costs $0.06 at base rates. With prompt caching (90% input reduction) + Batch API (50% off everything), the same request costs approximately $0.003 — a 95% reduction.

For enterprise workloads processing millions of tokens daily, this stacking can reduce monthly API bills from thousands of dollars to hundreds.

Other Optimization Strategies

Model routing: Don’t use Opus for tasks that Sonnet or Haiku can handle. Route simple classification and extraction tasks to Haiku ($1/$5 per million tokens), use Sonnet for most professional tasks ($3/$15), and reserve Opus ($5/$25) for tasks that genuinely require its superior reasoning. This alone can reduce costs by 50-80% without meaningful quality loss on routed tasks.

Prompt engineering: Shorter, more precise prompts reduce input token costs. Asking for concise outputs reduces output token costs. The difference between a well-crafted prompt and a verbose one can be 2-5x in token usage for the same result quality.

Context management: For long conversations, summarize earlier context rather than sending the full conversation history with every request. A 100,000-token conversation that could be summarized to 5,000 tokens saves 95% on input costs for each subsequent message.

Comparing Claude to Competitors

Pricing doesn’t exist in a vacuum. Here’s how Claude stacks up against the major alternatives as of April 2026.

Consumer Plan Comparison

| Plan | Claude | ChatGPT (OpenAI) | Gemini (Google) |

|---|---|---|---|

| Free | Sonnet 4.6, ~10-40 msg/5hr | GPT-4o mini, ads in US | Gemini 2.5 Flash |

| Standard Paid | Pro $20/mo | Plus $20/mo | Advanced $20/mo |

| Premium | Max $100-200/mo | Pro $200/mo | N/A |

| Team | $20-100/seat/mo | $25-30/seat/mo | Workspace plans |

At the $20/month tier, Claude Pro and ChatGPT Plus are directly comparable. Both provide access to their respective flagship models, extended reasoning capabilities, and coding tools. The feature sets differ — Claude includes Cowork (agentic desktop automation) and MCP connectors; ChatGPT includes DALL-E image generation and a broader plugin ecosystem.

A significant differentiator: since February 2026, ChatGPT displays ads to users on the Free and Go ($8/month) tiers in the United States. Claude does not display ads on any tier.

At the premium tier, Claude Max 5x at $100/month offers a lower entry point than ChatGPT Pro at $200/month. However, ChatGPT Pro promises unlimited GPT-5.4 access, while Claude Max 5x has defined usage limits (5x Pro). Claude Max 20x at $200/month provides 20x usage at the same price as ChatGPT Pro.

API Pricing Comparison

| Model Tier | Claude | OpenAI | Google Gemini |

|---|---|---|---|

| Budget | Haiku 4.5: $1/$5 | GPT-4.1 Nano: $0.10/$0.40 | Flash-Lite 2.5: $0.10/$0.40 |

| Mid-range | Sonnet 4.6: $3/$15 | GPT-4.1: $2/$8 | Gemini 2.5 Pro: $1.25/$5 |

| Flagship | Opus 4.6: $5/$25 | GPT-5.2: $1.75/$14 | Gemini 3.1 Pro: $2/$12 |

At standard rates, OpenAI and Google are generally cheaper per token, particularly at the budget tier where OpenAI’s GPT-4.1 Nano ($0.10/$0.40) dramatically undercuts Claude’s cheapest option, Haiku 4.5 ($1/$5).

However, raw per-token pricing doesn’t tell the full story:

For cache-heavy workloads, all three providers offer approximately 90% caching discounts, making effective costs much closer across providers.

For batch workloads, Claude’s 50% Batch API discount narrows the gap significantly. Combined with caching, Claude’s effective per-token cost approaches competitors.

For quality-sensitive tasks, model capability matters more than per-token price. If Opus 4.6 produces a usable result in one request where a cheaper model requires three iterations, the “expensive” model is actually cheaper in total cost.

LLM API prices dropped roughly 80% across the board from 2024 to 2026. All providers are competing aggressively on price, and the gaps are narrowing with each model generation.

DeepSeek and Open-Source Alternatives

The competitive landscape isn’t limited to the big three. DeepSeek has emerged as a significant price competitor, offering high-capability models at substantially lower API rates. Open-weight models like Llama (Meta), Mistral, and Qwen can be self-hosted, eliminating per-token API costs entirely in exchange for infrastructure management overhead.

For organizations with the engineering capacity to run self-hosted models, the cost calculus shifts dramatically. A dedicated GPU instance running an open-weight model costs a fixed monthly fee regardless of token volume — making it cheaper than API pricing at high volumes while introducing operational complexity that smaller teams can’t justify.

The practical guidance: if your monthly API spend exceeds $2,000-5,000 and you have MLOps capacity, evaluate self-hosted alternatives for your highest-volume workloads. Keep Claude API access for tasks that require frontier-model reasoning quality (complex analysis, nuanced writing, advanced code generation) where open-weight models don’t match performance.

The Right Framework for Comparison

Don’t compare per-token prices in isolation. Compare total cost of ownership for your specific use case:

- Cost per successful task completion — not cost per token

- Developer time saved — a model that reduces iteration cycles saves money even if tokens cost more

- Integration costs — switching providers has real engineering costs

- Feature value — Claude Code, Cowork, and MCP connectors provide capabilities that may eliminate the need for separate tools

Which Plan Should You Choose?

Here’s a decision framework based on usage patterns and organizational context.

For Individual Users

Choose Free if: You use AI a few times per day for quick questions, drafting, or brainstorming. You don’t need Opus or Claude Code. You’re evaluating Claude before committing to a paid plan.

Choose Pro ($20/mo) if: You use Claude regularly throughout the workday. You need Opus for complex reasoning tasks. You want Claude Code for development work or Cowork for knowledge work. You’re a professional who values consistent, reliable access. This is the sweet spot for most individual professionals.

Choose Max 5x ($100/mo) if: You hit Pro rate limits multiple times per week. You run extended Claude Code sessions daily. You need priority access during peak hours. Your work involves sustained, multi-hour AI collaboration.

Choose Max 20x ($200/mo) if: You use Claude Code as your primary development environment. You run continuous, all-day AI workflows. Rate limits at any lower tier interrupt your productivity. You’re a power user who needs near-unlimited access.

For Teams

Choose Team Standard seats ($20-25/mo) for: Business users, analysts, and managers who need AI chat capabilities without Claude Code or Cowork.

Choose Team Premium seats ($100-150/mo) for: Developers, data engineers, and power users who need Claude Code and Cowork with higher usage limits.

Mix and match: Most teams should have a minority of Premium seats (developers, power users) and a majority of Standard seats (everyone else). This optimizes costs while ensuring the right people have the right tools.

For Enterprises

Choose Enterprise if: You have 150+ users, require SSO/SCIM, need custom data governance controls, operate in regulated industries, or need negotiated SLAs. Be aware that the April 2026 shift to usage-based billing means costs are less predictable than the previous flat-rate model — budget conservatively and monitor usage closely.

For Developers Building Applications

Choose the API if: You’re integrating Claude into applications, pipelines, or automated workflows. Select your model based on the task:

- Haiku 4.5 for high-volume, cost-sensitive tasks (classification, extraction, routing)

- Sonnet 4.6 for most application-level tasks (chat, analysis, content generation)

- Opus 4.6 for complex reasoning, code generation, and tasks where quality is paramount

Use prompt caching and the Batch API aggressively. Route requests to the cheapest model that meets your quality bar. Monitor costs daily during development and set billing alerts.

Hidden Costs and Gotchas

Every pricing structure has nuances that the headline numbers don’t capture. Here are the ones that matter most for Claude.

Rolling Window Limits

Claude’s usage limits reset on a rolling 5-hour window, not daily. This means a heavy morning session will recover capacity by afternoon, but it also means there’s no way to “bank” unused capacity. If you don’t use your allocation, it’s gone.

Peak Hour Throttling

Free and Pro users experience more aggressive rate limiting during peak demand hours. Max subscribers get priority queue placement, which provides more consistent performance. If your work is time-sensitive and you can’t afford throttling delays, Max may be worth the premium for the priority access alone.

Extended Thinking Costs

Extended thinking tokens are billed as output tokens, which are 3-5x more expensive than input tokens depending on the model. A workflow that heavily uses extended thinking will have significantly higher costs than the same workflow without it. Monitor thinking token usage in your API dashboard and only enable extended thinking when the quality improvement justifies the 4-5x cost overhead.

Enterprise Usage-Based Billing

The April 2026 shift to usage-based Enterprise billing removes the cost predictability that flat-rate pricing provided. Organizations need to forecast usage carefully, set monitoring alerts, and potentially negotiate usage commitments with Anthropic. The loss of volume discounts and the move to committed consumption raises total cost of ownership for many customers, particularly teams with variable usage patterns.

Third-Party Framework Restrictions

As of April 2026, Anthropic blocked Pro and Max subscribers from using their flat-rate plans with third-party AI agent frameworks. If your workflow depends on routing Claude through external orchestration tools, you may need to use the API (pay-per-token) instead of your subscription plan. This affects cost calculations for teams using tools like LangChain, CrewAI, or similar frameworks.

Context Window Costs

While there’s no surcharge for using the full 1 million token context window, longer contexts mean more input tokens, which means higher costs. A 200,000-token prompt costs 20x what a 10,000-token prompt costs. Be intentional about context length — only include what’s necessary for the task.

Building a Cost Model: Forecasting Your Claude Spend

Whether you’re justifying a team subscription or budgeting an API integration, a concrete cost model prevents surprises. Here’s how to build one.

Subscription Cost Modeling

For interactive use (chat, Claude Code, Cowork), the math is straightforward:

Individual users: Estimate your weekly message volume and complexity. If you consistently send more than 50 substantive messages per day (multi-paragraph questions, code reviews, document analysis), Pro will likely rate-limit you during heavy sessions. Track your rate-limit hits over two weeks of Pro usage — if you’re hitting limits more than twice per week, the upgrade to Max 5x is justified.

Teams: Start by categorizing users. In a typical data team of 15 people, you might have 3 developers (Premium seats at $100/month), 2 data engineers (Premium seats), 5 analysts (Standard seats at $20/month), and 5 business users (Standard seats). That’s $600/month for Premium + $200/month for Standard = $800/month total. Compare that to 15 individual Pro subscriptions at $300/month — the Team plan costs more but provides administrative controls and higher usage for power users.

Enterprise: Under the new usage-based model, forecast by estimating tokens per user per month. Light users generate roughly 500K-2M tokens/month. Moderate users generate 5M-20M tokens/month. Heavy Claude Code users can generate 50M-200M+ tokens/month. Multiply token volumes by the relevant model’s per-token rate, add $20/seat/month, and compare against the previous flat-rate pricing to understand the impact.

API Cost Modeling

For programmatic workloads, build your cost model around three variables:

Volume: How many requests per day/month? What’s the average input and output token count per request?

Model mix: What percentage of requests go to each model tier? Route appropriately — most workloads should have 60-80% of requests on Haiku or Sonnet, with Opus reserved for complex tasks.

Optimization uptake: What percentage of requests can benefit from caching? What percentage can tolerate batch processing delays? Be realistic — not all workloads are cache-friendly, and some require real-time responses.

Example model: A data quality monitoring pipeline that processes 10,000 data asset descriptions per month:

| Component | Tokens | Model | Cost |

|---|---|---|---|

| Asset classification (10K requests) | 500 input + 200 output each | Haiku 4.5 | $6.00 input + $10.00 output = $16.00 |

| PII detection (10K requests) | 1,000 input + 300 output each | Sonnet 4.6 | $30.00 input + $45.00 output = $75.00 |

| Complex governance review (500 requests) | 5,000 input + 2,000 output each | Opus 4.6 | $12.50 input + $25.00 output = $37.50 |

| Total monthly cost | $128.50 | ||

| With 50% Batch API discount | $64.25 | ||

| With caching + batch | ~$30-40 |

This kind of modeling removes guesswork and provides the concrete numbers your finance team needs to approve the spend.

Claude Pricing for Data Teams: A Practitioner’s Perspective

As someone who works across data governance, data management, and AI implementation, I see specific pricing considerations that matter for data teams.

Data Governance and Cataloging Use Cases

If you’re using Claude to assist with data governance work — writing policies, analyzing data dictionaries, generating documentation, reviewing compliance frameworks — the Pro plan is typically sufficient. These are interactive tasks where you’re collaborating with Claude in conversation, and Pro’s usage limits handle them comfortably.

For teams doing this work at scale — generating documentation for hundreds of data assets, reviewing large regulatory documents, or processing data quality reports — the API with Haiku or Sonnet provides the most cost-effective approach. A data catalog enrichment pipeline processing 1,000 asset descriptions per month using Sonnet 4.6 costs roughly $15-30 in API tokens.

Claude Code for Data Engineering

Data engineers using Claude Code to write SQL, build dbt models, create Airflow DAGs, or debug pipeline issues should consider Max 5x at minimum. Claude Code sessions are compute-intensive — the average developer uses about $6/day in API-equivalent compute. At that rate, a Pro subscription runs out of capacity mid-day, while Max 5x provides comfortable headroom.

Enterprise Data Platform Evaluation

For organizations evaluating Claude as part of their enterprise data platform — integrating it into data catalogs, governance workflows, or quality monitoring systems — the API is the right choice. Build cost models based on your expected token volumes, factor in caching and batch discounts, and run a pilot before committing to production volumes.

In my experience implementing data governance programs at the VA and in financial services at Wells Fargo, the AI tooling cost is rarely the bottleneck — it’s the integration and change management effort. A $50/month API bill that saves a data steward 10 hours of manual cataloging work per month is an obvious win. Focus on value delivered, not raw token costs.

Collibra and Profisee Integration Costs

If your organization uses Collibra for data governance or Profisee for master data management, understanding how Claude API costs layer on top of your existing platform spend is essential. During a Collibra implementation at the VA, I saw firsthand how much manual effort goes into populating business glossaries, writing data asset descriptions, and maintaining governance documentation. An API integration that uses Claude to auto-generate and enrich this metadata pays for itself within the first month.

For MDM workflows at Nestle Purina, the entity resolution and data matching work that consumed weeks of manual effort per quarter could be augmented with Claude’s API at a fraction of the cost. The key is routing the right tasks to the right model tier: use Haiku for high-volume matching and classification, Sonnet for documentation and description generation, and Opus only when you need complex reasoning about data relationships and lineage.

Building the Business Case for Your CFO

Data leaders often struggle to justify AI tooling costs because the value is diffuse — it shows up as time savings across many tasks rather than a single dramatic cost reduction. Here’s how I frame it:

Quantify the current manual cost. How many hours per week does your team spend on tasks that AI can accelerate? Data cataloging, documentation writing, policy review, quality rule creation, and governance reporting all have measurable labor costs. At a fully loaded cost of $75-150/hour for a data governance professional, even modest time savings justify the subscription.

Frame it as a data governance vs data management enabler. AI tooling doesn’t replace governance or management work — it makes both more achievable. The Pro plan at $20/month per user, or the API at $50-200/month for team-wide access, is a rounding error compared to the salary costs of the team doing the work. The question isn’t “can we afford Claude?” — it’s “can we afford not to have it?”

Start with a pilot. Don’t ask for budget for a full enterprise deployment. Ask for 3-5 Pro subscriptions for a pilot team over 60 days. Track time savings, output quality, and user satisfaction. Use the pilot data to build the full business case. In every organization where I’ve seen this approach, the pilot team becomes the most vocal advocate for broader adoption.

Frequently Asked Questions

How many messages can I send on the Free plan?

Approximately 10-40 messages per 5-hour rolling window, depending on message complexity and current platform demand. Anthropic doesn’t publish a fixed number — usage limits are dynamic and session-based.

Is Claude Code included with Pro?

Yes. As of 2026, Claude Code is included with Pro ($20/month), Max ($100-200/month), and Team Premium seats ($100-150/month). It is not available on the Free plan or Team Standard seats.

Can I use Opus on the Free plan?

No. Opus 4.6 is only available on Pro, Max, Team, and Enterprise plans. Free users are limited to Sonnet 4.6.

How does Claude’s 1 million token context window affect pricing?

There’s no premium surcharge for using longer contexts. The 1 million token window is available at standard per-token rates for Opus 4.6 and Sonnet 4.6. However, longer contexts mean more input tokens processed, which increases costs proportionally.

What’s the cheapest way to use Claude for high-volume workloads?

Use Haiku 4.5 via the API with both prompt caching and the Batch API enabled. This gives you a 90% reduction on cached input tokens plus a 50% reduction on all remaining costs. Combined, this can reduce effective costs by up to 95%.

How does the April 2026 Enterprise pricing change affect existing customers?

Existing Enterprise customers are transitioning from flat-rate per-user pricing (up to $200/user/month) to usage-based billing ($20/seat/month + API-rate token charges). Analysts estimate this could double or triple costs for heavy users. Organizations should audit their current usage patterns and negotiate consumption commitments carefully.

Is Claude Cowork available on all paid plans?

Yes. As of January 2026, Cowork is available on Pro, Max, and Team Premium seats. Cowork is currently macOS only — there’s no Windows or Linux version.

How do I choose between the API and a subscription plan?

If you’re using Claude interactively (chat, Claude Code, Cowork), a subscription plan is almost always cheaper. If you’re building applications or running automated workloads, the API provides more control and potentially lower costs at scale. The breakeven point varies, but as a rule of thumb: if you’d spend more than $200/month on the API for interactive use, Max 20x is cheaper.

Can I mix Standard and Premium seats on the Team plan?

Yes. You can mix and match seat types within a Team plan. The minimum is 5 total seats, but they can be any combination of Standard and Premium.

Does Claude display ads?

No. Claude does not display ads on any tier, including the Free plan. This is a notable differentiator from ChatGPT, which began displaying ads to Free and Go tier users in the US in February 2026.

What happens if I exceed my usage limits?

On subscription plans (Free, Pro, Max), you’ll see a message indicating you’ve reached your limit and need to wait for the rolling 5-hour window to reset. You’re not charged extra — you simply can’t send messages until capacity recovers. On the API, you’re billed per token with no hard caps (though you can set billing alerts and spending limits).

Are there any student or nonprofit discounts?

Anthropic hasn’t publicly announced dedicated student or nonprofit pricing tiers as of April 2026. The Free plan provides substantial capabilities at no cost, and some educational institutions may qualify for Enterprise pricing discussions through Anthropic’s sales team.

How does prompt caching work in practice?

When you send a request with a cache-enabled system prompt or context block, Anthropic stores those tokens for reuse. Subsequent requests that include the same cached prefix pay only 10% of the standard input price for those tokens. A 5-minute cache costs 1.25x to write (and pays for itself after one read), while a 1-hour cache costs 2x to write (pays for itself after two reads). This is most valuable for agent workflows, RAG pipelines, and any use case where multiple requests share the same large context.

What’s the difference between Claude Code and Cowork?

Claude Code is a terminal-based coding agent — you run it from your command line and it reads, writes, and refactors code across your project. Cowork is a desktop agent that operates on your files, applications, and browser to complete knowledge work tasks. Both are included in Pro and higher plans. Claude Code runs on macOS, Windows, and Linux. Cowork is currently macOS only.

Can I use Claude through Amazon Bedrock or Google Cloud?

Yes. Claude models are available through Amazon Bedrock and Google Cloud’s Vertex AI. Pricing through these cloud providers may differ from Anthropic’s direct API pricing — typically cloud provider pricing includes a margin on top of Anthropic’s base rates, but you benefit from unified billing, existing cloud credits, and your cloud provider’s infrastructure. Check your cloud provider’s pricing page for current rates.

How do I estimate my token usage before committing?

A token is approximately 4 characters or 0.75 words. A typical business email is roughly 200-500 tokens. A 1,000-word document is approximately 1,300 tokens. A full page of code is roughly 500-800 tokens. Use Anthropic’s token counting tools or third-party calculators to estimate your workload before committing to a plan or API budget.

What’s the Batch API and when should I use it?

The Batch API processes requests asynchronously within a 24-hour window at a 50% discount on all token costs. Use it for any workload that doesn’t need real-time responses: bulk content generation, large-scale classification, overnight report processing, or dataset enrichment. You submit a batch of requests, and Anthropic processes them at optimal times (usually much faster than 24 hours) at half the standard price.

Conclusion

Claude’s pricing in 2026 offers genuine flexibility — from a surprisingly capable Free tier to enterprise-scale deployments — but that flexibility comes with complexity. The right choice depends on your usage patterns, your team’s size, and whether you’re using Claude interactively or building applications on top of it.

For most individual professionals, Pro at $20/month is the clear starting point. It unlocks the full model lineup, Claude Code, and Cowork at a price that pays for itself in minutes of saved work. For developers and power users who regularly hit limits, Max provides generous headroom at $100-200/month. For teams, mixing Standard and Premium seats optimizes costs. For enterprises, the shift to usage-based billing demands careful forecasting but aligns costs with actual value delivered.

On the API side, Anthropic isn’t the cheapest per-token option — OpenAI and Google both offer lower base rates — but the combination of model quality, prompt caching, and Batch API discounts makes Claude competitive on total cost of ownership. For workloads where reasoning quality matters, the per-token premium often pays for itself through fewer iterations and higher-quality outputs.

The AI pricing landscape is changing rapidly. LLM API prices dropped 80% from 2024 to 2026, and competitive pressure ensures this trend will continue. Whatever plan you choose today, revisit your decision quarterly — new model releases, pricing changes, and feature additions can shift the calculus significantly.

Start with the tier that matches your current usage, monitor your consumption, and upgrade or downgrade as your needs evolve. The worst pricing decision is the one you never revisit.